I’ve been an engineering leader for over a decade, and I’ve spent most of those years in private Slack groups with other engineering leaders, comparing strategies and kvetching about Kubernetes. Of the hundreds of threads I’ve taken part in, the one that got the most engagement the fastest was a recent one around AI adoption.

“Where are you on this continuum?” it read. “A. You don’t really care how people use AI; B. You push people to use AI; or C. You mandate it?”

The response was downright frothy. People replied, people debated, and the thread unspooled for what felt like miles. The clear winner? Option C, “You mandate it.”

It’s not often that engineering leaders mandate any single approach to building software. That’s partly because we’re stubborn; we find something that works, and we don’t like someone forcing us to change.

But it’s also because the consequences of any mandate are impossible to predict. It might not produce the outcomes you want, it might frustrate many of your engineers in the process, and it might turn a well-oiled engineering organization into a regiment of resentful coders with minimal work flowing through.

So, what’s different about AI? Why are we mandating it?

Two reasons: the AI promise, and the (current) AI reality.

The AI Promise: Everything’s Gonna Change

The promise is that AI is going to change everything in every realm of human life, software development especially. GitHub saw merged pull requests jump 29% year-over-year in 2025, and some studies show AI-heavy teams handling nearly twice as many PRs per day. The bull case is 10x, 50x, or even 100x improvements in shipping velocity.

It’s a bold prediction, but one I agree with. That’s partly because of what I observe with AI, and partly because I remember the shift from on-prem software to SaaS. There were naysayers and there were late adopters, yet the earliest, most innovative SaaS companies developed novel business models and tapped into larger addressable markets than ever before. I expect AI to do the same.

The (Current) AI Reality: Well, Not Yet

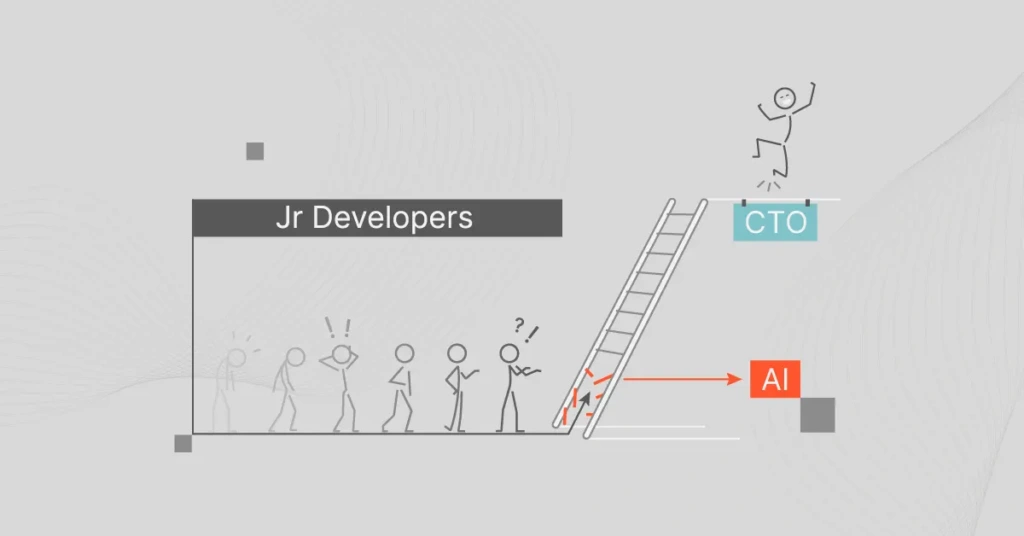

If you believe the press releases, AI is already writing itself. If that’s true, it hasn’t diffused through the rest of the industry yet. We haven’t seen a slew of low-overhead, fast-moving startups replace the SaaS incumbents. We’re not seeing companies like Meta ship dozens of new offerings that quickly attract billions of new users. We’re seeing a lot of hype, a lot of spending, and, for the most part, unclear returns.

Meanwhile, the most rigorous study we have, a randomized controlled trial by METR, found that experienced developers using AI tools were actually 19% slower. Worse: They thought they were 20% faster. The volume is up, but the jury’s still out on whether we’re shipping more value or just more commits.

This gap between AI Promise and AI Reality is responsible for the so-called AI Bubble. Investors and executives have a fear of missing out (FOMO) on the next big thing, and so they’re pumping both money and pressure into engineering organizations at incredible volumes.

FOMO < JOMO

We wind up walking a fine line. On one side: hype envy. FOMO. Unstrategically throwing money at the problem. They’re investing $xyz; we’ve gotta triple it. It’s a disembodied, digital version of every suburbanite’s favorite game, keeping up with the Joneses.

On the other side is what a friend of mine calls JOMO: the joy of missing out, in this case on envy-driven AI investment.

During periods of severe technological disruption, a large portion of your investments is, by nature, experimental. You’re building what’s never been built before. You can’t perfectly anticipate how well it’ll work, how eagerly users will adopt it, or how much revenue it’ll generate.

But here’s the thing nobody in those Slack threads is talking about: What are you getting for your money?

Not in the abstract, hand-wavy “AI will transform everything” sense. In the concrete, “We spent $47k on Anthropic API calls last month and here’s what that produced” sense.

The Question Nobody’s Asking

I’ve watched engineering leaders agonize over whether to mandate Copilot, Cursor, or Claude. I’ve seen LinkedIn thought leaders debate prompt engineering best practices for hours. What I almost never see them do is connect the cost of these tools to what they’re actually getting back.

This is wild to me. We would never greenlight a new microservice without understanding its resource footprint. We would never adopt a new database without modeling its cost at scale. But with AI? We’re just … vibing.

Part of this is understandable. AI costs are genuinely hard to track. Your engineers are hitting multiple providers through multiple channels: cloud-based, on-prem, direct APIs, and embedded in their IDEs. The bill shows up fragmented across a dozen line items, and none of them say, “Here’s what you got for this.”

Part of it is less understandable. It’s the same FOMO that drives the mandate in the first place. If you stop to measure, you might not like what you find. And if you don’t like what you find, you might have to slow down. And if you slow down, the Joneses pull ahead.

But that logic is backwards. The Joneses aren’t pulling ahead by spending more. They’re pulling ahead by spending better. And you can’t spend better if you can’t see what you’re spending on.

Two Things That Sound Boring But Aren’t

Look, I know “observability” and “ROI” aren’t words that set hearts racing. But in the current AI gold rush, they’re the difference between the miners who strike gold and the ones who just buy a lot of pickaxes.

You need to see where your AI dollars go. Not just the top-line number. You need to see it broken down by customer, product, feature, and team. When your AI costs spike 40% in a quarter (and they will), you need to know whether that spike came from a feature your users love or an experiment nobody’s touched in three weeks.
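
To make that concrete, here’s a minimal sketch of the kind of breakdown I mean. Everything in it is hypothetical: the record shape, the team and feature names, and the dollar amounts are invented for illustration, and in practice the records would come from billing exports tagged with your own metadata. It totals spend per quarter, then ranks which features drove the increase.

```python
from collections import defaultdict

# Hypothetical usage records. All names and amounts are made up.
RECORDS = [
    {"quarter": "Q1", "team": "search", "feature": "semantic-ranking", "cost_usd": 12000.0},
    {"quarter": "Q1", "team": "growth", "feature": "email-drafts", "cost_usd": 8000.0},
    {"quarter": "Q2", "team": "search", "feature": "semantic-ranking", "cost_usd": 13000.0},
    {"quarter": "Q2", "team": "growth", "feature": "email-drafts", "cost_usd": 8000.0},
    {"quarter": "Q2", "team": "labs", "feature": "summarizer-experiment", "cost_usd": 7000.0},
]


def spend_by(records, quarter, dimension):
    """Sum cost for one quarter, grouped by a dimension such as 'team' or 'feature'."""
    totals = defaultdict(float)
    for record in records:
        if record["quarter"] == quarter:
            totals[record[dimension]] += record["cost_usd"]
    return dict(totals)


def spike_contributors(records, prev_quarter, curr_quarter, dimension):
    """Return (key, delta) pairs, largest contributor to the increase first."""
    prev = spend_by(records, prev_quarter, dimension)
    curr = spend_by(records, curr_quarter, dimension)
    deltas = {key: curr.get(key, 0.0) - prev.get(key, 0.0) for key in set(prev) | set(curr)}
    return sorted(deltas.items(), key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    q1_total = sum(spend_by(RECORDS, "Q1", "team").values())
    q2_total = sum(spend_by(RECORDS, "Q2", "team").values())
    print(f"Quarter-over-quarter change: {(q2_total - q1_total) / q1_total:.0%}")
    for feature, delta in spike_contributors(RECORDS, "Q1", "Q2", "feature"):
        print(f"{feature}: {delta:+,.0f} USD")
```

In this made-up data, the top-line number says “up 40%,” but the breakdown says most of that came from a single experiment. That’s the difference between a bill and an answer.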

You need to connect costs to outcomes. The question isn’t, “How much did we spend on AI?” It’s, “Was it worth it?” That means integrating your cost data with your outcome data, whether that’s revenue, user engagement, deployment velocity, or whatever matters to your business. Not in a quarterly review. Programmatically. In real time.
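
As a rough sketch of what “programmatically” could look like, the snippet below joins cost per feature with an outcome count per feature and computes a unit cost. The feature names, the dollar figures, and the choice of “weekly active users” as the outcome are all assumptions for illustration; swap in whatever outcome matters to your business.

```python
# Hypothetical monthly AI cost per feature, in USD. All values are made up.
COSTS = {
    "semantic-ranking": 13000.0,
    "email-drafts": 8000.0,
    "summarizer-experiment": 7000.0,
}

# Hypothetical outcome metric per feature (here: weekly active users).
OUTCOMES = {
    "semantic-ranking": 26000,
    "email-drafts": 4000,
    # No entry for "summarizer-experiment": nobody has used it.
}


def cost_per_outcome(costs, outcomes):
    """Join cost and outcome data by feature.

    Returns cost per unit of outcome, or None when a feature has spend
    but no recorded outcome, which is its own kind of signal.
    """
    result = {}
    for feature, cost in costs.items():
        units = outcomes.get(feature, 0)
        result[feature] = cost / units if units else None
    return result


if __name__ == "__main__":
    for feature, unit_cost in cost_per_outcome(COSTS, OUTCOMES).items():
        if unit_cost is None:
            print(f"{feature}: spend with no measurable outcome")
        else:
            print(f"{feature}: ${unit_cost:.2f} per weekly active user")
```

The features that come back as `None` are the ones to look at first: money going out, nothing measurable coming back.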

This isn’t about optimizing prematurely. It’s about knowing which bets are paying off so you can double down on winners and cut losers before they compound.

Make The Joneses Keep Up With You

There are two races happening right now. The first is to see who can find the most durable, impactful uses for AI. That one matters. The second is to see who can spend the most, the fastest. That one doesn’t.

The engineering leaders who win the first race will be the ones who treat AI investment the way they treat every other engineering decision: with data, with rigor, and with a clear line from input to output.

That’s what we build at CloudZero. We allocate 100% of your cloud and AI costs, connect them to telemetry that ties spend to business outcomes, and give you a single place to see whether your AI investments are actually working. Not so you can spend less. So you can spend right.