Modern SaaS companies aren’t reporting weaker margins because they forgot to rightsize instances or buy reservations. Margins are slipping because cloud spend now moves at the speed of AI experiments, overnight shifts in customer usage, and automated systems that scale in seconds.

That’s why the next generation of cloud cost optimization strategies looks fundamentally different from what worked even two years ago.

And that gap between how cloud costs behave now and how many teams are managing them is getting expensive.

Here, we’ll explore the emerging cloud cost optimization strategies shaping 2026 and beyond.

What is cloud cost optimization in 2026?

First off: Cloud cost optimization is a FinOps practice that helps organizations control, attribute, and optimize cloud spending by linking infrastructure and AI costs directly to business outcomes. In the FinOps lifecycle, cloud cost optimization is the execution layer that follows cost visibility and enables continuous control and business-aligned decision-making.

In 2026, cloud cost optimization extends beyond rightsizing and discounts to include real-time cost control, AI unit economics, and continuous cost attribution across platforms.

How Cloud Cost Optimization Is Changing In 2026: Three Structural Shifts

Before we get into specific cloud cost management best practices and optimization strategies, it’s worth zooming out a little.

What’s changing in 2026 isn’t just how teams optimize cloud costs, but also what they’re optimizing against.

Consider these:

Shift 1: From cloud bills to cost systems

Modern cloud cost optimization requires treating cost as a system, not a static bill.

In 2026, cloud spend no longer lives under a single provider, a single billing format, or even a single category.

The emerging approach is to normalize cost and usage data across platforms into a consistent, structured model, one that makes your cost data interoperable, explainable, and ready for automation.

This shift is what enables multi-platform cost attribution, consistent unit economics across vendors, and faster, more reliable cost analysis at scale.

Without this foundation, even the most advanced optimization techniques will collapse under data friction.

Shift 2: From periodic optimization to continuous control loops

Modern cloud cost optimization requires continuous, real-time control loops that detect, attribute, and correct cost behavior while decisions are still reversible.

Cloud cost optimization used to be something teams did. But in 2026, it’s something systems run.

Monthly reports, quarterly reviews, and static budget thresholds can’t keep pace with environments where usage patterns change by the hour.

This is why real-time anomaly detection is evolving beyond “alerting” and into an early-warning system for overspending.

Shift 3: From infrastructure efficiency to business and AI unit economics

Modern cloud cost optimization is measured by cost per outcome, such as cost per customer, feature, or AI inference, rather than by percentage savings on infrastructure. Of the three shifts, this is the biggest.

As AI workloads, usage-based pricing, and automated scaling become the norm, the most meaningful questions are sounding more like:

- What does one more customer actually cost to serve?

- What is the marginal cost of this AI-powered feature?

- And, how is this deployment changing our unit economics at scale?

These three shifts are defining the new frontier of cloud cost optimization in 2026 and thereafter. Now, let’s go deeper.

Modern Cloud Cost Optimization Strategies For 2026

With that foundation in place, let’s look at the strategies modern SaaS teams are using to operate effectively in this new reality.

1. Use a FOCUS-first cost data layer

Today, fragmented data is a bigger limiter to effective cloud cost optimization than tooling.

The modern SaaS stack now generates cost data across infrastructure, managed cloud services, AI APIs, SaaS platforms, observability tools, and data pipelines. Each has its own billing format, attribution logic, and update cadence.

When those cost streams remain siloed, even the most sophisticated optimization strategies become inadequate.

Instead, you’ll want to use a standardized cost data layer.

Using a FOCUS-first cost data layer is now among the most recommended FinOps best practices for cloud cost optimization at scale.

To address this, the FinOps Foundation maintains FOCUS (the FinOps Open Cost and Usage Specification), a standardized schema designed to normalize cost and usage data across providers and platforms. FOCUS 1.3 is the newest version.

Instead of reconciling inconsistent exports every month, you can now normalize your cost data once, then build optimization logic on top of it.

This enables you to do consistent cost allocation across vendors, compare unit metrics (like cost per feature, customer, or inference), and run automation that doesn’t break when a provider changes billing formats.

Teams adopting a FOCUS-first mindset are redesigning how cost information flows through their organization:

- Normalize first, analyze second

- Treat cost data like telemetry, not accounting output

- Build optimization logic that survives architecture changes.
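Here is a minimal sketch of what "normalize first, analyze second" can look like in code. The two input layouts (`from_cloud_export` and `from_ai_api_export`) and the provider names are hypothetical, invented for illustration. The output fields mirror a small subset of FOCUS column names (ProviderName, ServiceName, BilledCost, BillingCurrency, ChargePeriodStart, Tags); the actual specification defines many more columns and rules than this sketch covers.

```python
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal


@dataclass(frozen=True)
class FocusRow:
    """A small subset of FOCUS-style columns, not the full specification."""
    provider_name: str              # FOCUS: ProviderName
    service_name: str               # FOCUS: ServiceName
    billed_cost: Decimal            # FOCUS: BilledCost
    billing_currency: str           # FOCUS: BillingCurrency
    charge_period_start: datetime   # FOCUS: ChargePeriodStart
    tags: dict                      # FOCUS: Tags


def from_cloud_export(row: dict) -> FocusRow:
    # Hypothetical cloud billing export layout (assumption for this sketch).
    return FocusRow(
        provider_name="ExampleCloud",
        service_name=row["product"],
        billed_cost=Decimal(row["unblended_cost"]),
        billing_currency=row["currency"],
        charge_period_start=datetime.fromisoformat(row["usage_start"]),
        tags=dict(row.get("tags", {})),
    )


def from_ai_api_export(row: dict) -> FocusRow:
    # Hypothetical AI API invoice layout that reports cost in cents.
    return FocusRow(
        provider_name="ExampleAI",
        service_name=row["model"],
        billed_cost=Decimal(row["amount_cents"]) / Decimal(100),
        billing_currency="USD",
        charge_period_start=datetime.fromisoformat(row["day"]),
        tags={"feature": row["feature"]},
    )


def cost_by_tag(rows: list[FocusRow], key: str) -> dict[str, Decimal]:
    """Allocation logic written once, against the normalized shape only."""
    totals: dict[str, Decimal] = {}
    for r in rows:
        bucket = r.tags.get(key, "untagged")
        totals[bucket] = totals.get(bucket, Decimal(0)) + r.billed_cost
    return totals
```

Because `cost_by_tag` only ever sees `FocusRow`, a change in one vendor's export format touches a single adapter function rather than every report built on top.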

Picture this:

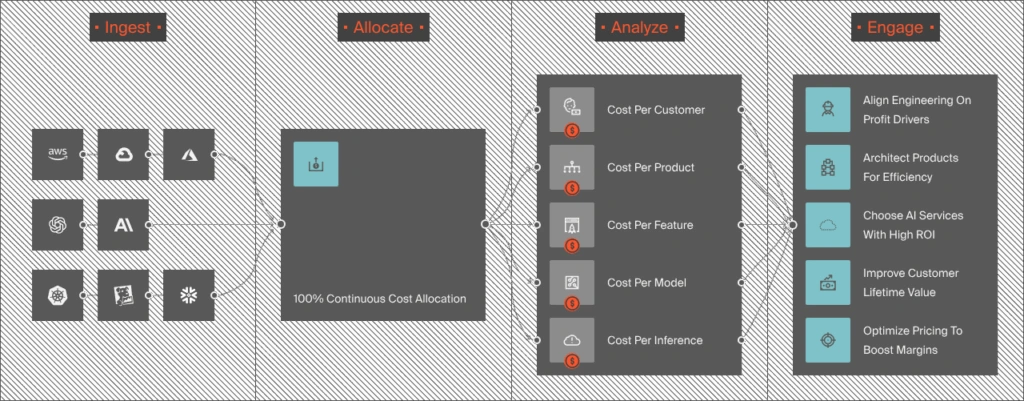

Image: The Ingest, Enrich, Normalize, Allocate, Analyze, and Engage cloud cost optimization workflow by CloudZero

With that standardized cost spine in place, you can finally move to the next frontier.

2. Replace periodic reviews with continuous cost control loops

Traditional optimization assumes that cost issues develop slowly and predictably. But costs in modern cloud environments, especially those running AI workloads, can spike dramatically in minutes.

Periodic reviews catch those spikes only after the money is spent. This lag is one of the biggest hidden drivers of overspending in the cloud today, a pattern that data from our State of AI Costs report also reflects.

What a continuous cost control loop looks like today

A continuous control loop treats cloud cost optimization as a real-time system, instead of a reporting task.

The continuous cloud cost control loop framework includes four stages:

- Observe cost behavior continuously across clouds, platforms, and services

- Attribute changes to specific workloads, features, environments, and other usage drivers

- Predict how those changes will evolve if left unchecked

- Intervene early (automatically or with precise guidance)

The goal here is to get the cost signals while the decisions that led to them are still reversible.

That requires cost to become another operational signal, like latency, error rates, and availability.
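To make the four stages concrete, here is a rough sketch of one pass through such a loop. Everything in it is an assumption for illustration: the function names, the z-score threshold, the naive straight-line projection, and the action strings. It does not describe how any particular product implements anomaly detection.

```python
from statistics import mean, stdev


def detect_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Observe: flag the latest hourly cost if it sits far above the recent baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat baseline: treat any increase as worth a look.
        return latest > history[-1]
    return (latest - mean(history)) / spread > threshold


def attribute(delta_by_workload: dict[str, float]) -> str:
    """Attribute: name the workload contributing the largest cost increase."""
    return max(delta_by_workload, key=delta_by_workload.get)


def project_spend(hourly_cost: float, hours_remaining: int) -> float:
    """Predict: naive projection that assumes the current rate persists."""
    return hourly_cost * hours_remaining


def intervene(workload: str, projected: float, budget_left: float) -> str:
    """Intervene: pick an action; a real system might page an owner or scale down."""
    if projected > budget_left:
        return f"escalate:{workload}"
    return f"notify:{workload}"
```

In practice, each stage would read from live cost telemetry and the intervention would be wired into your paging or automation tooling. The point of the sketch is the ordering: a detection only becomes useful once it carries an owner and a projected impact.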

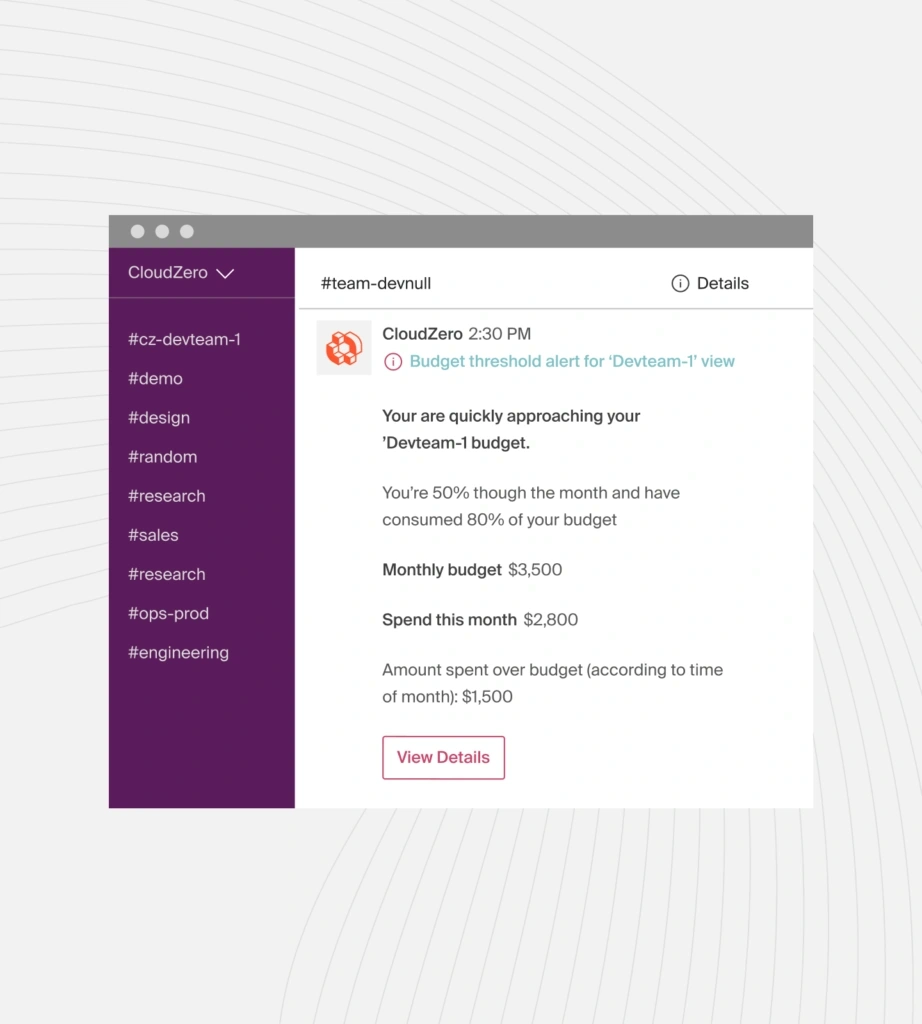

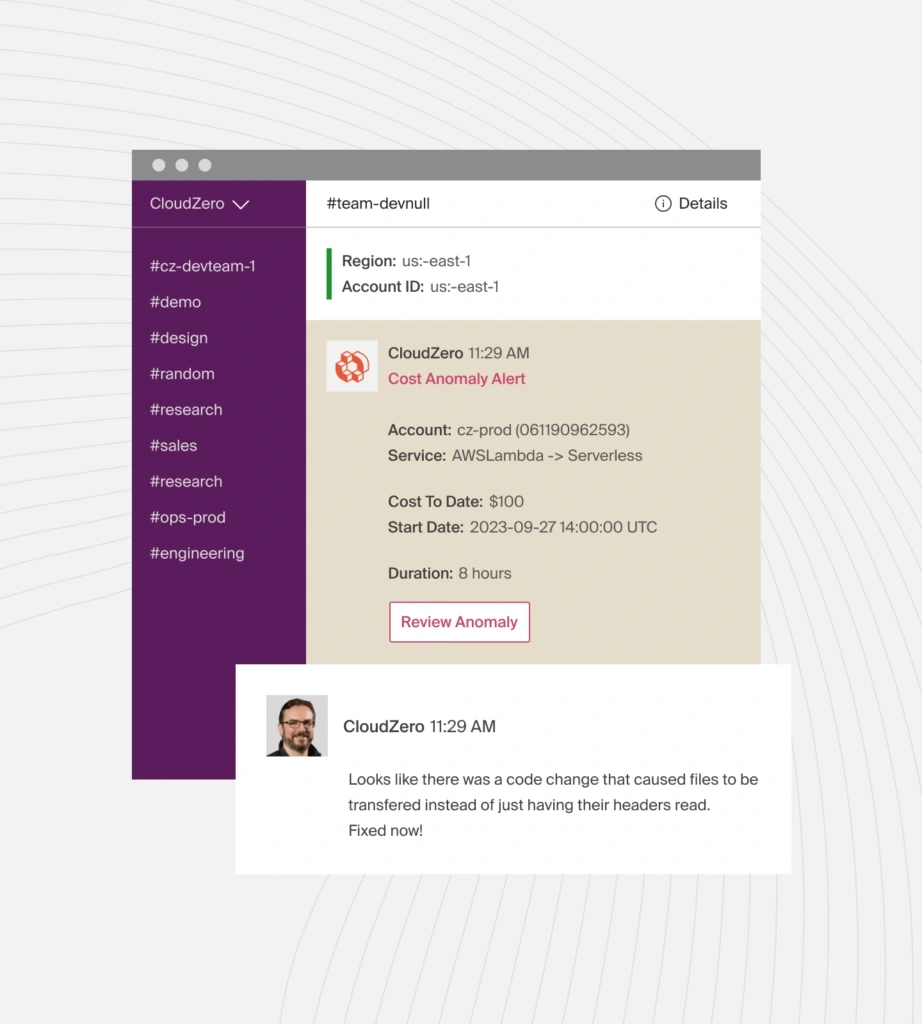

To achieve that, you’ll want a real-time anomaly detection system that surfaces issues earlier than traditional monitoring tools do.

Even AWS has expanded support for near-real-time cost and usage data, granular allocation signals, and proactive budget monitoring features. It is an acknowledgment that delayed cost insights no longer work at scale.

However, the trick is to ensure you are not just getting faster data, but also strong, continuous attribution and context attached to that data. Faster data without context produces noise instead of clarity on what to do next.

3. Measure AI unit economics

In our December 2025 Cloud Economics Pulse, we shared how AI/ML costs are scaling: quietly if you compare cost growth month over month, but dramatically when you look at the bigger picture.

However, too many teams still approach AI costs with questions like, “Which model is cheapest per token?” or “Can we reduce context length or batch requests here?”

Those questions still matter. But in 2026, the real opportunity is in knowing how your AI costs scale in relation to the value they create.

To capture that intel, you’ll need to answer some important questions first, like:

- Is this AI feature profitable at scale?

- Which customers, or segments, are driving disproportionate AI costs?

- And how is AI usage affecting our gross margin as adoption grows?

The most reliable way to answer them is to measure your AI spend using granular, yet business-aligned metrics such as:

- Cost per AI-powered feature

- Cost per user

- Cost per AI service, and

- Cost per successful outcome.
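As a simple illustration of those metrics, the sketch below rolls individual AI calls up into cost per feature, cost per customer, and cost per successful outcome. The `AiCall` record and its fields are hypothetical; in a real setup they would come from your own request logs joined with billing data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AiCall:
    # Hypothetical per-request record, assumed for this sketch.
    feature: str
    customer: str
    cost_usd: float
    succeeded: bool


def unit_costs(calls: list[AiCall]) -> dict:
    """Aggregate raw call costs into business-aligned unit metrics."""
    per_feature: dict[str, float] = defaultdict(float)
    per_customer: dict[str, float] = defaultdict(float)
    total = 0.0
    successes = 0
    for call in calls:
        per_feature[call.feature] += call.cost_usd
        per_customer[call.customer] += call.cost_usd
        total += call.cost_usd
        successes += call.succeeded  # True counts as 1
    return {
        "cost_per_feature": dict(per_feature),
        "cost_per_customer": dict(per_customer),
        # Failed calls still cost money, so total spend is divided by successes only.
        "cost_per_successful_outcome": total / successes if successes else None,
    }
```

Dividing total spend by successful outcomes, rather than by all calls, is a deliberate choice here: it makes retries and failed generations show up as a higher unit cost instead of hiding them.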

Once your AI costs are visible at this per-unit level, you can tell more precisely when your system is headed for overspending and when it’s supporting your growth as intended.

The good thing is you can already capture that level of AI unit economics today inside CloudZero.

For example, you can capture your cost per AI feature. That lets you decide whether the feature is still worth supporting, whether to offer it for free, or whether it’s time to charge for it so it can support its own growth.

4. Optimize the platform overhead most teams ignore

In many SaaS organizations, the fastest-growing cloud costs aren’t tied directly to customer-facing features. They’re tied to the platform layer.

Think of observability pipelines, data movement, internal tooling, security instrumentation, and “always-on” background services. These costs tend to scale by default, not by intent.

Traditional cloud cost optimization methods struggle with platform overhead. Rightsizing, for example, doesn’t apply cleanly to streaming data, and Savings Plans and Reservations don’t work well with usage- or AI-driven growth.

So, teams are now optimizing platform overhead by treating internal services like products with their own economics.

That means asking:

- Who consumes this data, and why?

- What value does this telemetry provide relative to its cost?

- Which workloads truly require high-frequency or high-retention data?

This reframing enables you to take concrete actions, such as:

- Tiered observability (not all logs deserve the same fidelity)

- Intentional data retention policies aligned to actual use cases

- Cost-aware defaults for internal tooling
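To see why retention tiers matter, here is a back-of-the-envelope sketch that estimates steady-state log storage cost for a given tier. The tier names, retention windows, and per-GB prices are made-up placeholders, not real vendor pricing, and the model ignores ingest, indexing, and query charges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogTier:
    # All values are illustrative assumptions, not vendor pricing.
    name: str
    retention_days: int
    usd_per_gb_month: float


def monthly_storage_cost(daily_gb: float, tier: LogTier) -> float:
    """Steady-state cost: stored volume equals daily ingest times the retention window."""
    stored_gb = daily_gb * tier.retention_days
    return stored_gb * tier.usd_per_gb_month


def plan_cost(streams: dict[str, tuple[float, LogTier]]) -> dict[str, float]:
    """Cost per log stream, given each stream's daily volume and assigned tier."""
    return {
        name: monthly_storage_cost(daily_gb, tier)
        for name, (daily_gb, tier) in streams.items()
    }
```

Running the same daily volume through a 30-day tier and a 3-day tier at the same price shows a tenfold difference, which is the kind of comparison that turns "not all logs deserve the same fidelity" into a decision someone can own.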

Instead of assuming “more visibility is always better,” you’ll want to design cost visibility that’s proportionate to the value you are getting and the business needs.

5. Optimize for marginal cost and cost elasticity (not just total spend)

Marginal cloud cost measures the cost of serving one additional unit of usage, such as a user, deployment, or AI inference.

In 2026, total cloud spend is one of the least useful optimization signals you can use. That’s because it answers the blunt question, “Did we spend more this month than last month?”

But analyzing your marginal cost answers the far more important one, “What does the next unit of growth cost us?”

Likewise, cost elasticity describes how your costs respond to changes in usage, scale, or behavior. Some costs scale smoothly while others scale poorly, like AI inference without caching or batching.

Today, you’ll want to identify which parts of your architecture are elastic and which are brittle.
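Both ideas reduce to simple ratios between two measurement periods. The sketch below is one way to compute them. Note that treating elasticity as "percentage change in cost divided by percentage change in usage" is an assumption borrowed from the standard economics definition, not a formula the article itself specifies.

```python
def marginal_cost(cost_before: float, cost_after: float,
                  units_before: float, units_after: float) -> float:
    """Cost of each additional unit served between two periods."""
    added_units = units_after - units_before
    if added_units == 0:
        raise ValueError("usage did not change; marginal cost is undefined")
    return (cost_after - cost_before) / added_units


def cost_elasticity(cost_before: float, cost_after: float,
                    units_before: float, units_after: float) -> float:
    """Percentage change in cost per percentage change in usage.

    Around 1.0 means cost tracks usage; above 1.0 means cost grows
    faster than usage; below 1.0 means economies of scale.
    """
    pct_cost = (cost_after - cost_before) / cost_before
    pct_units = (units_after - units_before) / units_before
    if pct_units == 0:
        raise ValueError("usage did not change; elasticity is undefined")
    return pct_cost / pct_units
```

For instance, if monthly cost rises from $1,000 to $1,500 while usage rises from 100 to 125 units, the marginal cost is $20 per unit and the elasticity is 2.0, meaning cost is growing twice as fast as usage.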

This is especially important for AI-driven features, where each additional request carries a real, recurring cost and total cost can grow faster than usage.

For example, a feature that looks efficient at low adoption may become a margin liability at scale.

Conducting a marginal cost analysis for it can help you surface that risk early, while there’s still time to redesign or reprice things (and before the inefficiency becomes a lasting part of your workflows).

6. Optimize cloud costs for resilience

For years, cloud cost optimization rewarded one behavior above all else: running as lean as possible.

That mindset made sense when availability was cheap, architectures were simpler, and failure modes were limited. In 2026, it’s becoming increasingly dangerous.

Cloud provider outages, as we saw in 2025, revealed that systems optimized only for efficiency will fail hardest when stress hits.

On paper, they look cost-efficient, but are fragile in practice.

That’s because when something breaks, failover paths are severely under-provisioned, and the financial impact far exceeds the “saved” infrastructure costs.

Today, the question to ask is no longer, “What’s the cheapest architecture that works?”

Cost-efficient resilience in an AI-driven world demands that you ask, “What level of resilience is worth paying for, and where?”

This shift will help you make more intentional tradeoffs, such as:

- Paying for redundancy where failure is existential

- Accepting risk where the impact is limited or recoverable

- Understanding the cost of downtime, not just uptime

The thing is, resilience is often under-optimized because its costs are scattered, from cross-region data movement to idle resources reserved “just in case.”

Without deep, actionable visibility, these costs feel like waste.

However, with an advanced view, you can make resilience more explicit with actionable insights like:

- Cost of redundancy per service

- Cost of failover readiness per region

- Incremental cost of higher availability targets
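One rough way to reason about the last item is to compare the expected annual cost of downtime at two availability targets against the added spend needed to reach the higher one. The sketch below assumes a flat revenue-per-hour impact and treats availability as a simple fraction of the year; both are simplifications, and the function names and figures are hypothetical.

```python
HOURS_PER_YEAR = 8760


def expected_downtime_cost(availability: float, impact_per_hour: float) -> float:
    """Expected annual cost of downtime at a given availability (e.g. 0.999)."""
    return (1 - availability) * HOURS_PER_YEAR * impact_per_hour


def net_value_of_upgrade(current: float, target: float,
                         impact_per_hour: float, added_annual_cost: float) -> float:
    """Downtime cost avoided by the higher target, minus what the upgrade costs.

    A positive result suggests the extra resilience pays for itself;
    a negative one suggests the risk may be cheaper to accept.
    """
    avoided = (expected_downtime_cost(current, impact_per_hour)
               - expected_downtime_cost(target, impact_per_hour))
    return avoided - added_annual_cost
```

Run per service or per region, a calculation like this gives you a first-pass answer to where redundancy is worth paying for and where accepting the risk is the cheaper option.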

When your resilience costs are this visible and attributable, you can then fine-tune them intelligently instead of cutting them blindly.

What Next: Get Ahead of Cloud Cost Optimization 3.0 With the Platform Built for Exactly This Shift

Cloud cost optimization has entered a new phase.

The early days focused on efficiency — like rightsizing resources, buying commitments, and cleaning up obvious waste.

The next phase added governance, with budget alerts, reports, and optimization recommendations layered on top.

But in 2026, neither approach is enough.

Now, it’s about controlling cost at the speed and scale of modern, always-on systems.

If you want to get ahead of the curve, you’ll want to use advanced cloud cost optimization strategies that help reduce cloud costs sustainably — supporting broader experimentation, faster shipping, and AI-driven innovation without letting spend outrun margins.

CloudZero Was Built For This Very Shift

CloudZero applies FinOps principles to modern cloud cost optimization by making unit economics, marginal cost, and real-time cost control measurable and actionable.

With CloudZero, you can:

- Normalize cost data across platforms to create a reliable cost spine (FOCUS-style, but purpose-built for action)

- Use real-time cost anomaly detection to surface overspending signals early, understand why they’re happening, and act before they hit your bill

- Track unit economics like cost per deployment and AI service across the SDLC stages

- Embed cost intelligence into your engineering culture and product decision-making

- Support AI adoption and experimentation with clear economic guardrails

Innovative teams at Grammarly (which runs 50+ LLMs), Moody’s, and Coinbase already use CloudZero to stay ahead of a rapidly changing cloud cost optimization landscape. And our approach has surfaced more than $20 billion in anomalous cloud spend before it hit these teams’ cloud bills.

You too can stop fighting cloud costs at all costs and instead tell exactly who and what is driving your costs, why those costs behave the way they do, and which levers to pull — without throttling engineering velocity or eroding your margins. Reserve your risk-free demo here today to see how Cloud Cost Optimization 3.0 works for your environment — on your terms.