Quick Answer

Blind tokenmaxxing is uncontrolled AI token consumption with no visibility into what that spend produces. It mirrors the cloud waste problem of the late 2010s — bills climb, but nobody can connect costs to outcomes. The fix isn't fewer tokens; it's cost attribution at the team, agent, feature, and customer level so every AI dollar ties to a measurable business result.

There’s a new performance metric spreading across Silicon Valley engineering teams: tokens consumed.

Meta built an internal leaderboard for it and employees competed for titles like “Cache Wizard” and “Model Connoisseur.” The top user burned through 281 billion (with a B!) tokens in a month. This spending could run into the hundreds of thousands of dollars. Teams were running multiple agents in parallel, crafting ever-longer prompts, and keeping AI systems running around the clock, all to climb the rankings.

They called it tokenmaxxing. And then, two days later, Meta quietly took the leaderboard down.

The problem wasn’t the money people spent on tokens. It was that nobody could say what they got in return.

Tokenmaxxing Is The New Cloud Waste

This is the same conversation we had about cloud spending several years ago. Teams were provisioning aggressively, bills were climbing fast, and finance was asking questions engineering couldn’t answer. The companies that won weren’t the ones that spent less. They were the ones that could see what they were spending and why, and could connect every dollar of infrastructure to a feature, a customer, or a business outcome.

AI token spend is moving through that same arc, but faster, and with even less visibility than cloud ever had.

At CloudZero, we’re watching it in our customer data in real time. Based on our research:

- Average monthly AI spend jumped 36% year-over-year, from $63K to $85.5K

- The share of companies spending over $100K/month on AI more than doubled, from 20% to 45%

- 40% of companies now spend $10M+ annually on AI infrastructure and inference

- Only 51% can accurately evaluate the ROI of that spend

That last number should shock anyone paying attention to budgets. Two in five companies have scaled their AI investment past $10M annually, yet nearly half of all companies still can’t tell their board whether that spend is working.

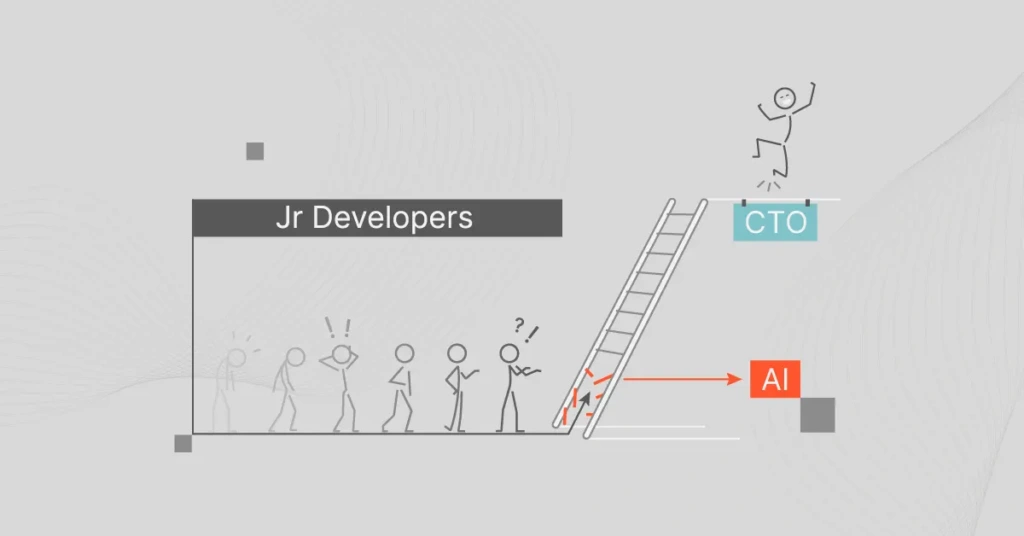

Salesforce just launched “Agentic Work Units,” a new metric designed to measure AI output and impact, not just token consumption. Their EVP said it plainly: “I could tokenmax by running endless loops with Claude Code that led to nothing… but if customers didn’t actually get that much work out of it, then what’s the point?”

HubSpot’s CEO put it even more simply on LinkedIn: “Outcome maxxing >> token maxxing.”

That framing is missing something. Namely, you can’t outcome-max without first knowing what your tokens actually cost: per team, per agent, per feature, per customer. The output side of the equation is meaningless if you can’t anchor it to a denominator.
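To make the point concrete, here is a minimal sketch of a unit-cost calculation. It is illustrative only: the `FeatureUsage` record, its field names, the choice of "outcome," and the sample figures are all hypothetical, and it assumes token spend has already been attributed to a feature.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeatureUsage:
    feature: str            # hypothetical feature name
    token_cost_usd: float   # AI spend already attributed to this feature
    outcomes: int           # e.g. tickets resolved; what counts is an assumption


def cost_per_outcome(usage: FeatureUsage) -> Optional[float]:
    """Return USD spent per outcome, or None if the feature produced none."""
    if usage.outcomes == 0:
        # Spend with nothing to show for it: report it instead of dividing by zero.
        return None
    return usage.token_cost_usd / usage.outcomes


# Made-up sample figures for two hypothetical features.
support_bot = FeatureUsage("support-bot", token_cost_usd=12_000.0, outcomes=4_000)
agent_loop = FeatureUsage("agent-loop", token_cost_usd=9_000.0, outcomes=0)

support_unit_cost = cost_per_outcome(support_bot)
agent_unit_cost = cost_per_outcome(agent_loop)
```

In this made-up data, both features burn a similar number of dollars, but only one has a unit cost you can reason about. The other is spend with no measurable result, which is exactly what a raw token count hides.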

This is a measurement problem that CloudZero was built to solve and is solving every day for customers.

Research Report

FinOps In The AI Era: A Critical Recalibration

What 475 executives told us about AI and cloud efficiency.

How Hudl And Duolingo Use CloudZero To Drive Outcome-Maximized AI Usage

Consider what this looks like in practice. One employee at Hudl was driving $600K per year in token spend across 40 different models (70% of the company’s total AI costs), and no one in finance or engineering knew. Not because anyone was being careless, but because when AI spend appears in shared compute pools with no dimensional attribution, it’s essentially invisible. It doesn’t show up as “AI.” It shows up as EC2, as S3, as a vague line item that no one owns.

This is the AI attribution problem. You don’t solve it by asking teams to tag better or build a custom dashboard. You solve it with a cost intelligence layer that joins billing data and telemetry, maps spend to your actual business dimensions (such as customer, product, feature, and agent), and calculates the economics of every AI decision before those decisions upend your P&L.
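As a rough illustration of what "joining billing data and telemetry" can mean, the sketch below splits each billed amount across a business dimension in proportion to tokens used. This is an assumed, deliberately simple allocation rule, not a description of how CloudZero works. The function name, record shapes, and sample numbers are hypothetical.

```python
from collections import defaultdict


def allocate_spend(billing, telemetry, dimension):
    """Split each model's billed cost across `dimension` values by token share.

    billing:   {model_name: billed_usd}
    telemetry: list of dicts with "model", "tokens", and the dimension key
    """
    tokens_by_model = defaultdict(int)
    tokens_by_model_dim = defaultdict(int)
    for row in telemetry:
        tokens_by_model[row["model"]] += row["tokens"]
        tokens_by_model_dim[(row["model"], row[dimension])] += row["tokens"]

    allocated = defaultdict(float)
    for (model, dim_value), tokens in tokens_by_model_dim.items():
        total = tokens_by_model[model]
        if total == 0:
            continue  # no usable usage signal; handled as unattributed below
        allocated[dim_value] += billing.get(model, 0.0) * tokens / total

    # Billed spend with no matching telemetry stays visible instead of vanishing.
    for model, cost in billing.items():
        if tokens_by_model.get(model, 0) == 0:
            allocated["unattributed"] += cost
    return dict(allocated)


# Made-up sample data: three billed models, telemetry for only two of them.
billing = {"model-a": 1000.0, "model-b": 500.0, "model-c": 200.0}
telemetry = [
    {"model": "model-a", "feature": "search", "tokens": 3_000_000},
    {"model": "model-a", "feature": "chat", "tokens": 1_000_000},
    {"model": "model-b", "feature": "chat", "tokens": 2_000_000},
]
by_feature = allocate_spend(billing, telemetry, "feature")
```

The explicit `"unattributed"` bucket is the design choice worth noting. Spend that can’t be matched to telemetry is reported as its own line rather than silently spread across teams, so the size of the visibility gap is itself measurable.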

When Duolingo’s OpenAI costs spiked 10x overnight, the company would otherwise have had no way to see which feature caused it. Using CloudZero, it caught the spike at the feature level and resolved it in hours, and the analysis revealed a caching gap that led to a 40% reduction in text-to-speech costs. Engineers began treating cost efficiency the same way they treat latency, as a first-class signal, not a quarterly reckoning.
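A feature-level check of this kind can be sketched in a few lines. The example below is a hypothetical illustration, not Duolingo’s or CloudZero’s implementation. It assumes per-feature daily costs are already available, and the function name, threshold, and figures are invented for the example.

```python
def find_spikes(baseline, current, threshold=3.0):
    """Return (feature, ratio) pairs where current cost >= threshold x baseline.

    baseline, current: {feature_name: daily_cost_usd}
    """
    spikes = []
    for feature, cost in current.items():
        base = baseline.get(feature, 0.0)
        if base == 0:
            # A feature with new spend and no history is flagged as well.
            if cost > 0:
                spikes.append((feature, float("inf")))
            continue
        ratio = cost / base
        if ratio >= threshold:
            spikes.append((feature, ratio))
    # Largest jump first, so the likeliest culprit is at the top.
    return sorted(spikes, key=lambda item: item[1], reverse=True)


# Made-up daily costs: one feature jumps 10x, the other barely moves.
baseline = {"text-to-speech": 100.0, "chat": 200.0}
current = {"text-to-speech": 1000.0, "chat": 210.0}
spikes = find_spikes(baseline, current)
```

With these sample numbers, only the feature that jumped 10x is flagged. The same check run against a single account-wide total would show that spend rose, but not where.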

That’s what good looks like. It doesn’t mean using fewer tokens or slowing AI investment. It means having the intelligence to make faster, more confident decisions.

Blind tokenmaxxing is just the new cloud waste. The behavior isn’t irrational, because aggressive AI investment is often the right call. But investment without attribution is a recipe for trouble. It’s a bet, really.

The companies winning in the AI era aren’t the ones spending the most. They’re the ones who can see the economics clearly enough to invest with confidence.

If your AI bill is growing faster than your ability to explain it, that gap is the problem worth solving first.
