In March 2026, CloudZero’s Ben Austin, Director of Product Marketing, sat down with Ray Rike, Founder and CEO of Benchmarkit, to walk through findings from *FinOps in the AI Era: A Critical Recalibration*, a joint survey of nearly 500 organizational leaders on how they’re managing or, rather, struggling to manage AI costs.

The March 7 webinar, part of a recurring research partnership between the two companies, covered the state of FinOps for AI, where the visibility and forecasting gaps are worst, and what separates organizations that are adapting well from those losing ground. The data was eye-opening. The action items were concrete.

Here are the top takeaways.

1. The maturity-efficiency paradox

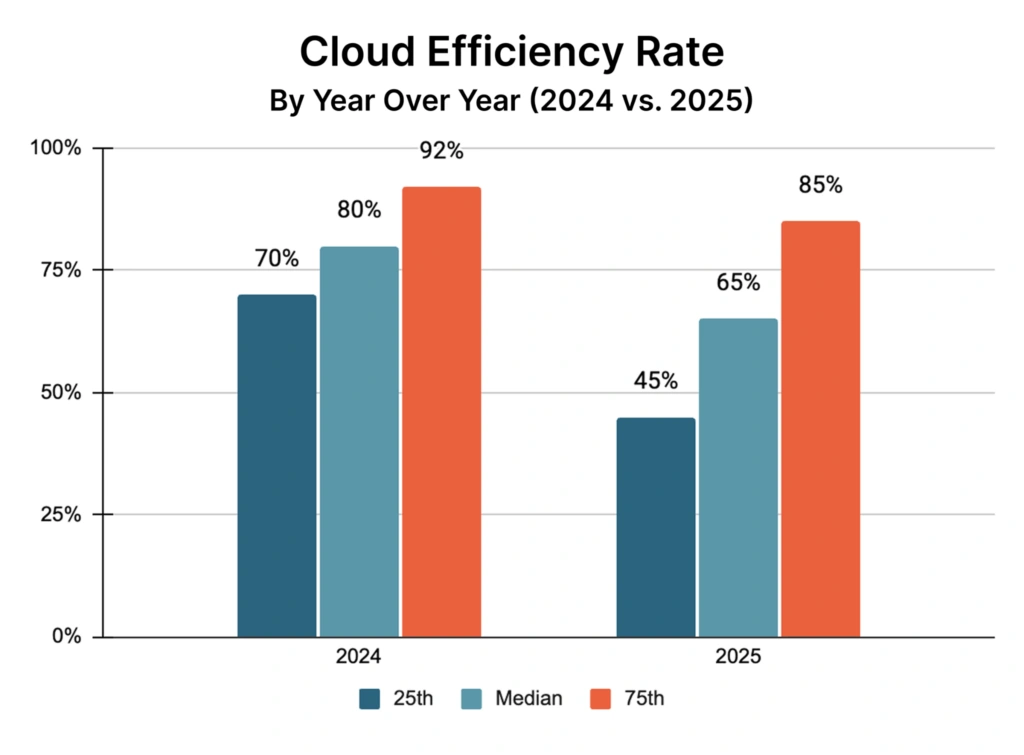

Ben and Ray immediately dug into the top takeaway from the AI Era report. First, the good news: 72% of organizations now have some type of formal cloud cost program — up from 39% the year prior. Teams are taking this seriously.

The bad news: the Cloud Efficiency Rate (CloudZero’s metric defined as revenue minus cloud costs, divided by revenue) dropped from 80% to 65% year-over-year. In plain terms, for every dollar companies are bringing in, 35 cents is going straight back to cloud and AI providers.
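
As a quick worked check of that definition, the metric can be computed as below. This is a minimal sketch: the function name and the sample revenue and cost figures are illustrative assumptions, not values from the report.

```python
def cloud_efficiency_rate(revenue: float, cloud_costs: float) -> float:
    """Return (revenue - cloud costs) / revenue, per the definition in the text."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cloud_costs) / revenue


# Hypothetical company: $1,000,000 in revenue, $350,000 in cloud and AI costs.
cer = cloud_efficiency_rate(1_000_000, 350_000)
print(f"Cloud Efficiency Rate: {cer:.0%}")
print(f"Cents of each revenue dollar going to providers: {(1 - cer) * 100:.0f}")
```

With these made-up inputs the rate comes out at 65%, which is the same as saying 35 cents of every revenue dollar goes to cloud and AI providers.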

Ray put the stakes plainly: this isn’t just about spend volume. It’s about what that spend is doing to margins.

“Your gross profit as a company is really being impacted here,” Ray emphasized, adding that this is before factoring in data-as-a-service, database costs, and other infrastructure components that don’t always show up in the efficiency calculation.

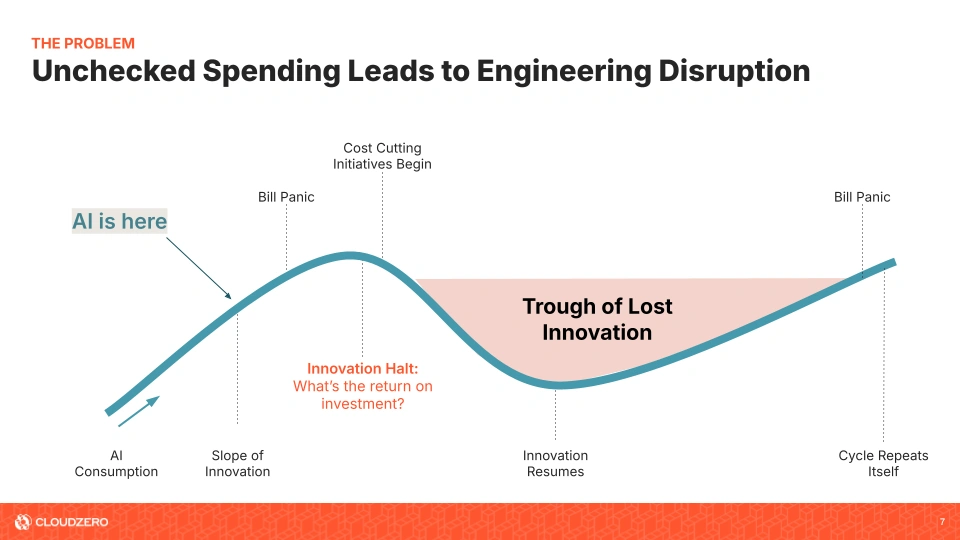

2. The bill panic cycle is real — and it stalls innovation

One of the sharpest frameworks in the session was what Ben called the bill panic cycle. It goes like this: unchecked AI spend accumulates, a surprise bill arrives, cost-cutting initiatives kick in, and engineering teams get pulled off product work to deal with it. Innovation halts. Then, once costs are back under control, the cycle starts again.

“As these new technologies roll out and go through organizations, there’s a lot of hype, there’s a lot of excitement, and potential,” Ben said. “And so organizations go all in — but then at some point that lack of visibility and lack of allocation becomes a significant problem when the finance team gets a bill that’s over, by a lot, what they were expecting.”

The takeaway here is that the goal isn’t to eliminate AI investment. Rather, it’s to shrink this cycle of lost innovation. Ideally, you want to avoid the bill panic moment altogether by building visibility into your operations as early as possible.

3. Stop treating AI spend as cloud spend

One of the more clarifying moments in the session was Ben’s side-by-side breakdown of cloud versus AI cost environments.

As Ben laid it out, traditional cloud spend is usage-based and infrastructure-driven. Unit prices are known and stable. Billing reports and APIs are mature. It’s predictable at scale.

AI spend is a different animal entirely. It’s usage-based too, but human-driven, which makes it far more variable. Demand spikes based on how people write prompts, which experiments are running, and who in the organization has access to which tools. Visibility into cost and usage is inconsistent across vendors. APIs, where they exist at all, aren’t standardized.

“It’s kind of the Wild West,” Ben said. “People are paying for this stuff on credit cards. It’s entirely usage-based and human-driven, extremely highly variable. And there’s very inconsistent visibility into cost and usage, and limited or no APIs.”

Ben added that this isn’t necessarily a permanent indictment of AI vendors. Rather, it signals a maturity gap. Cloud took years to develop and standardize the billing that today’s infrastructure teams now rely on. AI is earlier in that journey. The call to action is to not wait for vendors to catch up before building your own visibility layer.

4. Forecasting is broken — and finance isn’t happy

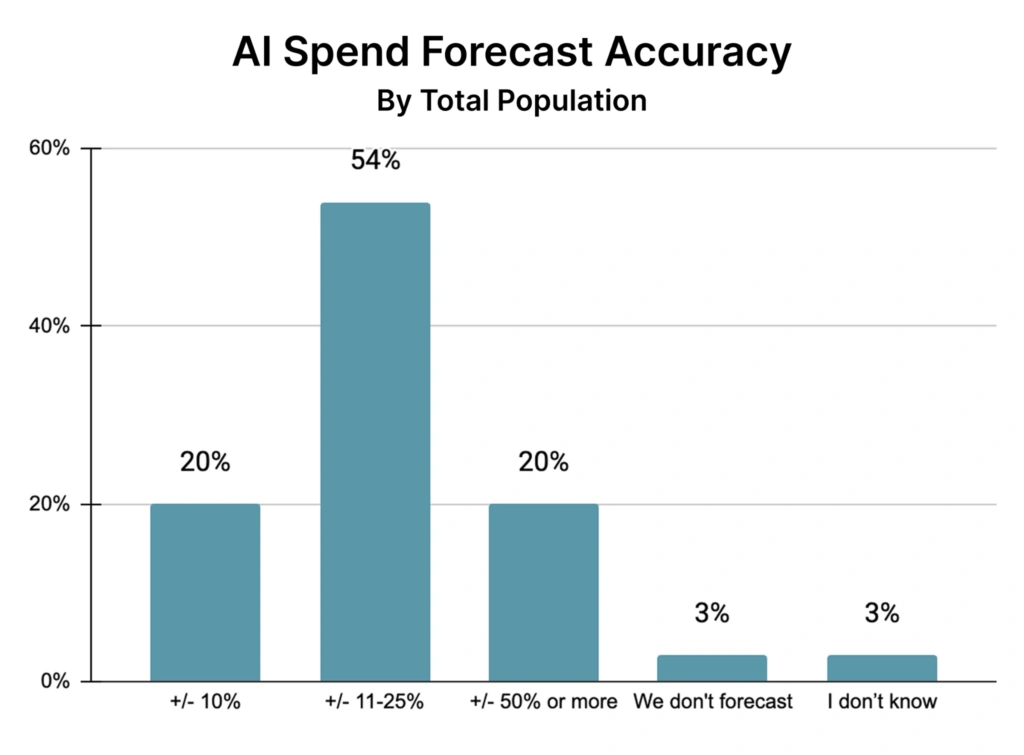

Perhaps the starkest data point in the entire session: 54% of organizations missed their AI spend forecast by 11 to 25%. Another 20% missed by 25 to 50%.

Only 20% of the 475 organizations surveyed came within 10% of their forecast.
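
To see where your own organization would land in those bands, the miss can be computed as a percentage of the forecast. The sketch below is one way to do it, under assumptions: the function names, the exact band edges, and the "over 50%" label are hypothetical choices, since the report's precise cutoffs are not given here.

```python
def forecast_miss_pct(forecast: float, actual: float) -> float:
    """Absolute miss as a percentage of the forecast."""
    if forecast <= 0:
        raise ValueError("forecast must be positive")
    return abs(actual - forecast) / forecast * 100


def miss_band(miss_pct: float) -> str:
    """Map a miss percentage to a band (band edges are assumed, not from the report)."""
    if miss_pct <= 10:
        return "within 10%"
    if miss_pct <= 25:
        return "11 to 25%"
    if miss_pct <= 50:
        return "25 to 50%"
    return "over 50%"


# Hypothetical quarter: $400,000 forecast, $480,000 actual AI spend.
miss = forecast_miss_pct(400_000, 480_000)
band = miss_band(miss)
print(f"Missed by {miss:.0f}%, band: {band}")
```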

Ray did not soften what those numbers mean.

“Imagine if your revenue organization, your sales organization, were missing their revenue forecast by 11 to 25%,” Ray said. “And how you respond.”

The implications for cross-functional credibility are real. As Ray put it, if you’re having those conversations with your head of FP&A or finance — connecting AI spend to profitability impact — they’re going to view you differently as a colleague and a peer.

In other words, if you miss a forecast by 20% with no explanation ready, that relationship can go off the rails.

5. Granularity is where the gap is widest

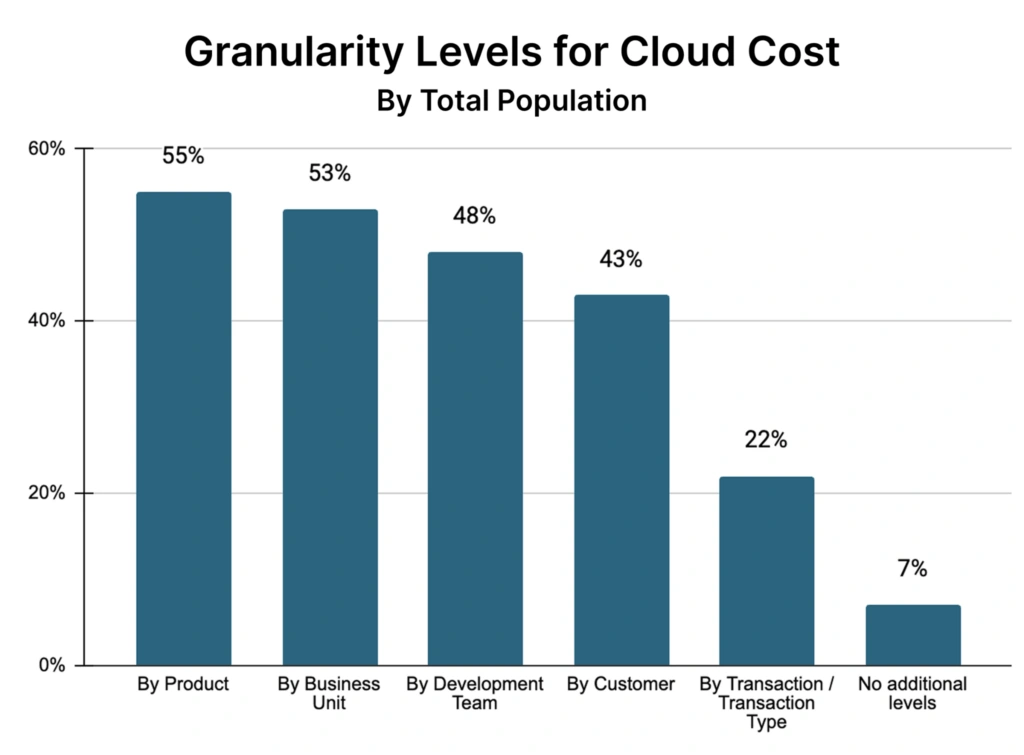

Four out of five organizations (80%) that deliver digital products factor cloud costs into their pricing. That makes cost tracking a customer satisfaction issue, not just an internal one. But the tracking doesn’t match the exposure.

Only 43% of organizations track cloud and AI costs at the customer level. Only 22% — primarily in financial services — track at the transaction level.

Ray shared an example that illustrated what’s at stake: a CFO who knew token costs were dropping but still saw rising inference costs. The reason? No guardrails, and no visibility by product or feature. One customer was consuming roughly 10 times what any other customer was — and no one had caught it.

“If you only get 22% tracked by transaction, you’re gonna be exposed,” Ray said.
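
Once costs are allocated per customer, a case like the one in Ray's example is straightforward to surface. The following is a minimal sketch, assuming you already have a per-customer cost mapping; the function name, the customer names, the dollar figures, and the 5x-median threshold are all hypothetical.

```python
from statistics import median


def flag_heavy_consumers(cost_by_customer: dict[str, float],
                         multiple: float = 5.0) -> list[str]:
    """Return customers whose cost is at least `multiple` times the median customer cost."""
    baseline = median(cost_by_customer.values())
    if baseline <= 0:
        return []
    return sorted(
        customer
        for customer, cost in cost_by_customer.items()
        if cost >= multiple * baseline
    )


# Hypothetical monthly inference cost per customer, in dollars.
costs = {"acme": 1_200, "globex": 1_000, "initech": 900, "umbrella": 11_000}
heavy = flag_heavy_consumers(costs)
print(heavy)
```

The median is used as the baseline rather than the mean so that the outlier itself does not inflate the threshold it is being compared against.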

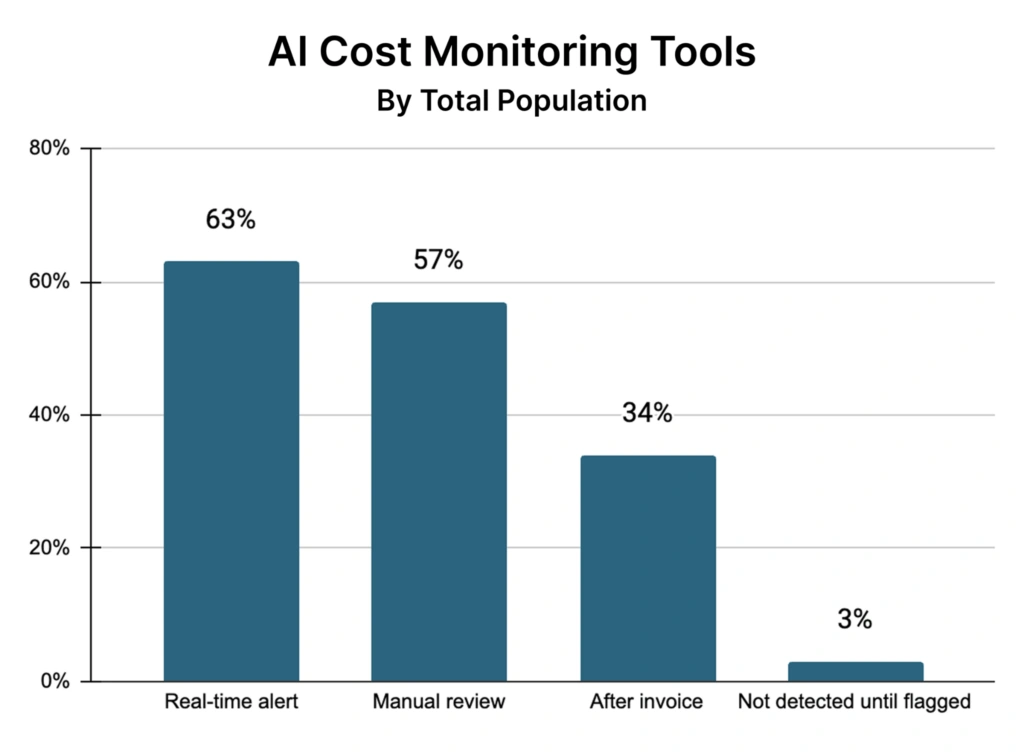

The maturity gap shows up in tooling too: 63% of organizations have built internal dashboards, and 57% rely on their LLM provider’s own billing tools. The problem is that the average enterprise now uses 3.1 LLMs, and tracking spend across multiple vendor portals with no unified view creates exactly the kind of gap the survey exposed.

6. How to build a defensible AI operating plan

Ben then walked the audience through a set of practical steps for finance and FinOps teams trying to bring more rigor to AI cost planning. These are tangible actions that separate teams with defensible forecasts from those getting blindsided.

Anchor assumptions in cost drivers

AI budgets fail when they’re built on best guesses instead of variables. The fix is to translate engineering decisions into business impact — specifically into unit costs tied to real cost drivers for your organization. Cost per customer is a simple starting point. More granular unit costs get you further.
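
As a rough illustration of the simplest unit cost mentioned above, cost per customer can be derived from historical spend and then multiplied by a growth assumption. The function names and all figures in this sketch are assumptions made for the example.

```python
def cost_per_customer(total_ai_spend: float, active_customers: int) -> float:
    """Unit cost: AI spend divided by the number of active customers."""
    if active_customers <= 0:
        raise ValueError("active_customers must be positive")
    return total_ai_spend / active_customers


def forecast_spend(unit_cost: float, expected_customers: int) -> float:
    """Forecast driven by a cost driver (customer count) instead of a flat guess."""
    return unit_cost * expected_customers


# Hypothetical: $120,000 of AI spend last quarter across 400 customers.
unit_cost = cost_per_customer(120_000, 400)
# Hypothetical growth assumption: 500 customers next quarter.
next_quarter = forecast_spend(unit_cost, 500)
print(f"Cost per customer: ${unit_cost:,.0f}; forecast: ${next_quarter:,.0f}")
```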

Pre-build your variance narratives

If you’re forecasting based on cost per customer and you know your customer growth assumptions, you can explain before the fact why costs might come in higher or lower. Slower growth means lower spend. Faster growth means higher costs — but that’s a good story.

“Nothing kills credibility like having a big surprise come up midway through the year or midway through a quarter and not having an answer for it,” Ben said.
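
One way to prepare such a narrative is a conventional volume-versus-rate variance split: how much of the miss came from customer count differing from plan, and how much from cost per customer differing from plan. This is a generic finance technique offered as a sketch, not a method attributed to Ben or the report; the names and numbers are hypothetical.

```python
def variance_split(planned_customers: int, planned_unit_cost: float,
                   actual_customers: int, actual_unit_cost: float) -> dict[str, float]:
    """Split (actual - planned) spend into a volume effect and a rate effect."""
    # Volume effect: extra (or fewer) customers, priced at the planned unit cost.
    volume = (actual_customers - planned_customers) * planned_unit_cost
    # Rate effect: change in unit cost, applied to the actual customer count.
    rate = (actual_unit_cost - planned_unit_cost) * actual_customers
    return {"volume": volume, "rate": rate, "total": volume + rate}


# Hypothetical plan: 500 customers at $300 each. Actual: 560 customers at $310 each.
split = variance_split(500, 300, 560, 310)
print(split)
```

In this made-up case most of the overage comes from having more customers than planned, which is the "good story" version of a miss; the remainder is a unit-cost increase that warrants an engineering conversation.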

Separate growth spend from experimentation

Label AI spend by type — growth initiatives versus experimentation — and tie ownership to each bucket. This gives you levers to pull when costs start climbing. You can dial back experimentation without touching growth investment.

“If costs are starting to get out of control,” Ben explained, “you can say, ‘Okay, we need to pare back some of that experimentation spend, but keep our foot on the gas as far as the growth spend.’”
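
A minimal sketch of that labeling follows, assuming each AI workload carries a category and an owner. The `SpendItem` fields, the workload names, the team names, and the budget figure are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SpendItem:
    name: str
    category: str        # "growth" or "experimentation"
    owner: str           # team accountable for this bucket
    monthly_cost: float


def totals_by_category(items: list[SpendItem]) -> dict[str, float]:
    """Sum monthly cost per spend category."""
    totals: dict[str, float] = defaultdict(float)
    for item in items:
        totals[item.category] += item.monthly_cost
    return dict(totals)


def experimentation_cut(totals: dict[str, float], budget: float) -> float:
    """Overage that can be absorbed by experimentation alone, leaving growth untouched."""
    overage = max(0.0, sum(totals.values()) - budget)
    return min(overage, totals.get("experimentation", 0.0))


items = [
    SpendItem("support-assistant", "growth", "product", 40_000),
    SpendItem("search-ranking", "growth", "product", 25_000),
    SpendItem("prompt-lab", "experimentation", "research", 15_000),
    SpendItem("agent-prototype", "experimentation", "research", 10_000),
]
totals = totals_by_category(items)
cut = experimentation_cut(totals, budget=80_000)
print(totals, cut)
```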

Review leading indicators monthly

Quarterly reviews are too slow. The FinOps Foundation’s own guidance points to one- to two-month forecasting horizons, and monitoring at least monthly. AI spend can move fast enough that a quarterly cadence leaves surprises baked in by the time anyone catches them.

Ray added one more dimension worth noting: 64% of organizations are now charging AI costs back to the business unit or department that incurred them. As Ray put it, if you’re responsible for AI infrastructure and you walk into a multi-billion-dollar business unit and tell them their costs came in $12 million over budget, that’s a conversation nobody wants to have without a framework behind it.

7. What separates top performers

The session closed with five attributes Ray and Ben identified among the most mature organizations in the survey:

- Granular visibility that distinguishes AI from cloud — top performers track token volume by product and use case, not just in aggregate.

- Customer- and transaction-level allocation — where only 22% of organizations are today, but where the leaders already operate.

- Engineering efficiency over commercial levers — the biggest cost wins come from code optimization, not vendor negotiation.

- Cost-to-price alignment — 84% price AI into their products, but only 43% track costs by customer. Pricing without attribution is a guess.

- Real-time guardrails — 63% use real-time overage detection. The leaders have moved well beyond monthly reviews.
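
For the guardrails item, one simple form of overage detection is a run-rate check: project month-end spend from month-to-date spend and alert when the projection exceeds budget by more than a tolerance. This sketch assumes a linear run rate, which is a simplification and not how the survey defines real-time overage detection; the function names, the 10% tolerance, and the figures are hypothetical.

```python
def projected_month_end_spend(month_to_date: float, day_of_month: int,
                              days_in_month: int) -> float:
    """Linear run-rate projection of month-end spend."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be between 1 and days_in_month")
    return month_to_date / day_of_month * days_in_month


def is_over_budget(projected: float, monthly_budget: float,
                   tolerance: float = 0.10) -> bool:
    """True when the projection exceeds budget by more than the tolerance."""
    return projected > monthly_budget * (1 + tolerance)


# Hypothetical: $42,000 spent by day 10 of a 30-day month, against a $90,000 budget.
projected = projected_month_end_spend(42_000, day_of_month=10, days_in_month=30)
alert = is_over_budget(projected, monthly_budget=90_000)
print(f"Projected: ${projected:,.0f}; alert: {alert}")
```

Running a check like this daily, per product or per customer, catches a runaway workload weeks before a monthly review would.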

From the Q&A

How does CloudZero fit into all of this?

Ben’s answer mapped directly back to the challenges the audience identified in the opening poll: lack of visibility, unclear allocation, inaccurate forecasting.

“Accurate allocation — that’s our bread and butter,” Ben said. “That’s what we do better than anybody else: help accurately allocate the spend, whether it’s cloud costs, AI costs, back to the parts of your business that you care the most about.”

What about agentic AI and resource sprawl?

Ray flagged this as one of the genuinely hard problems emerging right now. Agentic workflows are multivariate resource consumers: they can tap LLMs, system-of-record databases, data models, and more simultaneously.

Tracking what they consume, and attributing that cost to a business outcome, is still an open problem for most teams. CloudZero can surface the cost signal; governing the agent behavior itself is a separate challenge.

How do you know if you need a solution like CloudZero?

Ray offered a simple gut-check: “If you’ve ever heard someone in finance, whether that’s during the budget process or an executive team meeting, say, ‘Our AI or cloud costs are out of control — do we have any clue how much we’re going to be spending next quarter or next year?’ — if anything like that comes out, it’s probably a good opportunity to at least have that conversation.”

The bottom line

The survey data tells a clear story: AI investment is accelerating while the infrastructure for managing it — visibility, allocation, forecasting, governance — is lagging badly.

Most organizations are not within 10% of their AI spend forecasts.

Most don’t track costs at the customer or transaction level.

Most are relying on vendor-provided billing tools that weren’t built for a multi-model, multi-vendor environment.

The recalibration isn’t about pulling back on AI. It’s about building the financial and operational layer underneath it — so that when the next bill arrives, you have an explanation ready, and a plan already in motion.

Want to dig into the full findings from the report? Dive in here.

Ready to get visibility into your AI and cloud spend? Schedule a demo.