Organizations receive high monthly bills from cloud providers with no way of determining why the number changes from month to month or how to accurately predict future spend.

More importantly, there is little information to provide visibility if cloud resources are being deployed efficiently or if spend is correlated to business performance.

With AWS, Azure and GCP growing 40% year-over-year growth in 2021, and companies like Snowflake, MongoDB, and Datadog growing even faster (Snowflake’s YOY revenue growth hit 110% in Q4 2021), one thing is clear in today’s economic environment: unmanaged cloud spend will only become a more serious issue.

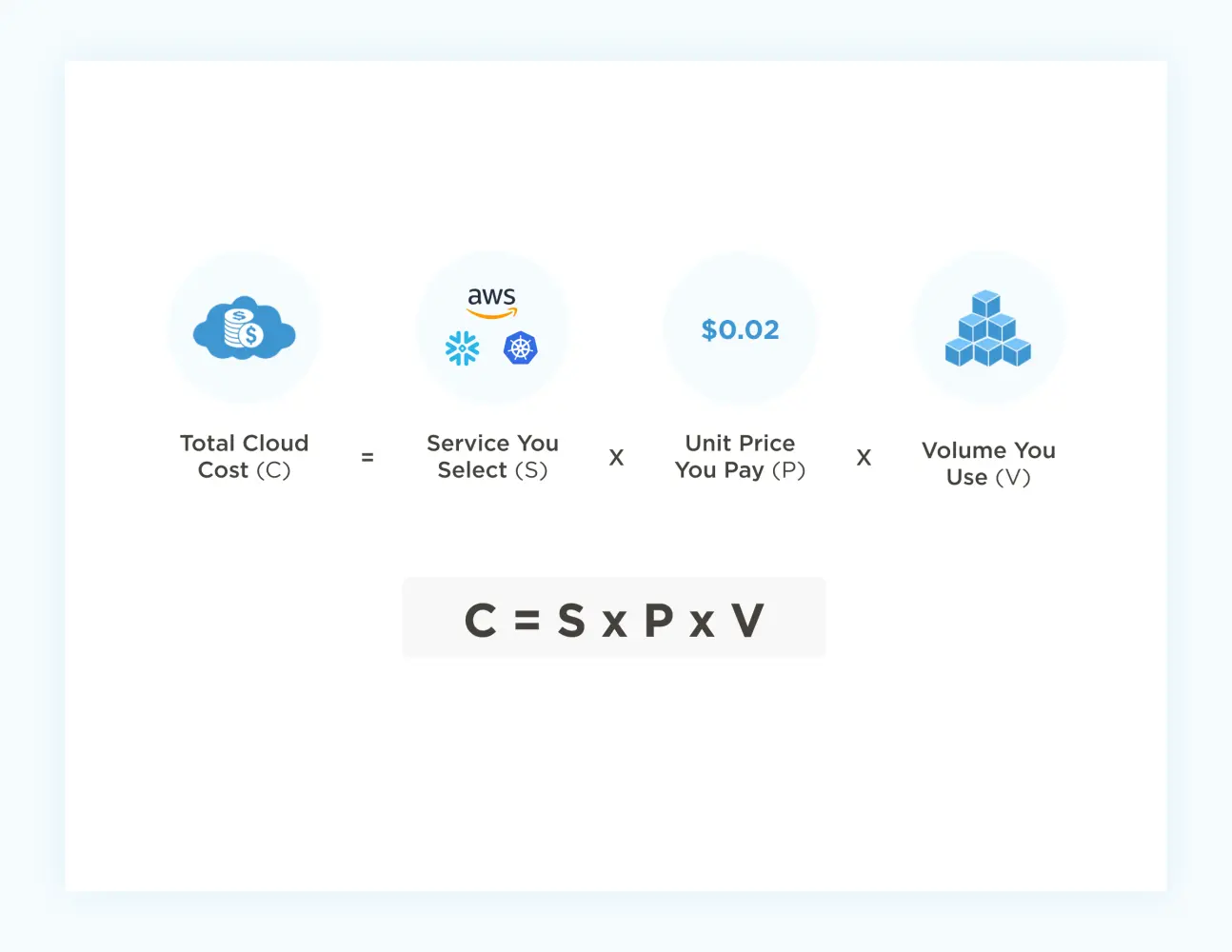

What many organizations don’t realize is that there’s a surprisingly simple formula for managing cloud costs. It looks like this:

In effect, organizations looking to control their cloud spend have three knobs they can turn: Service, Unit Price, and Volume. But they can’t turn all of them whenever they want — some are fixed early on in the software development lifecycle, others are more adjustable after the fact.

In this article, I break down what each of these variables mean, sorting them in order from easiest to hardest to optimize.

Unit Price

What it is: The fee you negotiate with your service provider.

Who influences: Procurement. Finance. Cloud Operations.

How it works: Within each service, organizations are eligible for certain discounting programs. Common examples:

- Enterprise Discounts – Organizations pay less for cloud activity by committing to a certain level per year.For example, AWS’s Enterprise Discount Program offers discounts for companies willing to commit upfront to a certain amount of cloud spend. Other cloud vendors offer similar programs.

- Reservations – Organizations reserve capacity in advance and thus receive a lower overall price.There are many different variations of these discounts, including AWS Standard Reservations, AWS Convertible Reservations, AWS Savings Plans, GCP Committed Use Discounts, etc.

- Spot Instances – Spot Instances (or preemptable on GCP) lets you shift workloads to a different time of day to receive lower prices (like peak vs. off-peak train tickets).

How to optimize: Price is the easiest knob to turn after the fact. Price is based on existing and future architecture and organizational needs. Negotiating an Enterprise Discount, requires good forecasting of future cloud spend, and the better you are at that the greater the discounts you can lock in.

Reservations requires one to really understand their capacity requirements and the risk/reward balancing act of making a commitment. Spot requires an understanding of the architecture of the application and its ability to shift workloads to different times of the day.

Difficulty level: Low to Medium.

Negotiating a good price depends on a general sense of your likely cloud usage volume in a given year. That estimate is easy enough to make based on last year’s data and a good understanding of the directional heading of your cloud spend.

But as we’ll see, dynamically optimizing your usage volume can have rippling benefits on price. Also, many companies want to do some level of optimization first before locking in any commitments.

Volume

What it is: How much you’re using the cloud — and how that compares to how much you thought you’d be using it.

Who influences: Engineering. Cloud Operations.

How it works: At the simplest level, engineers are writing code that dictates how much of a given service is going to be consumed at what level – compute, storage, network, database, etc.

For example, I recently saw a component of our application that had 8 different types of API calls that were made multiple times in a given execution of that unit of code.

This particular component is also executed on a schedule, at least 3 times a day. So if we assume 2 API calls for each type, then that results in 16 calls, 3 times a day or 48 calls.

Each call has a price. If that process was only run 2 times a day that is reduced to 32, thus reducing the overall cost.

This also includes eliminating obvious waste (e.g. unattached EBS volumes, databases with no connections, old or redundant snapshots, etc.)When businesses put a premium on light-speed innovation, volume tends to mount unchecked.

How to optimize: Get visibility – cost by service, cost by feature, underutilized resources, etc. Visibility shows you what you’re spending, and empowers you to decide where you might want to explore making changes to reduce cost.

The ability to understand your cloud spend data through many dimensions is a key differentiator for CloudZero.

Difficulty level: Medium.

Making progress here first requires visibility.Many organizations will take a centralized approach to getting started, often eliminating the obvious waste.To truly have an impact here, companies must align their costs with the Engineers who can control those costs.

While many times the solutions to drive material savings are easy to implement, it requires Engineering leadership to prioritize allocating some time to stay on top of this (much like technical debt is managed).

Service

What it is: Which cloud provider(s) and underlying cloud services you’re using, and how you’re using them.

Who influences: Software Architects, Engineering, Infrastructure Teams

How it works: Everyone has heard of the Big 3 cloud providers: Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure.

We are also seeing companies spend billions of dollars on cloud providers like Snowflake, MongoDB, Databricks, Cloudflare, Auth0 (which was recently acquired by Okta), Twilio (who has also acquired other providers like Segment and SendGrid), etc.

Service areas include but are not limited to:

- Compute

- Storage

- Network

- Database

- Messaging

- Content Delivery

- Authentication

- Security

… and more. Within each area, there are dozens of services one could use.

For example, Amazon lists 14 types of basic compute service. Compute services come in many flavors — virtual machines, containers, serverless, etc.

Beyond the architecture decisions of which type of compute service to use, one must also consider the impact of decisions like operating system (if you are like me you are likely still recovering from Microsoft’s 2019 licensing changes for Windows in the public cloud), instance type (over 50 different options there just for EC2), instance generations (yes one still needs to worry about being on the latest version), etc.

All of these decisions must be made upfront, and all have reverberating impacts on cloud spend, especially as organizations scale.

And that’s just compute. Engineers face similar decision trees for storage, network, security, content delivery, security and whatever else their organization needs.

How to optimize: Pick the right cloud service upfront and monitor the cost impact of your choices early in the development lifecycle so you can “fail fast”.

Understand what your organization needs, the scale at which it needs it, and what each different service empowers you to do. Service is one of the more difficult variables to adjust after the fact.

Difficulty level: High.

Making informed service choices requires a good deal of time, data, effort, and expertise.

There are many variables that impact cloud costs. As you can see, much of the decision-making rests with engineers, but the financial outcomes are generally the responsibility of finance teams.

Also, while Unit Price is often the easiest lever to optimize, it is important to remember that reducing Volume or selecting the right Service can oftentimes have the biggest impact.

Solutions like CloudZero exist to facilitate this collaboration, giving organizations maximum control over the cloud cost formula.