For data professionals interested in data modeling, artificial intelligence, and machine learning, Databricks offers a robust cloud data platform. The Databricks Lakehouse combines data lake and data warehouse capabilities in one architecture.

This makes Databricks particularly suitable for building sophisticated data analytics, artificial intelligence (AI), and machine learning (ML) models.

Plus, Databricks claims its lakehouse is up to 12X cheaper than traditional alternatives. In April 2022, Databricks announced that it now delivers up to 4X better price performance on AWS for data lakehouse operations using Graviton2 instances.

Does that hold true, and how does Databricks pricing actually work? Let’s take a closer look at that and more below.

How Does Databricks Charge?

Databricks charges you based on the compute resources you consume. Databricks uses per-second billing for this pay-as-you-go model. Using Databricks doesn’t require upfront costs or recurring contracts. You just pay for what you need when you need it (on-demand rate).

If you want to use Databricks for free with limited features, for example to train your data team, you can use the Databricks Community Edition. To try the full platform, Databricks offers a free 14-day trial.

You can also earn discounts off the standard on-demand rate in two ways:

- Commit to a certain amount of usage; the greater your commitment, the greater your discount. Plus, you can use the discount across multiple clouds.

- Use Spot Instances whenever applicable to get up to 90% off on-demand or standard pricing.

Databricks bills per Databricks Unit (DBU).

What is a DBU in Databricks?

A Databricks Unit (DBU) measures the amount of processing power you use on Databricks’ Lakehouse Data Platform per hour. Billing is based on per-second usage.

To determine the cost of Databricks, multiply the number of DBUs you used by the dollar rate for each DBU.
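As a rough illustration of that multiplication, here is a minimal Python sketch. The function name, the DBU consumption rate, and the dollar rate are all hypothetical values chosen for the example, not published Databricks prices.

```python
def dbu_cost(dbus_per_hour: float, seconds_running: int, rate_per_dbu: float) -> float:
    """Estimate the Databricks charge in dollars for one workload.

    dbus_per_hour: how many DBUs the workload consumes per hour (assumed)
    seconds_running: runtime in seconds, since billing is per second
    rate_per_dbu: dollar rate per DBU (assumed, varies by tier and compute type)
    """
    hours = seconds_running / 3600          # convert per-second usage to hours
    dbus_used = dbus_per_hour * hours       # total DBUs consumed
    return dbus_used * rate_per_dbu         # DBUs x dollar rate per DBU


# Hypothetical workload: 2 DBUs per hour, running for 90 minutes, at $0.50 per DBU
cost = dbu_cost(dbus_per_hour=2.0, seconds_running=5400, rate_per_dbu=0.50)
print(f"Estimated Databricks charge: ${cost:.2f}")
```

This covers only the Databricks side of the bill. The cloud provider's charge for the underlying instances is separate.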

Several factors determine how many DBUs a specific workload consumes, including:

- The amount of data it processes

- How much memory it uses

- The vCPU power it takes

- Your Region

- Pricing tier and, thus, the type of Databricks services you use

Your Cloud Service Provider (CSP) plays a crucial role in how much you actually spend on Databricks (Total Cost of Ownership). Here’s why.

Understanding Databricks Pricing: What You Need To Know

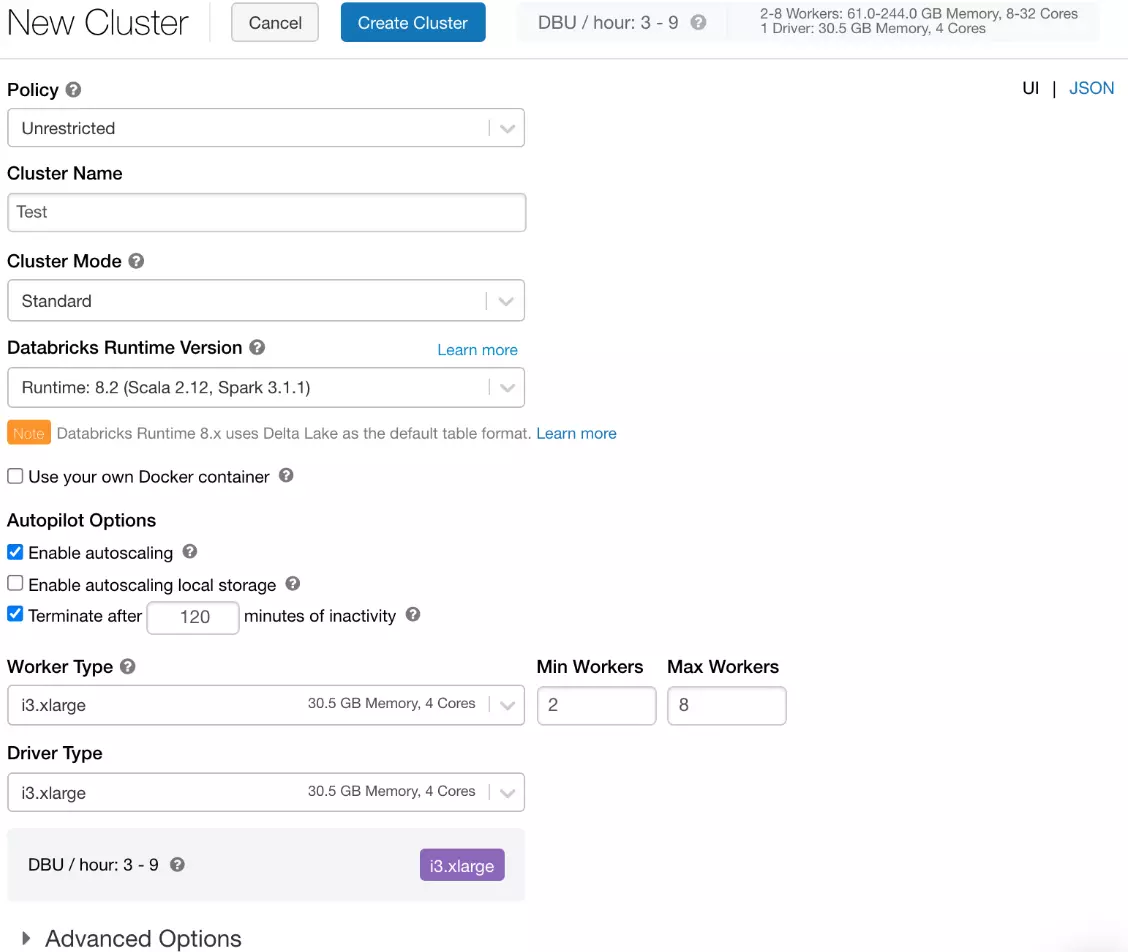

Pricing for Databricks clusters depends on the following factors:

- Databricks pricing tier you select (Standard, Premium, or Enterprise).

- Databricks Compute type you choose (Jobs Compute for specific data engineering pipelines, SQL Compute for BI reporting and SQL queries, and All-Purpose Compute for general data science and ML workloads) as well as Serverless Compute.

- Your Cloud Service Provider (AWS, Azure, or Google Cloud) and region. Unlike standalone services like Snowflake, Databricks has native AWS, Microsoft Azure, and GCP offerings.

Your cloud provider provides the compute instances, storage, and networking capacity you use with Databricks — and you pay the CSP a separate fee for those.

Databricks consumes DBUs as long as the compute cluster is active. The number of DBUs you use depends on various factors, such as your data volume, processing time, and the complexity of your data transformation.

Example: Let’s say you used an i3.xlarge cluster for a two-hour data pipeline that consumed three DBUs to complete your task. You’d need to pay your cloud service provider directly for the compute cluster you used during the two hours and then pay an additional charge in DBUs to Databricks.
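The two-part bill in this example can be sketched in Python as follows. The class name and both dollar rates are hypothetical placeholders. Check your provider's and Databricks' current price lists for real figures.

```python
from dataclasses import dataclass


@dataclass
class PipelineRun:
    hours: float                   # how long the cluster ran
    num_instances: int             # number of cloud instances in the cluster
    instance_rate_per_hour: float  # hypothetical CSP price per instance-hour
    dbus_consumed: float           # DBUs the job consumed
    rate_per_dbu: float            # hypothetical Databricks dollar rate per DBU

    def csp_charge(self) -> float:
        """What you pay the cloud provider for the instances."""
        return self.hours * self.num_instances * self.instance_rate_per_hour

    def databricks_charge(self) -> float:
        """What you pay Databricks for DBU usage."""
        return self.dbus_consumed * self.rate_per_dbu

    def total(self) -> float:
        return self.csp_charge() + self.databricks_charge()


# Two-hour pipeline on one instance that consumed three DBUs (rates are made up)
run = PipelineRun(hours=2, num_instances=1, instance_rate_per_hour=0.30,
                  dbus_consumed=3, rate_per_dbu=0.25)
print(f"CSP: ${run.csp_charge():.2f}, Databricks: ${run.databricks_charge():.2f}, "
      f"total: ${run.total():.2f}")
```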

Behind the scenes, when the job starts, Databricks automatically turns on your CSP-provided compute instances (such as Amazon EC2 instances), executes the task, and then switches the instances off after a predefined idle period to save costs (Databricks’ auto-terminate feature).

Credit: Databricks auto-terminate feature
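For illustration, a cluster specification with an idle timeout might look like the sketch below. The field names follow the Databricks Clusters API as commonly documented, but treat them as assumptions and verify them against the current API reference. The cluster name, worker count, and timeout are hypothetical, and the runtime version is left as a placeholder.

```python
import json

# Sketch of a cluster specification expressed as a plain dictionary.
# Values are illustrative only; nothing here is sent to Databricks.
cluster_spec = {
    "cluster_name": "nightly-etl",          # hypothetical name
    "spark_version": "<runtime-version>",   # placeholder, fill in a real runtime
    "node_type_id": "i3.xlarge",            # instance type from the example above
    "num_workers": 2,                       # hypothetical cluster size
    "autotermination_minutes": 30,          # shut down after 30 idle minutes
}

# Serialize to JSON, the format a REST request body would use
print(json.dumps(cluster_spec, indent=2))
```

A shorter idle timeout reduces the time you pay for instances and DBUs while nothing is running, at the cost of more frequent cluster restarts.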

In a minute, we’ll examine pricing on each cloud provider. Meanwhile, you can test out Databricks for 14 days free to see if it’s right for your workload.

What does the Databricks free trial provide?

You get user-interactive notebooks to work with Apache Spark, Delta Lake, Python, TensorFlow, SQL, Keras, Scala, MLFlow, and scikit-learn, among other tools. If you want to deploy Databricks on a private cloud, you’ll need to contact them for custom configuration.

During the trial, Databricks won’t charge you for using its service, but the underlying cloud infrastructure will (such as Amazon EC2 instance or Azure VMs).

You can cancel the free trial at any time before it expires. Otherwise, you’ll automatically be subscribed to your current plan.

Here’s how your AWS, Azure, or Google Cloud infrastructure affects your Databricks costs.

1. Databricks pricing on AWS

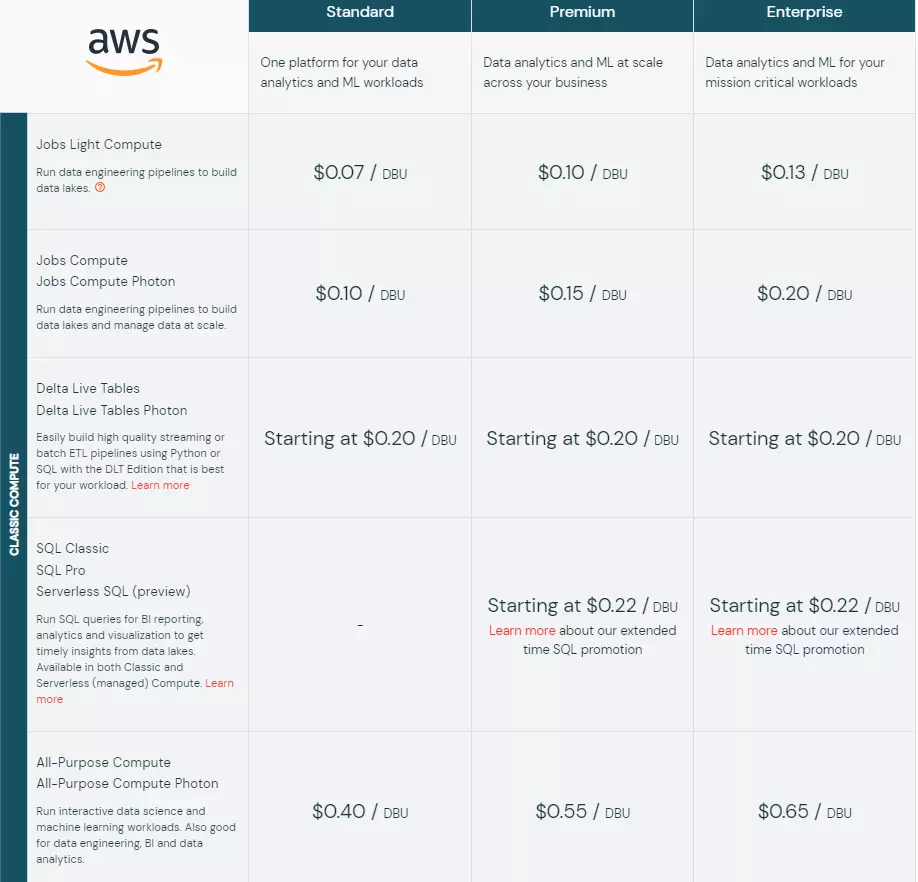

Databricks on AWS uses pay-as-you-go pricing, so you only pay for what you use (on-demand rate billed per second). If you commit to a certain level of consumption, you can get discounts. There are three pricing tiers and 16 Databricks compute types available here:

Databricks on AWS pricing

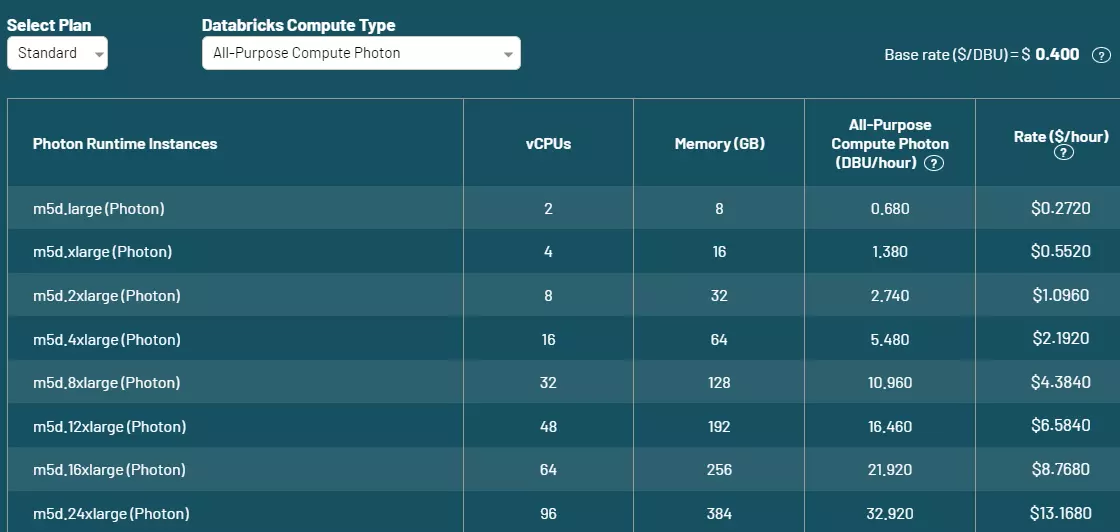

This is what your dollar rate per DBU for one hour using an m5d instance type would look like if you chose the Standard plan and an All-Purpose Compute (with Photon) Databricks Compute type:

Databricks on AWS pricing for m5d instance types

However, each plan includes or lacks some capabilities. For example, the Standard plan is 50X faster than Apache Spark but doesn’t include Databricks SQL Workspace and Databricks SQL Optimization, whereas the other two plans do.

Credit: Databricks

Databricks’ Serverless Compute service will run on Databricks’ AWS account, so you won’t have to pay Databricks and AWS separately.

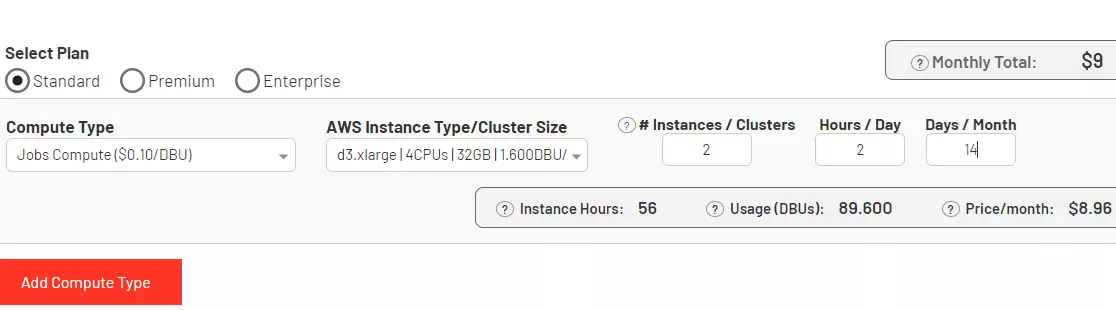

Now, if you want to simplify that calculation, Databricks offers a calculator with filters to make it easier:

Credit: Databricks pricing on AWS calculator

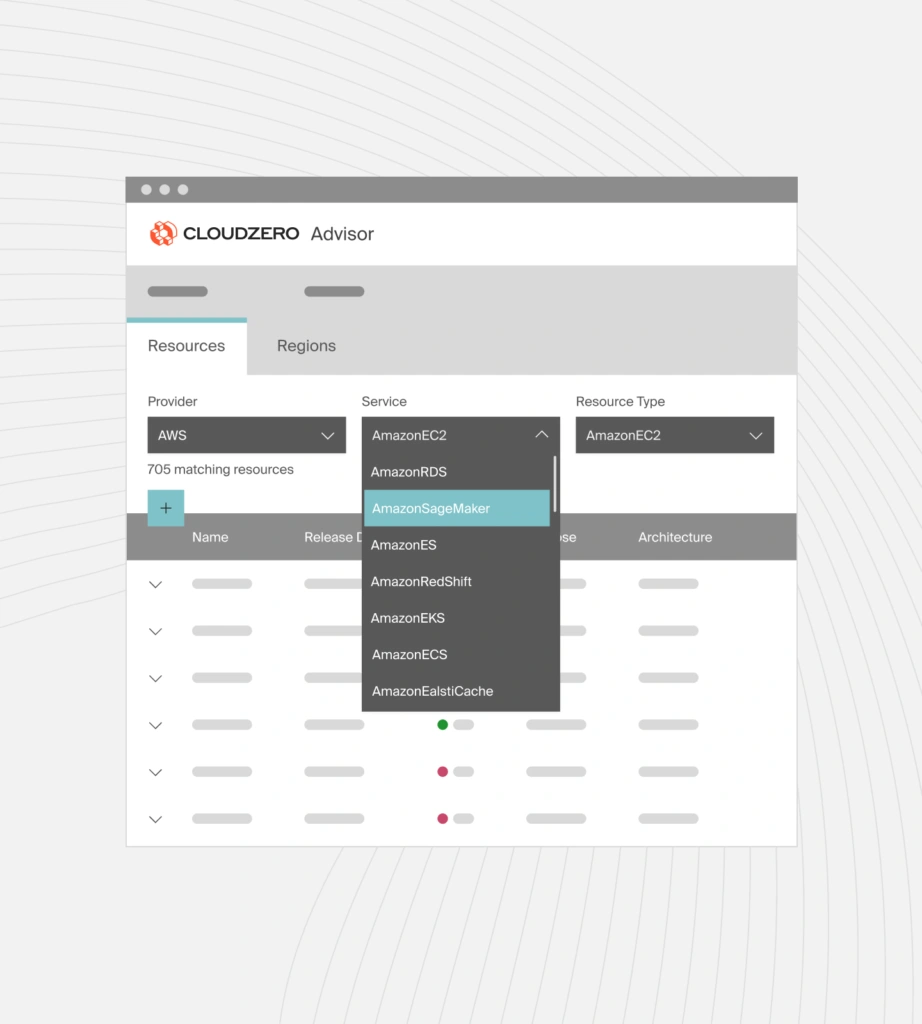

Then, with CloudZero Advisor, you can also get recommendations on AWS services such as EC2, S3, and ElastiCache based on pricing, performance, and instance configuration to optimize your CSP costs. Here’s an idea of what to expect:

Discover the best AWS tools based on pricing, AWS services, regions, instance types, sizes, and more using CloudZero Advisor.

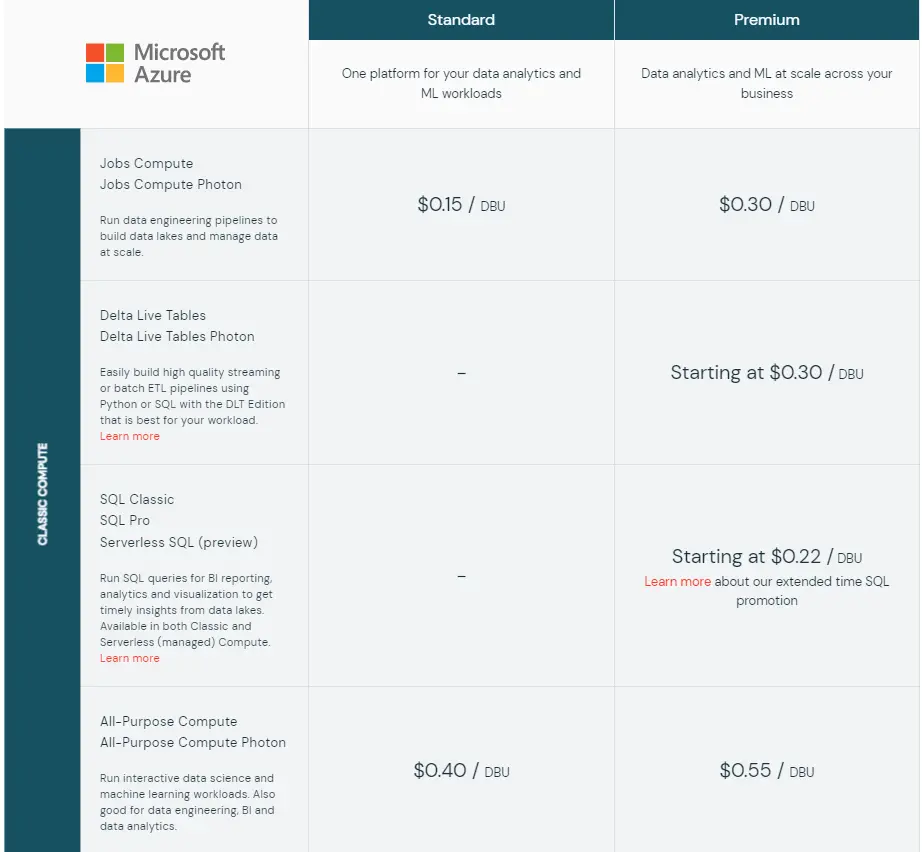

2. Databricks pricing on Azure

The Databricks pricing on Azure offers two plans (Standard and Premium) and supports nine types of Databricks compute workloads:

Microsoft Azure Databricks pricing

On top of the payment for Azure virtual machine usage, Azure Databricks will also charge for managed storage, disks, and blobs.

Nonetheless, Azure offers discounts of up to 33% and 37% off the on-demand DBU rate per hour when you commit to a certain usage level for one and three years, respectively. If you want the best deal, you can also use spot instances.
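To see what those commitment discounts mean for a DBU rate, here is a small Python sketch. It applies the maximum discounts quoted above (33% and 37%). The on-demand rate and the plan labels are hypothetical, and actual discounts depend on the commitment size.

```python
# Maximum discounts off the on-demand DBU rate, taken from the figures above
COMMIT_DISCOUNTS = {
    "on_demand": 0.00,
    "one_year": 0.33,
    "three_year": 0.37,
}


def discounted_dbu_rate(on_demand_rate: float, plan: str) -> float:
    """Return the best-case DBU rate for a given commitment plan."""
    return on_demand_rate * (1 - COMMIT_DISCOUNTS[plan])


on_demand_rate = 0.40  # hypothetical dollars per DBU
for plan in COMMIT_DISCOUNTS:
    rate = discounted_dbu_rate(on_demand_rate, plan)
    print(f"{plan}: ${rate:.3f} per DBU")
```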

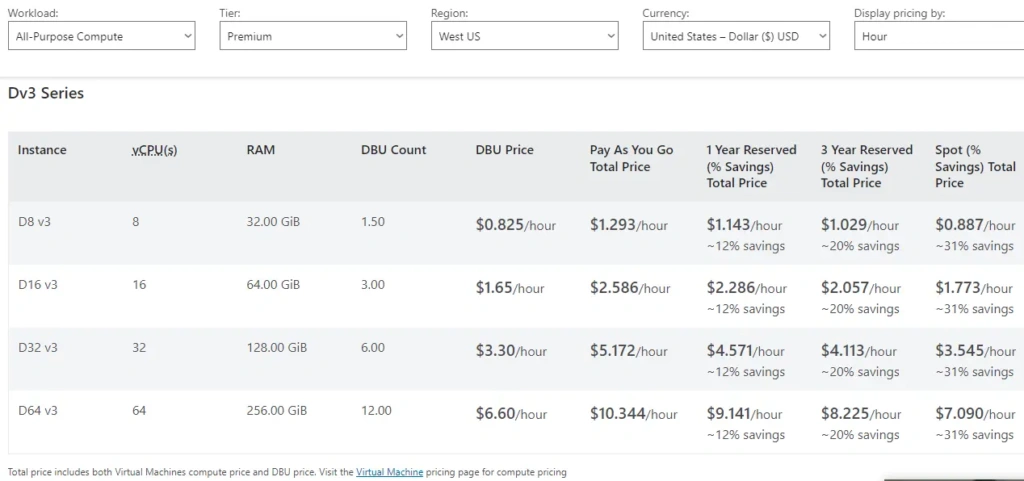

Here’s an example of prices if you used Azure’s DV3 series instances:

Microsoft Azure Databricks pricing for General Purpose – DV3 Series instances

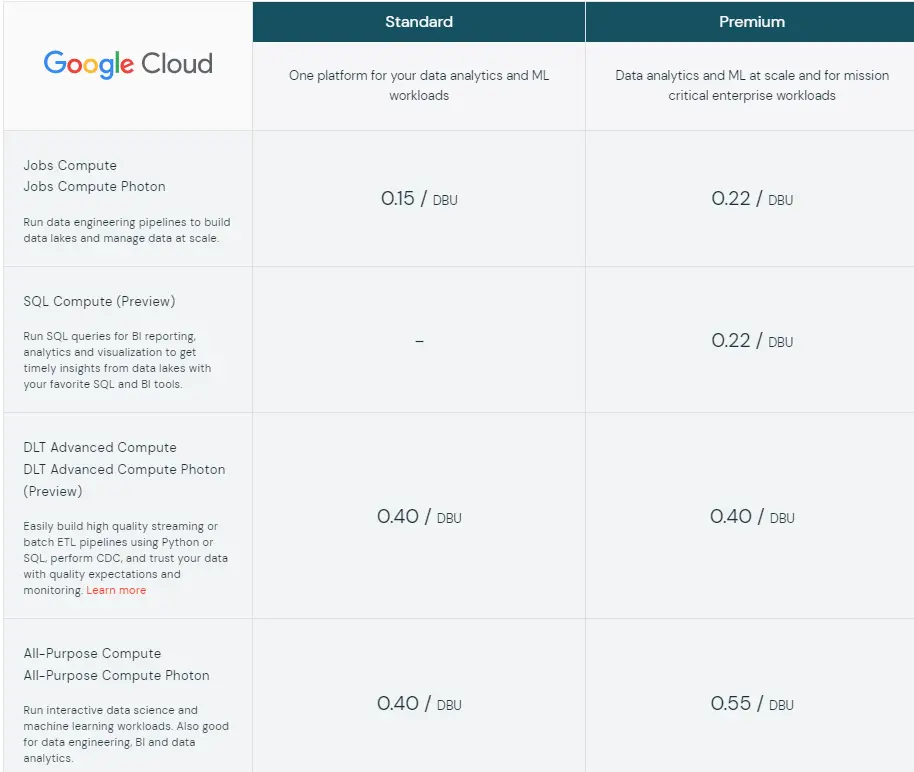

3. Databricks pricing on Google Cloud

Databricks on Google Cloud also offers on-demand billing, like AWS and Azure, without upfront costs or contracts. You’ll get two plans (Standard and Premium), and seven compute types to work with:

Databricks on Google Cloud pricing

Here’s a more detailed example, with various pricing factors considered:

Pricing for Databricks on Google Cloud

What Affects Your Databricks Costs?

Below are a few key takeaways to keep in mind:

- Databricks bills in Databricks Units (DBUs), which measure processing power per hour and are charged per second of usage.

- Compute and storage capacity influence your Total Cost of Ownership when using Databricks.

- Your Cloud Service Provider charges you directly for storing your data in cloud object storage (such as Amazon S3 or Azure Data Lake Storage).

- Compute costs have two components: First, you pay your CSP directly for the underlying compute infrastructure (such as Amazon EC2 or Azure VMs). Then, you pay for DBU usage as long as your Databricks compute cluster is running.

Accurately Collect, Understand, And Optimize Your Databricks Costs With CloudZero

Databricks integrates data lake and data warehouse capabilities for modern data professionals. The tool is handy for training and analyzing AI and machine learning models.

Even so, many people feel that Databricks’ pricing is a bit high. To reduce your costs, you can:

- Use Databricks auto-termination to shut down idle clusters, which reduces waste and lowers your Databricks TCO.

- If you already know how many compute and storage resources you will need, take advantage of committed-usage discounts and Spot instance capacity.

However, if you aren’t sure how much capacity you’ll need, you can use a robust enough tool to aggregate, collect, normalize, analyze, and present your Databricks costs in a way that’s valuable to you.

Once you’ve tracked the cost insights for a while, you’ll have enough accurate, granular intelligence to make a decision.

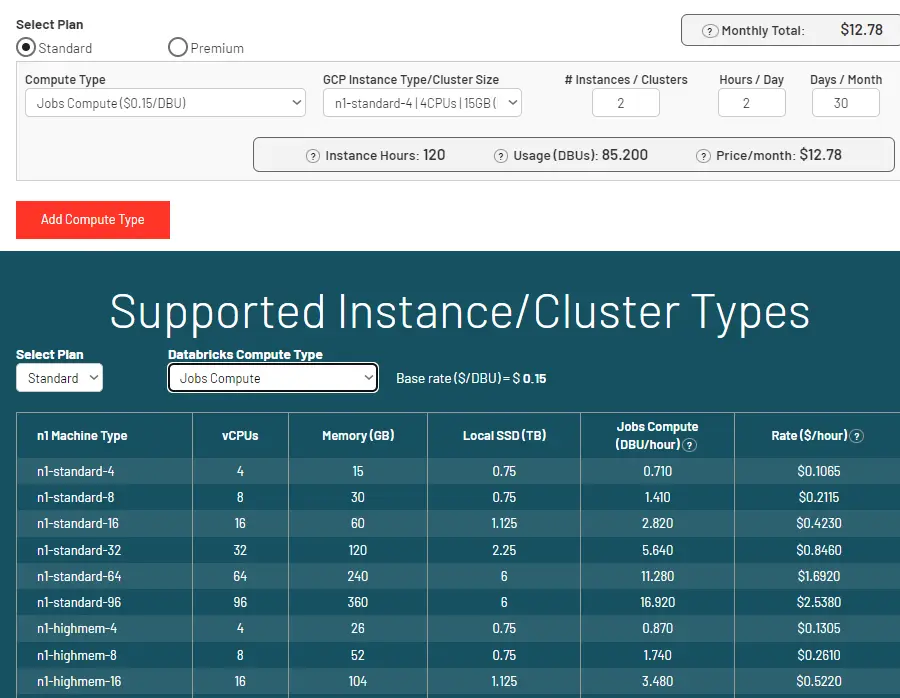

You can achieve all of this and more with CloudZero.

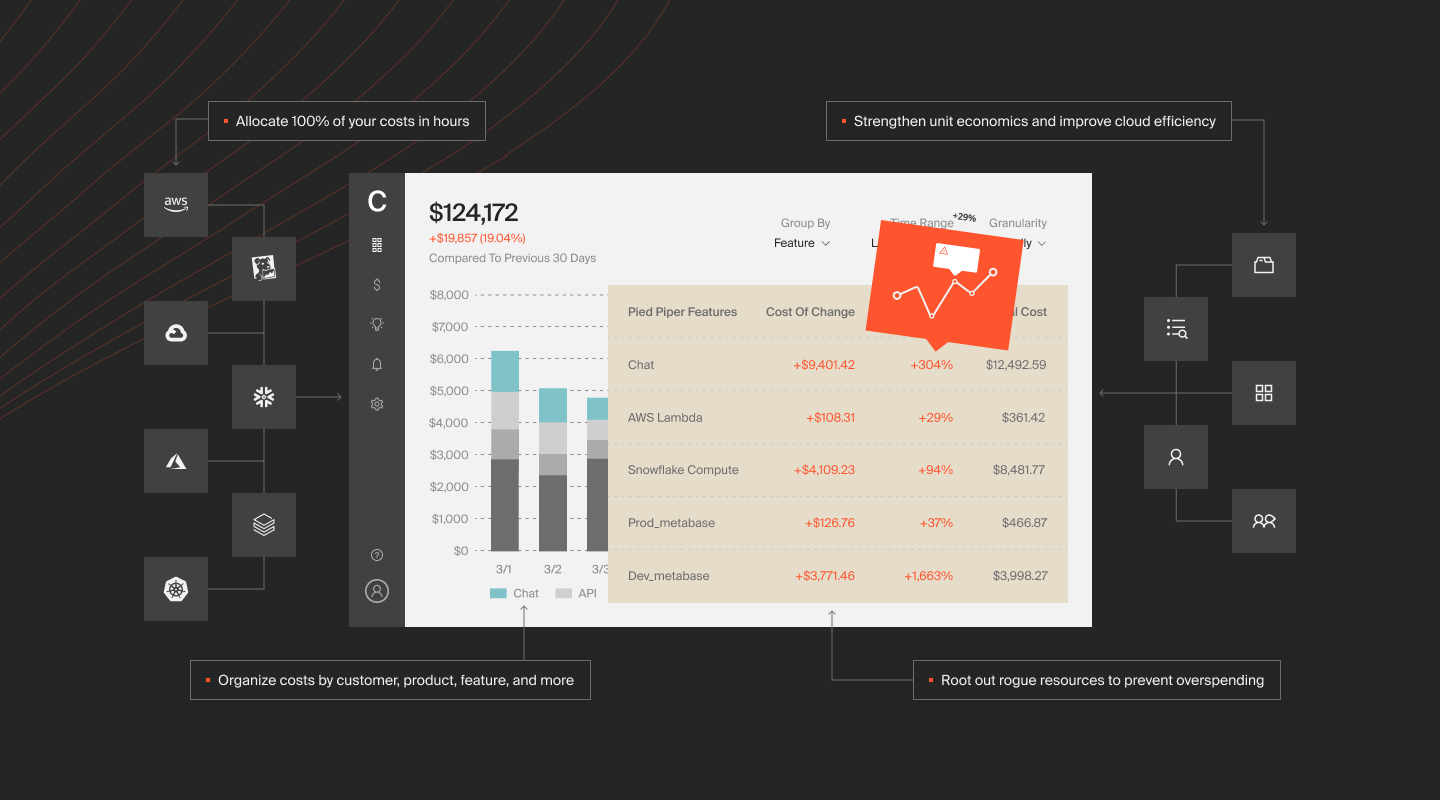

CloudZero AnyCost aggregates, enriches, visualizes, analyzes, and presents your Databricks costs across AWS, Azure, Kubernetes, and GCP. For organizations using Databricks for AI and ML workloads, CloudZero’s AI cost management framework provides the additional layer of visibility needed to track costs by model, training run, and inference endpoint.

The future is multi-cloud, and CloudZero allows you to get a holistic view of all your costs, including Databricks, MongoDB, New Relic, and Snowflake costs.

With CloudZero AnyCost, you can zoom in on your costs and view costs by customer, product, feature, team, project, environment, service, and more.

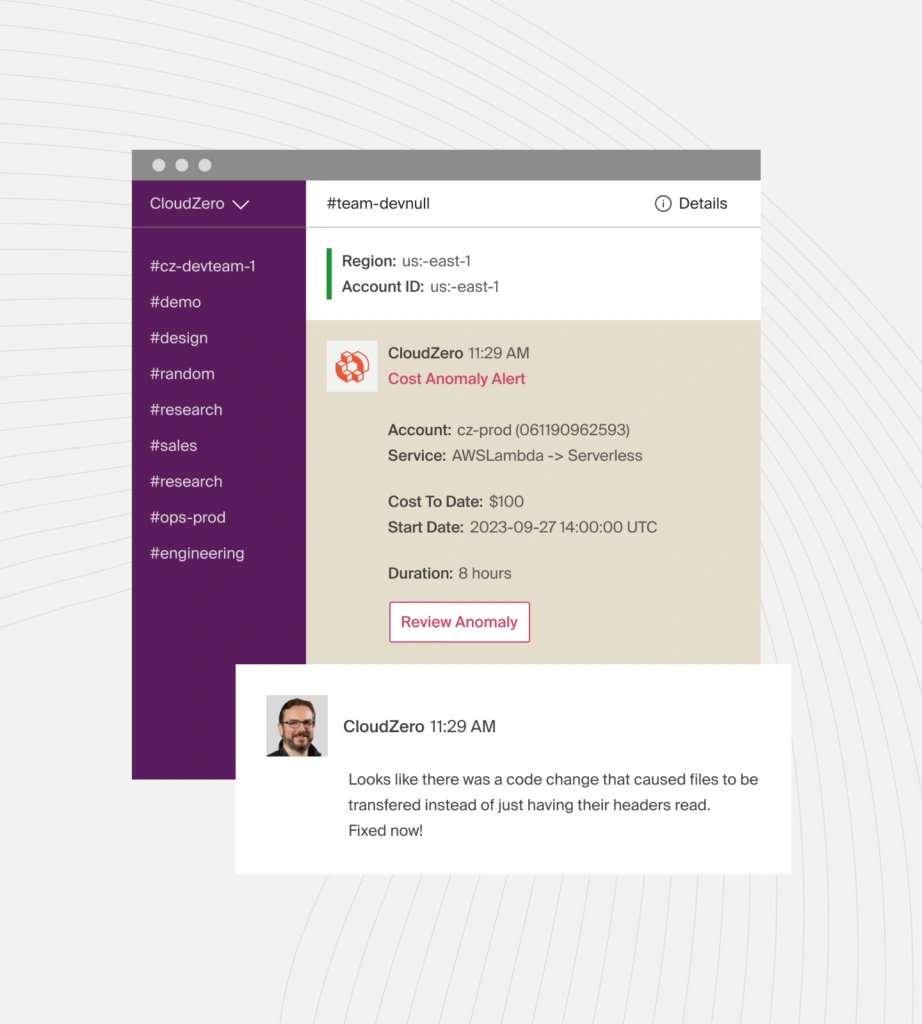

Real-time anomaly detection sends smart alerts to a specific team or individual via Slack, email, or text so they can prevent overspending.

to see the power of CloudZero for yourself!

FAQs

We’ve answered some of the most common questions about Databricks pricing below:

What is the Databricks pricing model?

It’s pay-as-you-go. There are no contracts or upfront payments required. You can use this on-demand rate (as needed) if you’re unsure how many resources you’ll require to complete a particular task. Committed usage offers discounts if you know how much you’ll use.

How is Databricks billed?

Databricks charges you based on the number of Databricks Units (DBUs) you consume, billed per second of usage.

Does Databricks have a free plan?

Yes. It offers a 14-day free trial, after which you’ll be automatically billed at the on-demand rate if you don’t cancel.

Is Snowflake more expensive than Databricks?

It can be tough to compare Snowflake vs. Databricks pricing because both offer unique data cloud services with different pricing considerations.

Is Databricks an alternative to Snowflake?

Many organizations use Databricks (data science, analytics, and modeling for AI and ML) and Snowflake (data analytics and business intelligence) together. For a detailed comparison of their performance, pricing, and use cases, check out Snowflake vs. Databricks.