As cloud systems become increasingly sophisticated, you want a cloud monitoring platform that helps you identify, isolate, and fix root-cause issues. Meanwhile, engineering leaders are under increasing pressure to reduce technology costs as the global economic outlook remains uncertain.

With Datadog, you can observe, monitor, analyze, and report on the health of your infrastructure, applications, and services in any cloud and at scale. Engineers tell us that Datadog is easy to use, unlike many alternatives, and offers actionable insights into root causes and cloud cost drivers.

One big complaint we often hear about Datadog is that it can be quite expensive. In this guide, we’ll discuss Datadog pricing, cost optimization strategies, and more.

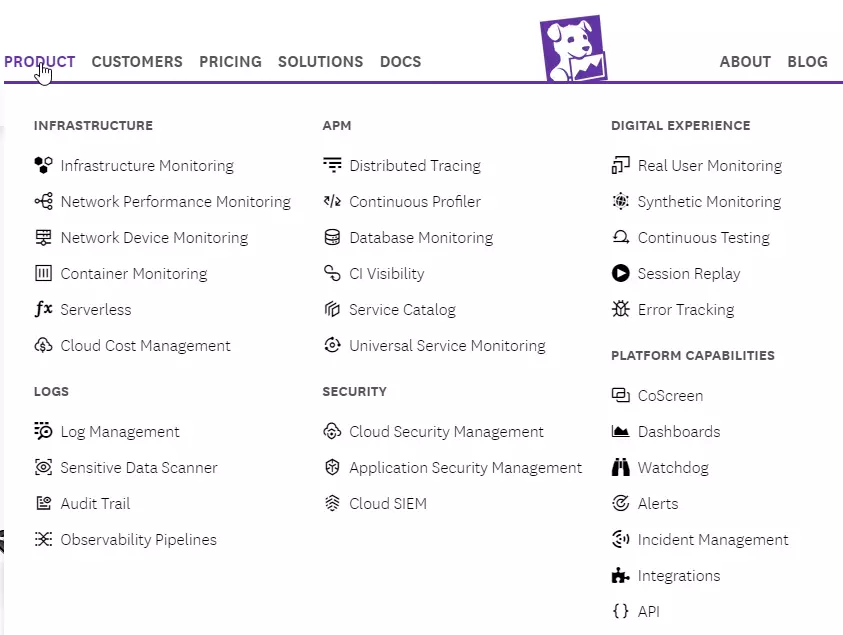

What Does Datadog Do?

Datadog is a full-stack observability and cloud monitoring platform. You can track the health and performance of your cloud applications by aggregating, analyzing, and reporting telemetry and logs with Datadog.

Datadog offers over 18 monitoring services, organized into five categories: monitoring infrastructure health, application performance monitoring (APM), managing logs, monitoring digital experiences, and tracking security posture.

You can monitor any software stack or application at various levels of detail with Datadog’s all-in-one monitoring solution.

Understanding Datadog Pricing And Cost Management

Datadog pricing is usage-based and depends on which monitoring services you use. Infrastructure monitoring, for instance, is priced differently from application performance monitoring, and so on.

There are a few more variables to consider, though. Some services charge per host, while others bill you per user, per GB of data, by spend, or per session/test/function/run.

For example, Datadog charges per host per month for infrastructure monitoring. It defines a host as any virtual or physical OS instance it monitors. That instance may be a server, virtual machine (VM), node (for Kubernetes), or App Service Plan instance (for Azure App Services).

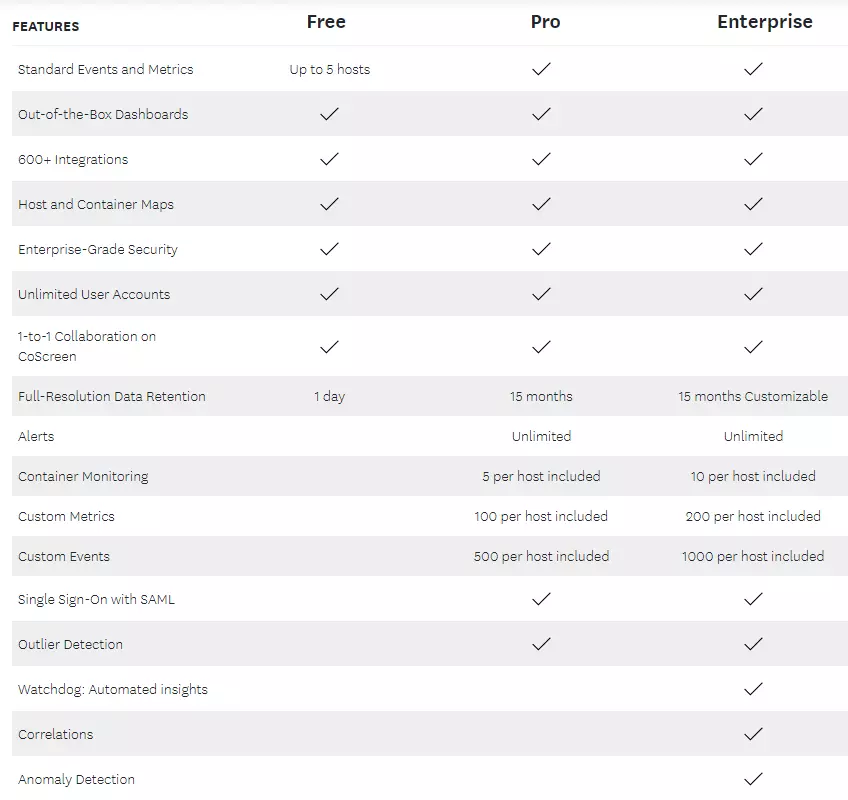

The service is free for up to five hosts and one day of full-resolution data retention. After that, Datadog plans start at $15 per host per month, and volume discounts apply if you use over 600 hosts per month.

Datadog infrastructure monitoring plans
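As a rough illustration of how per-host billing adds up, here is a minimal Python sketch. It assumes, for simplicity, that a fleet of five or fewer hosts stays on the free tier and that any larger fleet pays the $15 starting rate for every host. Volume discounts above 600 hosts are negotiated, so the sketch only flags them. The function and constant names are hypothetical.

```python
# Minimal sketch of a monthly infrastructure monitoring estimate.
# Assumptions (hypothetical, not Datadog's billing logic):
#   - a fleet of up to FREE_HOST_LIMIT hosts fits the free tier;
#   - any larger fleet pays the Pro starting rate for every host;
#   - negotiated volume discounts above 600 hosts are not priced.
FREE_HOST_LIMIT = 5
PRO_PRICE_PER_HOST = 15.00  # USD per host per month (starting price cited above)
VOLUME_DISCOUNT_THRESHOLD = 600


def estimate_infra_cost(hosts: int, price_per_host: float = PRO_PRICE_PER_HOST) -> float:
    """Return an estimated monthly infrastructure monitoring cost in USD."""
    if hosts < 0:
        raise ValueError("hosts must be non-negative")
    if hosts <= FREE_HOST_LIMIT:
        return 0.0
    return hosts * price_per_host


def may_get_volume_discount(hosts: int) -> bool:
    """True when the estimate is likely an upper bound because of volume discounts."""
    return hosts > VOLUME_DISCOUNT_THRESHOLD


for n in (3, 40, 750):
    note = " (volume discounts may apply)" if may_get_volume_discount(n) else ""
    print(f"{n} hosts -> ${estimate_infra_cost(n):,.2f}/month{note}")
# 3 hosts -> $0.00/month
# 40 hosts -> $600.00/month
# 750 hosts -> $11,250.00/month (volume discounts may apply)
```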

Optimizing Versus Cutting Datadog Costs

Monitoring costs can add up quickly with Datadog. For example, a microservices architecture can generate a large volume of logs, increasing your ingestion costs. In addition, Datadog charges higher data retention fees the longer you store your monitoring metrics and logs.

It also charges $1 per container per month (committed use), meaning your Datadog charges can ramp up quite a bit as your containerized application scales.

Even so, building your own cloud monitoring stack with open-source and “free” tools, such as Grafana and Prometheus, can take a lot of time and skill and may cost more than using a SaaS monitoring service like Datadog.

If you’re like most engineers, CTOs, and CFOs, you’re under pressure to keep costs low and ROI high. However, you do not want to indiscriminately slash your Datadog cloud monitoring cost. Instead, you’ll want to optimize it. Here’s the difference.

What Is Datadog Cost Optimization?

This refers to reducing what you spend on Datadog by identifying inefficiencies you can remove, minimize, or repurpose.

Like with other cloud tools, cost optimization for Datadog requires first understanding where your money is going. Only then can you efficiently pinpoint what to cut or retain without weakening your monitoring and observability capabilities.

To optimize Datadog costs, organizations experiment with a variety of workarounds, including:

- Reducing the time they keep logs in Datadog

- Ingesting a portion of the logs without retaining them at all (and dealing with log rehydration when required)

- Ingesting fewer logs altogether, potentially hindering observability and monitoring

Workarounds like these are not ideal. They can compromise insights and even risk regulatory compliance. A smarter approach ensures you maintain visibility while unlocking significant advantages.

The benefits of optimizing Datadog costs

Cost optimization ensures you unlock the full potential of Datadog while staying in control of your spending.

- Save money without sacrificing performance. By focusing only on the features and data you truly need, you can cut down on unnecessary costs. This means you’re not overpaying for metrics, logs, or features you’re not actively using.

- Make scaling affordable. As your business grows, your monitoring needs increase. Datadog cost optimization ensures you can scale your infrastructure without seeing your costs spiral out of control.

- Boost team efficiency. When you streamline what’s being monitored, your team has fewer distractions. They can focus on meaningful alerts and insights, improving response times and productivity.

- Get more value from your tools. Optimizing costs often means auditing and fine-tuning how you use Datadog. This helps you uncover better ways to use the platform, ensuring you leverage its full potential while staying within budget.

- Avoid surprise bills. A well-optimized Datadog configuration ensures you’re not caught off guard by unexpected charges. You can avoid unpleasant surprises by managing data retention, setting alerts for usage thresholds, and keeping track of your subscriptions.

- Align spending with business goals. When your costs are optimized, you’re investing in monitoring that directly supports your key business objectives. This ensures that every dollar spent contributes to growth, reliability, and performance.

Proactively Manage Datadog Costs With These Best Practices

Try the following techniques and see how your Datadog costs change over time.

1. Chat with your account manager or sales rep

Your Datadog Account Manager or sales representative can be helpful in several ways. Here are two example scenarios.

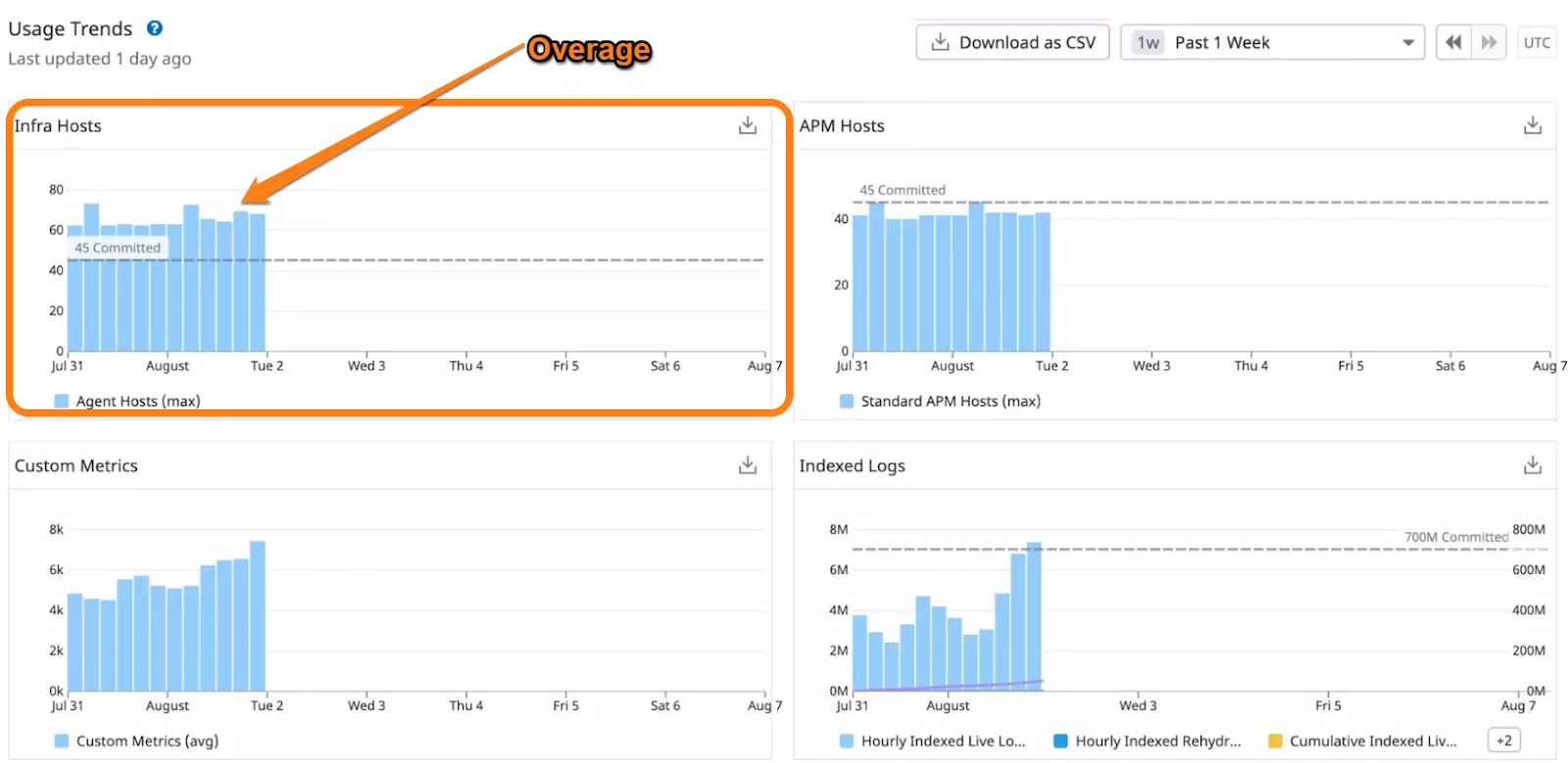

You can ask them to clarify any gray areas in your potential contract. For instance, ask about the price and impact of overages on your Datadog subscription.

Take services that charge “per host,” for example. They come with a limited volume of metrics and containers you can query and monitor. Exceeding this threshold can incur overages, which can be expensive (Datadog charges $0.002 per additional container per hour).

Overages in Datadog usage
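The sketch below estimates a container overage under stated assumptions: the per-host container allotment is a hypothetical placeholder (check your contract for the real figure), overage is billed on the average number of extra containers running each hour at the $0.002 rate, and a month is approximated as 730 hours.

```python
# Minimal sketch: container overage on a per-host plan.
# Assumptions (hypothetical): included_per_host is a placeholder allotment,
# and overage is billed on the average number of extra containers per hour.
OVERAGE_PER_CONTAINER_HOUR = 0.002  # USD, rate cited above
HOURS_PER_MONTH = 730               # approximate average (8,760 hours / 12)


def container_overage_cost(hosts: int, avg_containers: float,
                           included_per_host: int,
                           hours: int = HOURS_PER_MONTH) -> float:
    """Return the estimated overage in USD for one billing period."""
    allotment = hosts * included_per_host
    extra = max(0.0, avg_containers - allotment)
    return extra * OVERAGE_PER_CONTAINER_HOUR * hours


# 20 hosts with a hypothetical allotment of 5 containers each, averaging
# 300 running containers: 200 extra containers over a 730-hour month.
print(f"${container_overage_cost(20, 300, included_per_host=5):,.2f}")  # $292.00
```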

Another thing to ask about is where the next pricing tier begins, so you know when you are nearing it. With that in mind, you can calculate the financial impact of bumping up your volume for better unit pricing.
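Here is one way to run that calculation, as a minimal sketch. The tier table is hypothetical (Datadog's volume pricing is negotiated), and the sketch assumes the tier price applies to your entire volume rather than only to the units above the threshold.

```python
# Hypothetical tier table: (minimum volume, USD per host per month), ascending.
# The discounted price is a placeholder; real volume pricing is negotiated.
TIERS = [(0, 15.00), (600, 13.50)]


def unit_price(volume: int) -> float:
    """Price of the highest tier whose threshold the volume reaches."""
    price = TIERS[0][1]
    for threshold, tier_price in TIERS:
        if volume >= threshold:
            price = tier_price
    return price


def total_cost(volume: int) -> float:
    # Assumes the tier price applies to the whole volume (all-units discount),
    # not only to the units above the threshold.
    return volume * unit_price(volume)


def worth_bumping(current: int, threshold: int) -> bool:
    """True if committing to the next tier's threshold costs less overall."""
    return total_cost(threshold) < total_cost(current)


print(worth_bumping(580, 600))  # True (8,100 < 8,700)
print(worth_bumping(500, 600))  # False (8,100 > 7,500)
```

With these placeholder prices, the break-even point is 540 hosts (8,100 / 15): above it, committing to 600 hosts costs less than paying the list price for fewer.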

One more tip: First-time Datadog users can request a free trial to test out the platform before signing up. This reduces risk by verifying the tool suits your monitoring needs before spending money or committing long-term.

2. Choose the right commitment level for your needs right off the bat

A crucial aspect of negotiating your Datadog contract is selecting an ideal commitment level for your requirements. You do not want to overcommit from the beginning, even if you know your usage will grow over time.

Instead, you can adjust your committed volumes two or three times a year, which can help optimize your unit prices.

However, keep in mind that you can only increase, not decrease, your commitment level. So, it may be smarter to set your commitment at the lower end of your projected volume range and gradually increase it as your needs grow.

Something else: Datadog offers three billing options (annual, monthly, or hourly). An annual commitment delivers the most savings (on the Enterprise plan, $23 per host per month billed annually versus $27 per host per month without an annual commitment). You can, however, choose a shorter-term commitment if cash flow is an issue and you need more flexibility.
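To put the annual discount in concrete terms, the sketch below treats the $23 and $27 figures as per-host monthly rates for the Enterprise plan, with and without an annual commitment. The host count is hypothetical.

```python
# Per-host monthly Enterprise infrastructure rates cited above (USD).
ANNUAL_RATE = 23.0          # with an annual commitment
MONTH_TO_MONTH_RATE = 27.0  # without one


def yearly_savings(hosts: int) -> float:
    """Savings over 12 months from billing annually instead of month-to-month."""
    return hosts * (MONTH_TO_MONTH_RATE - ANNUAL_RATE) * 12


savings_pct = (MONTH_TO_MONTH_RATE - ANNUAL_RATE) / MONTH_TO_MONTH_RATE * 100
print(f"{savings_pct:.1f}% cheaper per host")                  # 14.8% cheaper per host
print(f"${yearly_savings(100):,.0f} per year for 100 hosts")  # $4,800 per year for 100 hosts
```

Put the other way around, the month-to-month option costs about 17% more than the annual rate ($4 / $23), which is the price of the extra flexibility.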

3. Take advantage of Datadog’s committed use discounts

The platform has monthly minimum usage commitments for various services, including infrastructure monitoring, APM, log management, and database monitoring. Now, Datadog does not publicly share all the minimum commitment rates.

But as an example, container monitoring costs $1 per prepaid container per month versus $0.002 per on-demand container per hour. In a 30-day month, prepaying can save you $0.44 per always-on container ($0.002 × 720 hours = $1.44 on demand, versus $1 prepaid). That figure can add up fast as your application scales to thousands of containers.
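The arithmetic behind that figure is easy to check, and extending it shows when prepaying stops paying off. This minimal sketch uses the two rates above and assumes on-demand containers are billed only for the hours they actually run; the function and constant names are hypothetical.

```python
PREPAID_PER_CONTAINER_MONTH = 1.00    # USD, committed use
ON_DEMAND_PER_CONTAINER_HOUR = 0.002  # USD, on demand


def prepaid_savings(containers: int, hours_running: float = 24 * 30) -> float:
    """Monthly savings from prepaying; a negative result means on demand is cheaper."""
    on_demand = containers * hours_running * ON_DEMAND_PER_CONTAINER_HOUR
    prepaid = containers * PREPAID_PER_CONTAINER_MONTH
    return on_demand - prepaid


# Prepaying only pays off for containers that run longer than this each month.
BREAK_EVEN_HOURS = PREPAID_PER_CONTAINER_MONTH / ON_DEMAND_PER_CONTAINER_HOUR

print(f"${prepaid_savings(1):.2f}")                 # $0.44 for one always-on container
print(f"${prepaid_savings(5_000):,.2f}")            # $2,200.00 for 5,000 containers
print(f"break-even: {BREAK_EVEN_HOURS:.0f} hours")  # break-even: 500 hours
```

Because $1 buys 500 on-demand hours, the prepaid rate only wins for containers that run more than about 70% of a 30-day month (500 of 720 hours); short-lived or bursty containers may be cheaper on demand.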

4. Reconsider what custom metrics to monitor

Datadog considers a metric custom if it doesn't come from one of its just over 600 integrations. Each custom metric is identified by a unique combination of metric name and tag values (such as host tags). The Pro plan includes 100 custom metrics per host, while the Enterprise plan includes 200.

Here’s the thing: custom metric allotments are pooled across hosts. On the Pro plan, for example, every ten hosts monitored add 1,000 custom metrics to the pool. So, by configuring only some hosts for heavy custom-metric use, you can assign 400 custom metrics to each of two hosts and let the remaining eight (such as build servers) share the other 200.
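A quick way to sanity-check an uneven split is to compare total usage against the pooled allotment. This minimal sketch uses the per-host allotments cited above; the host counts and per-host usage figures are hypothetical.

```python
# Custom metric allotment per host, as cited above.
PER_HOST_ALLOTMENT = {"pro": 100, "enterprise": 200}


def pooled_allotment(hosts: int, plan: str = "pro") -> int:
    """Total custom metrics shared across all hosts on the plan."""
    return hosts * PER_HOST_ALLOTMENT[plan]


def fits_pool(per_host_usage: list[int], plan: str = "pro") -> bool:
    """True if combined usage stays within the pooled allotment."""
    return sum(per_host_usage) <= pooled_allotment(len(per_host_usage), plan)


# Two metric-heavy hosts at 400 each; eight build servers sharing 200.
uneven = [400, 400] + [25] * 8
print(sum(uneven), pooled_allotment(len(uneven)), fits_pool(uneven))  # 1000 1000 True
```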

Then, once you streamline how your account uses custom metrics, you can remove unused tags. When you add tags, make sure they are less granular (coarser) than your existing tags. For instance, if you add a state tag to a collection of metrics you already track at the city level, your custom metric count will not change, because each city already belongs to exactly one state.
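The city and state example can be modeled in a few lines. This simplified sketch counts each unique combination of metric name and tag values once; the metric name, tag values, and host names are hypothetical, and real billing may include additional rules not modeled here.

```python
# Simplified model: each unique (metric name, tag values) pair counts once.
CITY_TO_STATE = {"austin": "tx", "dallas": "tx", "boston": "ma"}


def count_custom_metrics(metric: str, tag_sets: list[dict[str, str]]) -> int:
    """Number of distinct metric name + tag-value combinations."""
    return len({(metric, frozenset(tags.items())) for tags in tag_sets})


city_only = [{"city": c} for c in CITY_TO_STATE]
city_and_state = [{"city": c, "state": s} for c, s in CITY_TO_STATE.items()]
city_and_host = [{"city": c, "host": h} for c in CITY_TO_STATE for h in ("web-1", "web-2")]

print(count_custom_metrics("checkout.latency", city_only))       # 3
print(count_custom_metrics("checkout.latency", city_and_state))  # 3: state adds no new combinations
print(count_custom_metrics("checkout.latency", city_and_host))   # 6: a finer tag multiplies the count
```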

5. Turn off Datadog containerized Agent logs

A portion of the logs generated by the Datadog Agent are about the Agent’s own performance, and you pay for them. You’ll want to stop collecting these Agent logs at both the Agent level and the ingestion level to reduce transit costs.

Disabling log management is also an option. Log collection is disabled by default, but it’s worth checking whether it was enabled, by mistake or on purpose, and left on. Remember, it costs $1.27 per million log events per month and $0.10 per GB ingested or scanned per month, so disabling it can lead to substantial savings over time.
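To see how those two rates combine, here is a minimal sketch of a monthly estimate. It assumes the $1.27 rate applies to indexed log events (the actual rate depends on your retention period) and uses hypothetical volumes.

```python
INGEST_PER_GB = 0.10             # USD per GB ingested
INDEX_PER_MILLION_EVENTS = 1.27  # USD per million log events (assumed: indexed events)


def monthly_log_cost(ingested_gb: float, indexed_events: int) -> float:
    """Estimated monthly log management cost in USD."""
    return (ingested_gb * INGEST_PER_GB
            + indexed_events / 1_000_000 * INDEX_PER_MILLION_EVENTS)


# Hypothetical workload: 2,000 GB ingested; compare indexing 500M vs 250M events.
baseline = monthly_log_cost(2_000, 500_000_000)
halved = monthly_log_cost(2_000, 250_000_000)
print(f"${baseline:,.2f} -> ${halved:,.2f}")  # $835.00 -> $517.50
```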

6. Ingestion controls and retention filters to the rescue

Datadog enables you to set ingestion controls so that an application sends only its most crucial traces. You can then use retention filters to decide which spans are indexed and retained once the traces arrive. Tweaking these settings can help minimize APM overages.
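Ingestion controls largely work through head-based sampling: the decision to keep or drop a trace is made when the trace starts. Below is a generic, deterministic head-based sampler in Python, offered as an illustration rather than Datadog's implementation; the function name and the multiplicative-hash constant are assumptions.

```python
import random

# Illustrative constants (assumed): an odd 64-bit multiplier for hashing trace IDs.
HASH_FACTOR = 1111111111111111111
MAX_UINT64 = 2**64


def keep_trace(trace_id: int, sample_rate: float) -> bool:
    """Deterministically keep roughly `sample_rate` of traces, based on trace ID."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    # Multiplying by an odd constant mod 2**64 scrambles the ID uniformly.
    hashed = (trace_id * HASH_FACTOR) % MAX_UINT64
    return hashed < sample_rate * MAX_UINT64


rng = random.Random(7)
trace_ids = [rng.getrandbits(64) for _ in range(100_000)]
kept = sum(keep_trace(t, 0.25) for t in trace_ids)
print(f"kept {kept / len(trace_ids):.1%} of traces")  # roughly 25%
```

Hashing the trace ID, rather than rolling a random number per span, means every service that sees the same trace makes the same keep-or-drop decision, so you avoid storing fragments of traces.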

7. Reduce egress charges

In addition to Datadog’s own charges, your infrastructure provider may bill you for the data you send to Datadog.

Sending a large volume of logs out through NAT gateways, or through public internet access points in other clouds, incurs data egress charges. If you are an AWS customer, you can route that traffic through AWS PrivateLink instead and ingest logs via a private endpoint. Data transfer savings here can be as high as 80%. Still, point fixes like these only go so far.
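The sketch below compares per-GB processing charges for routing logs through a NAT gateway versus PrivateLink. The rates are assumptions based on typical AWS us-east-1 list prices and may differ in your region; hourly gateway and endpoint charges are not modeled, so the real saving shrinks at low volumes.

```python
# Assumed per-GB data processing rates (USD); check current AWS pricing for
# your region. Hourly NAT gateway and endpoint charges are not modeled.
NAT_GATEWAY_PER_GB = 0.045
PRIVATELINK_PER_GB = 0.01


def transfer_costs(gb_per_month: float) -> tuple[float, float]:
    """Return (NAT gateway cost, PrivateLink cost) for a monthly log volume."""
    return gb_per_month * NAT_GATEWAY_PER_GB, gb_per_month * PRIVATELINK_PER_GB


nat, privatelink = transfer_costs(10_000)  # hypothetical: 10 TB of logs per month
reduction = (nat - privatelink) / nat
print(f"NAT ${nat:,.2f} vs PrivateLink ${privatelink:,.2f} ({reduction:.0%} lower)")
# NAT $450.00 vs PrivateLink $100.00 (78% lower)
```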

The Next Level: Investing In Cloud Cost Intelligence

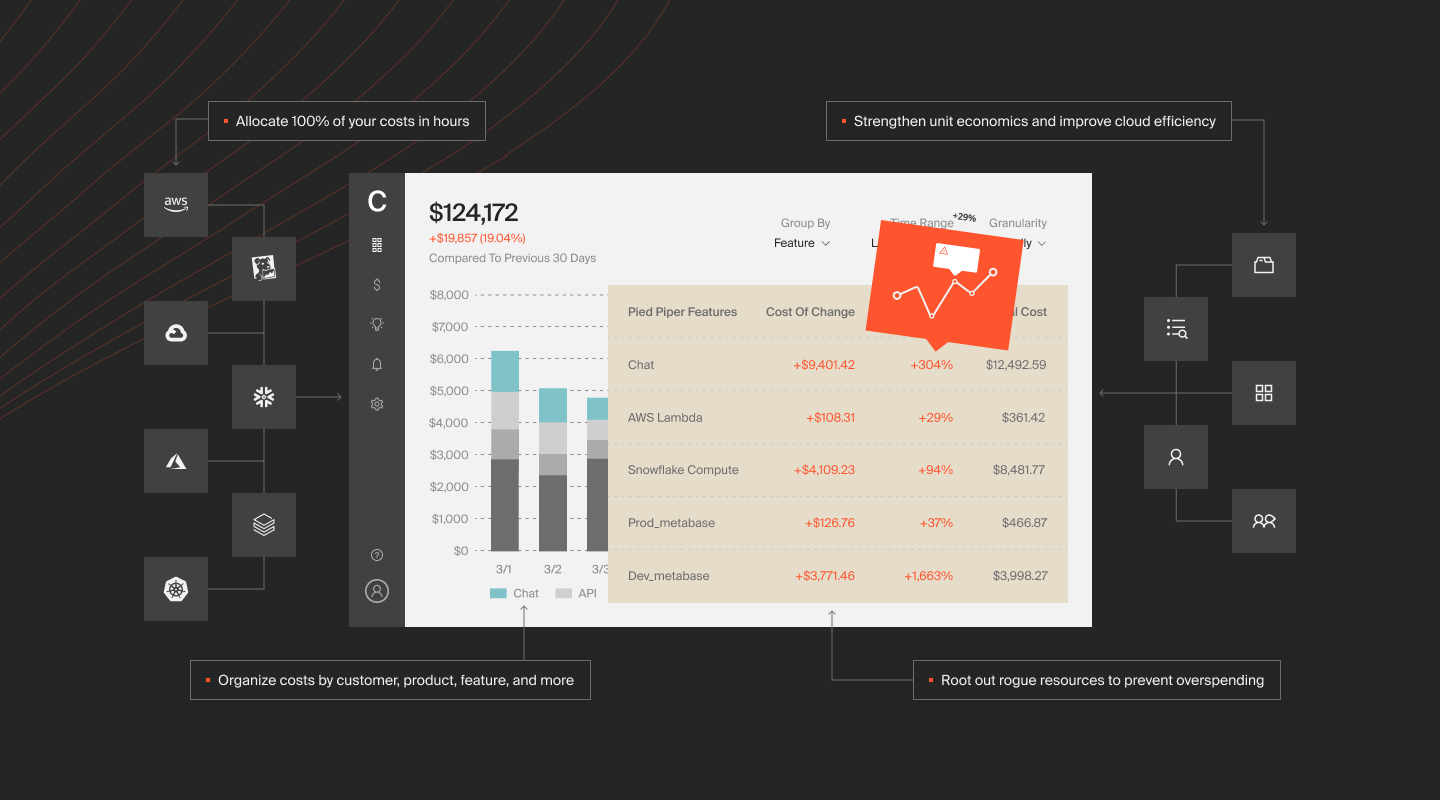

If you want to collect in-depth yet easy-to-digest cloud cost insights, CloudZero can help. Unlike traditional cloud cost management tools, CloudZero’s code-driven approach makes it more of an observability tool than a mere cost platform.

That means you can automatically aggregate, analyze, and report on tagged, untagged, and untaggable cloud cost data from both your infrastructure and applications.

CloudZero also breaks this data down into immediately actionable cost intelligence, such as cost per individual customer, per product, per software feature, per project, and more.

You can also view these insights by role: engineering (cost per deployment, environment, dev team, and more), finance (cost per customer, project, message, and more), and FinOps (COGS, and more).

Better yet, you can use CloudZero AnyCost to combine and analyze costs of different cloud service providers together or independently, including AWS, Google Cloud, and Azure, as well as Kubernetes, Snowflake, MongoDB, Databricks, and New Relic.

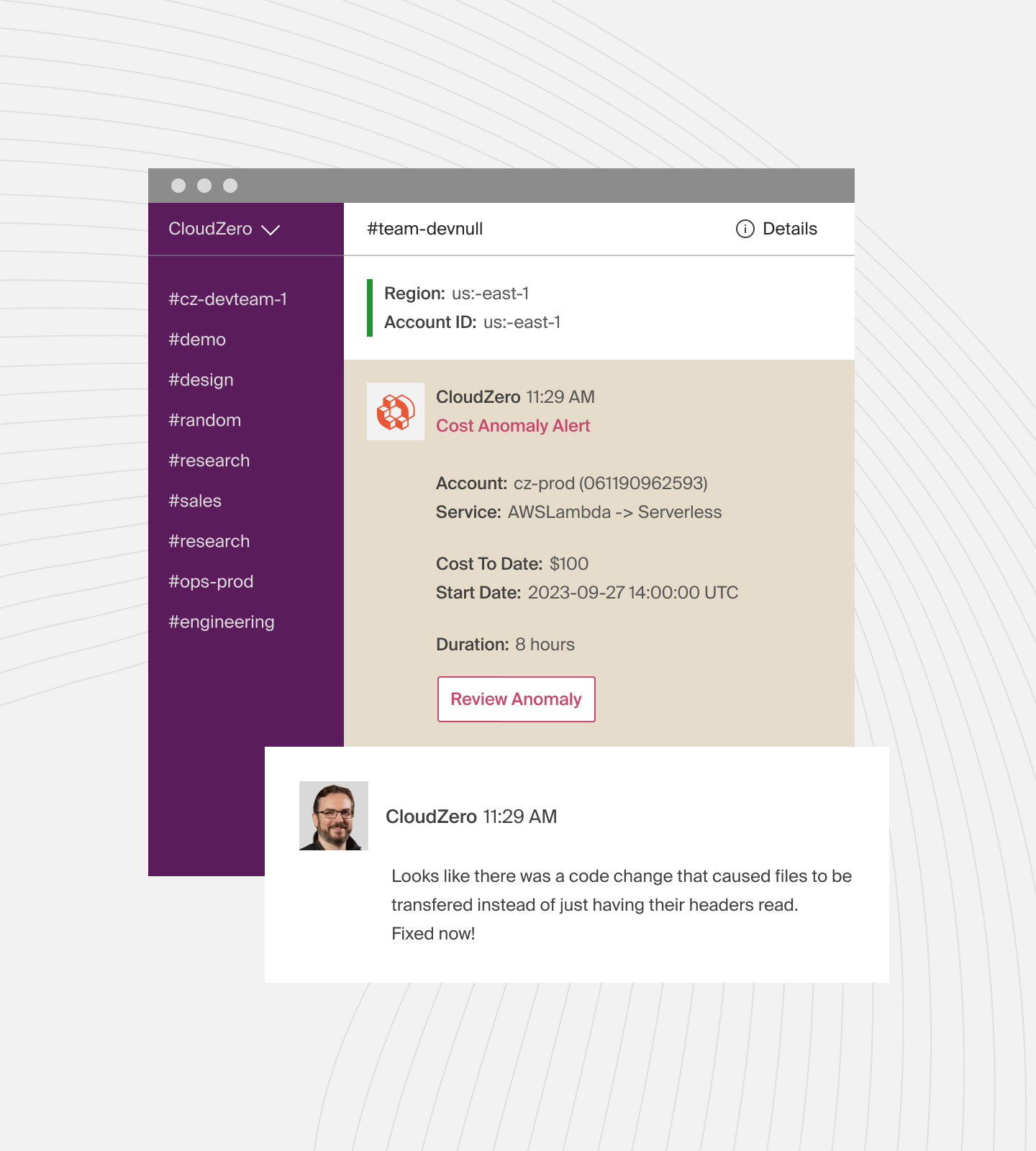

To help you prevent overages, CloudZero provides real-time cost anomaly detection. Noise-free alerts notify you of abnormal cost trends as soon as possible via Slack, email, or your favorite incident management tool.

Plus, CloudZero offers a tiered pricing model that won’t vary month to month. And if you’re looking for a Datadog pricing calculator to estimate your spend before committing, your Datadog account rep can walk you through one.