FinOps is one of the latest buzzwords in cloud computing. The name blends two critical functions, finance and operations, and is short for “cloud financial operations” (also called cloud financial management or cloud cost management).

According to the FinOps Foundation, FinOps is “the practice of bringing financial accountability to the variable spend model of the cloud, enabling distributed teams to make business trade-offs between speed, cost, and quality.”

Unlike traditional on-premises systems, where the cost of developing software products can be traced to physical infrastructure, cloud spending is far more variable and harder to grasp.

Most cloud service providers offer a pay-as-you-go model, and services scale almost infinitely as workloads increase. In addition, multi-tenant systems and containerized infrastructure such as Kubernetes add complexities that obscure cloud costs and make allocation a challenge.

The end result is twofold:

Finance teams cannot understand how money is spent in the cloud.

Engineers and product teams have no idea how their activities impact cloud costs.

This is why FinOps matters — and why it exists. Done right, it can help your business tackle these barriers and begin to understand your cloud costs better. Here are some FinOps best practices to get you started — and help you make the most of every dollar you spend in the cloud.

5 Cloud FinOps Best Practices To Optimize Your Costs

1. Build a team responsible for FinOps

This is by far the most crucial step when adopting a FinOps approach.

Some companies call this team a Cloud Center of Excellence, while others call it the FinOps team. In many organizations, it has no special title at all and may be a cross-functional group whose members contribute part-time.

This group should be a governing body that provides best practices and develops KPIs and metrics to help teams understand the unit economics of the business.

This team usually has a dotted-line reporting relationship to executive leadership. It should include representatives from finance, product, and technology or engineering, depending on how involved each group is with cloud activities.

For example, you may have a finance manager who owns the budget, a cloud owner on the engineering side, and a product owner who manages the products. Together, this team defines the practices that serve as the organization’s guardrails.

2. Improve cloud visibility across your organization

A major challenge when operating in the cloud is that the teams whose activities drive cloud costs often have little insight into those cost drivers. An integral FinOps practice, then, is making cloud costs visible to everyone who works in the cloud, so that each affected team clearly understands how its activities impact spending.

Keep in mind that different groups understand cloud costs differently, so it’s important to present cost information in terms each group understands.

For example, finance may be interested in how cloud costs compare to the forecast. Engineers and developers, on the other hand, want to see how much it costs to create an architecture or product feature and how changes to that architecture impact cloud costs.

For them, it is critical to answer questions like “If we scale up compute or memory, how will that impact cloud costs?” The product team, meanwhile, wants to know how new customer contracts or an expanded product scope will affect cloud costs.
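Questions like this often come down to simple arithmetic. The minimal Python sketch below estimates how a monthly compute bill changes when a fleet is scaled up. The instance names, hourly rates, and the 730-hours-per-month approximation are hypothetical placeholders, not real provider prices.

```python
# Hypothetical on-demand hourly rates (USD); real prices vary by provider,
# region, and instance type, so look them up rather than reusing these.
HOURLY_RATE = {"small": 0.10, "large": 0.40}
HOURS_PER_MONTH = 730  # common approximation of hours in a month


def monthly_compute_cost(instance_type: str, count: int) -> float:
    """Estimated monthly cost of running `count` instances around the clock."""
    return HOURLY_RATE[instance_type] * count * HOURS_PER_MONTH


def scaling_delta(current: tuple[str, int], proposed: tuple[str, int]) -> float:
    """Change in monthly cost when moving from the current to the proposed fleet."""
    return monthly_compute_cost(*proposed) - monthly_compute_cost(*current)


if __name__ == "__main__":
    # What if we moved 4 small instances to 4 large ones (more compute and memory)?
    delta = scaling_delta(("small", 4), ("large", 4))
    print(f"Estimated change: ${delta:,.2f} per month")
```

Swapping in your provider's published prices turns the same calculation into a rough first answer; it deliberately ignores storage, data transfer, and discounts.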

The first step to improving visibility is usually cost allocation, which can be challenging if you have untagged or inconsistently tagged infrastructure. If you’re dealing with untagged, untaggable, inconsistent, containerized, or shared costs, or anything else that prevents accurate visibility, CloudZero takes a code-driven approach to organizing cloud spend that can help you achieve immediate visibility.

Once visibility is established, the next step is ensuring that the different groups communicate using the same numbers. This brings us to the next point.
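To make the cost-allocation step concrete, here is a minimal Python sketch that sums spend per owning team from tagged billing line items. The line-item format and the `team` tag key are assumptions for illustration; real billing exports use different field names. The sketch normalizes inconsistent tag-key casing and keeps untagged spend in an explicit `unallocated` bucket instead of silently dropping it.

```python
from collections import defaultdict

# Hypothetical billing line items; real exports (for example, AWS Cost and
# Usage Reports) have many more columns and different field names.
LINE_ITEMS = [
    {"service": "compute", "cost": 1200.0, "tags": {"team": "checkout"}},
    {"service": "storage", "cost": 300.0, "tags": {"team": "search"}},
    {"service": "compute", "cost": 450.0, "tags": {"Team": "checkout"}},  # inconsistent key casing
    {"service": "network", "cost": 250.0, "tags": {}},  # untagged
]


def allocate_by_tag(items, tag_key="team"):
    """Sum cost per tag value, matching the tag key case-insensitively.

    Items without the tag land in an "unallocated" bucket so the gap stays visible.
    """
    totals = defaultdict(float)
    for item in items:
        normalized = {k.lower(): v for k, v in item["tags"].items()}
        owner = normalized.get(tag_key.lower(), "unallocated")
        totals[owner] += item["cost"]
    return dict(totals)


if __name__ == "__main__":
    for owner, cost in sorted(allocate_by_tag(LINE_ITEMS).items()):
        print(f"{owner:12} ${cost:,.2f}")
```

Tracking the size of the `unallocated` bucket over time is one simple way to measure whether tagging hygiene is improving.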

3. Create a single source of truth

Establish a single go-to place for looking at your cloud costs. When teams start with AWS, the default tool is usually AWS Cost Explorer. While this is a good place to start, it has limitations: the console groups costs by only one dimension at a time, which makes multi-dimensional analysis difficult. It’s also not the easiest tool to use, and it takes effort to interpret the data it generates.

Larger businesses may use three to four different tools for managing cloud costs, with each team using a different tool. This creates a problem because each team looks at costs differently, with inconsistent numbers. This is where a solution like CloudZero comes in.

CloudZero provides a centralized cost intelligence platform that gives each team the information it needs to make decisions. With CloudZero, engineering, finance, and product teams can all view costs through the unique lens of their role. This breaks down the silos around cloud costs and creates transparency and an enabling environment for more productive conversations.

4. Leverage cost savings and waste reduction strategies

Once you have the three practices above in place, go after low-hanging fruit such as Reserved Instances (RIs) and Savings Plans to optimize costs. Depending on the services you use, you could also consider private pricing deals.
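Reserved Instances and Savings Plans trade a commitment for a discount, so the savings depend on how much of the commitment you actually use. The minimal Python sketch below models a simplified, hour-based commitment. The rate, discount, and usage figures are hypothetical, and real pricing terms (term length, payment options, and how Savings Plans apply a dollar-per-hour commitment) differ by provider and plan.

```python
HOURS_PER_MONTH = 730  # common approximation of hours in a month


def monthly_cost_with_commitment(on_demand_rate: float, usage_hours: float,
                                 committed_hours: float, discount: float) -> float:
    """Monthly cost when `committed_hours` are bought at a discount.

    Committed hours are paid for whether or not they are used; any usage above
    the commitment is billed at the on-demand rate. `discount` is a fraction
    (0.3 means 30% off on-demand). This is a simplified, hypothetical model.
    """
    committed_cost = committed_hours * on_demand_rate * (1 - discount)
    overflow_hours = max(usage_hours - committed_hours, 0)
    return committed_cost + overflow_hours * on_demand_rate


if __name__ == "__main__":
    rate, discount = 0.10, 0.30       # hypothetical: $0.10/hour, 30% off
    usage = 10 * HOURS_PER_MONTH      # 10 instances running all month
    for instances in (0, 6, 12):      # 0 means pure on-demand
        cost = monthly_cost_with_commitment(rate, usage, instances * HOURS_PER_MONTH, discount)
        print(f"commit {instances:2} instances: ${cost:,.2f}/month")
```

With these made-up numbers, committing to 6 instances of steady baseline usage cuts the bill from about $730 to about $599, while over-committing to 12 instances (more than you run) still beats on-demand but saves less, at about $613. That is why commitments are usually sized to baseline usage.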

Next, look for ways to reduce waste. For example, remove legacy resources that are no longer in use. A storage bucket that costs $100 per month and hasn’t been used in four years costs $1,200 per year, or $4,800 over those four years. While this might seem insignificant for a multimillion-dollar business, many such forgotten resources add up to a significant expense.
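Finding forgotten resources like that bucket is largely a matter of comparing last-use dates against billing data. The Python sketch below flags resources idle for at least a threshold number of months and estimates what they cost while idle. The inventory records, field names, and three-month threshold are hypothetical; in practice, last-use information would come from your provider's access logs or usage metrics.

```python
from datetime import date

# Hypothetical inventory; in practice, last-use dates come from access logs or
# usage metrics, and monthly costs from your billing data.
RESOURCES = [
    {"name": "legacy-reports-bucket", "monthly_cost": 100.0, "last_used": date(2020, 1, 15)},
    {"name": "active-assets-bucket", "monthly_cost": 400.0, "last_used": date(2024, 5, 30)},
]


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end (ignores the day of month)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def find_idle(resources, today: date, min_idle_months: int = 3):
    """Return (name, months idle, cost accrued while idle) for stale resources."""
    report = []
    for r in resources:
        idle = months_between(r["last_used"], today)
        if idle >= min_idle_months:
            report.append((r["name"], idle, idle * r["monthly_cost"]))
    return report


if __name__ == "__main__":
    for name, months, wasted in find_idle(RESOURCES, date.today()):
        print(f"{name}: idle {months} months, about ${wasted:,.2f} spent while idle")
```

A report like this is a starting point for review, not an automatic delete list; an unused resource may still be needed for compliance or disaster recovery.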

The last option for cost savings is rearchitecting your application. Many companies do a lift and shift when moving to the cloud. But once you’ve established a central governing body that provides best practices and you have cost visibility, rearchitecting your application to adopt native AWS services is an important step that can unlock even more savings.

5. Measure unit cost to understand cloud efficiency

The cloud is supposed to fuel innovation, so cutting costs purely for the sake of saving money likely isn’t a good use of your engineering team’s time. Likewise, rising cloud spending isn’t necessarily bad when your business grows and adds new features. What matters is whether you’re using the cloud efficiently in the context of your business.

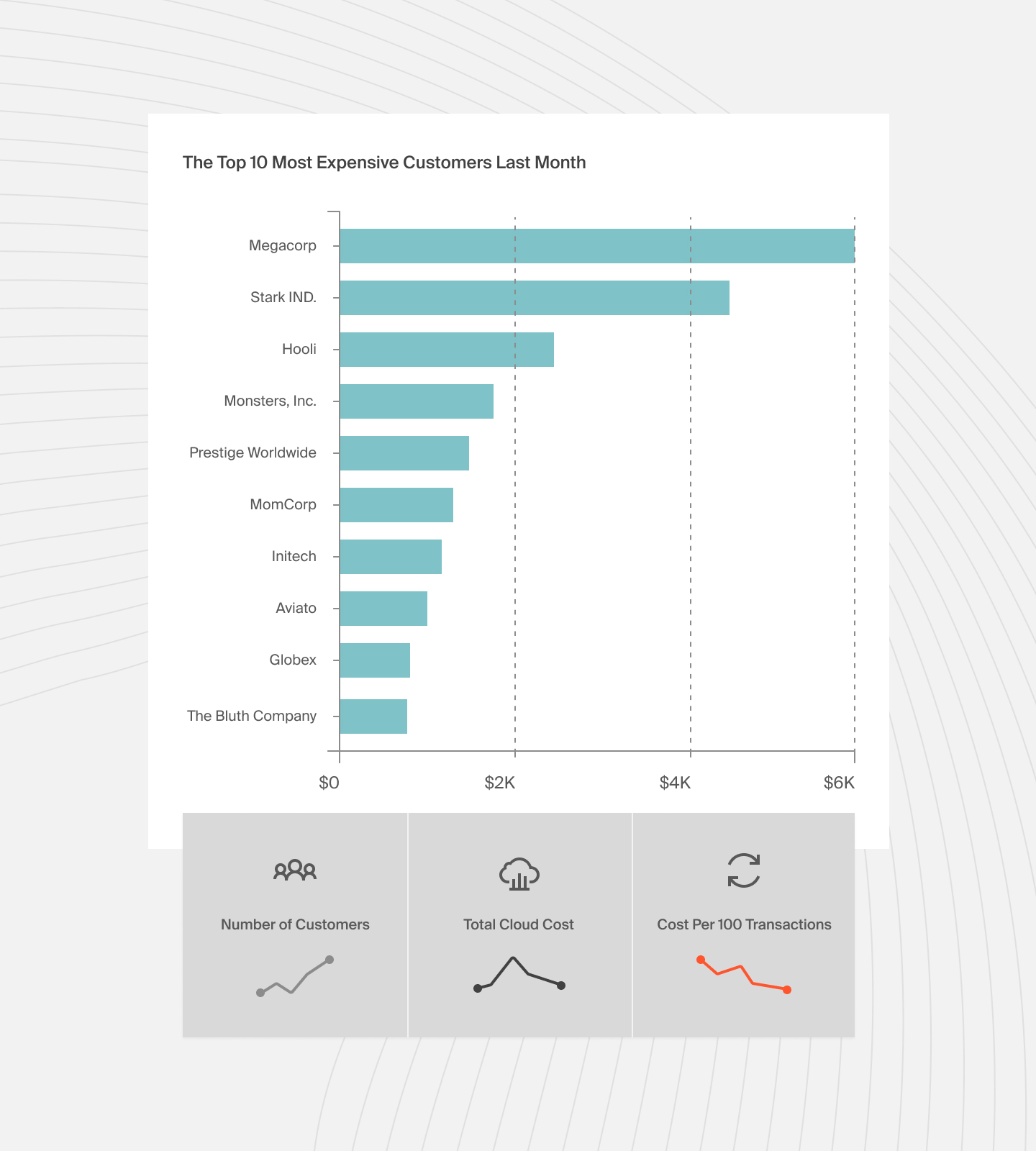

Unit cost puts cost into the context of your growing and changing business. For example, instead of focusing on your overall costs going up 10%, you can measure your average cost per customer, user session, transaction, etc. It’s usually a good idea to start with a single metric representing your business. From there, you can get more granular and advanced and track unit costs for different products, market segments, and more.
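The arithmetic behind that example is straightforward. The Python sketch below uses hypothetical figures in which total spend rises 10% while the customer count rises 25%, so the cost per customer actually falls. Customers are just one possible unit; the same functions work for user sessions or transactions.

```python
def unit_cost(total_cost: float, units: int) -> float:
    """Average cloud cost per unit of business activity (customer, session, transaction)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


if __name__ == "__main__":
    # Hypothetical figures: spend rises 10% while the customer count rises 25%.
    last_q = unit_cost(100_000.0, 500)  # $200 per customer
    this_q = unit_cost(110_000.0, 625)  # $176 per customer
    print(f"Total spend change: {percent_change(100_000.0, 110_000.0):+.1f}%")
    print(f"Cost per customer change: {percent_change(last_q, this_q):+.1f}%")
```

Read together, the two numbers tell a different story than total spend alone: the bill grew, but each customer became 12% cheaper to serve.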

If you’re looking for a single metric to measure your business against, try the cloud efficiency metric.

Get Started With CloudZero

These FinOps best practices can help lower cloud costs and increase profitability. Use CloudZero, a cost intelligence platform, to streamline cloud cost management and start your FinOps journey.

to learn how CloudZero empowers you to build an effective FinOps program.
