This month, two FinOps research reports landed close together. One is the FinOps Foundation's sixth annual State of FinOps, which draws on a broad global practitioner community. The other is CloudZero's FinOps in the AI Era: A Critical Recalibration, built on responses from 475 senior leaders at cloud-mature, AI-active organizations, with a focused lens on how AI is reshaping cloud cost management.

Read each alone, and you get a useful snapshot for your business. Read them together, and you get a multidimensional picture of a discipline that’s expanding its scope at exactly the moment its core metrics are under stress.

Where Both Reports Agree: AI Changed The Operating Environment

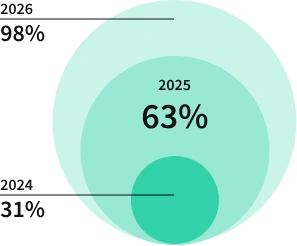

Both reports show AI has moved from an emerging concern to a mainstream FinOps reality, and fast. The FinOps Foundation documents that 98% of practitioners now manage AI spend, up from 31% just two years ago.

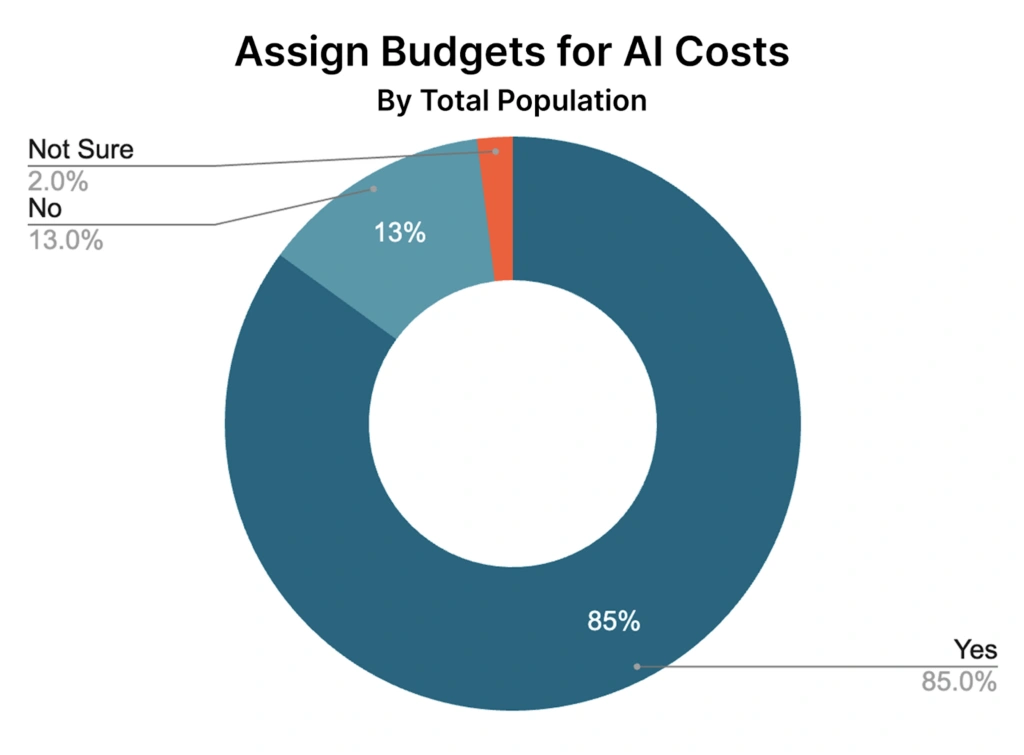

Meanwhile, our own data shows 85% of organizations now have formal AI budgets in place. Two out of five spend $10M or more annually on AI alone, which approaches the 47% that spend that much on cloud overall.

Both reports also confirm that allocation is the hardest unsolved problem. The Foundation lists it as the top capability priority across SaaS, data center, licensing, and data cloud platforms.

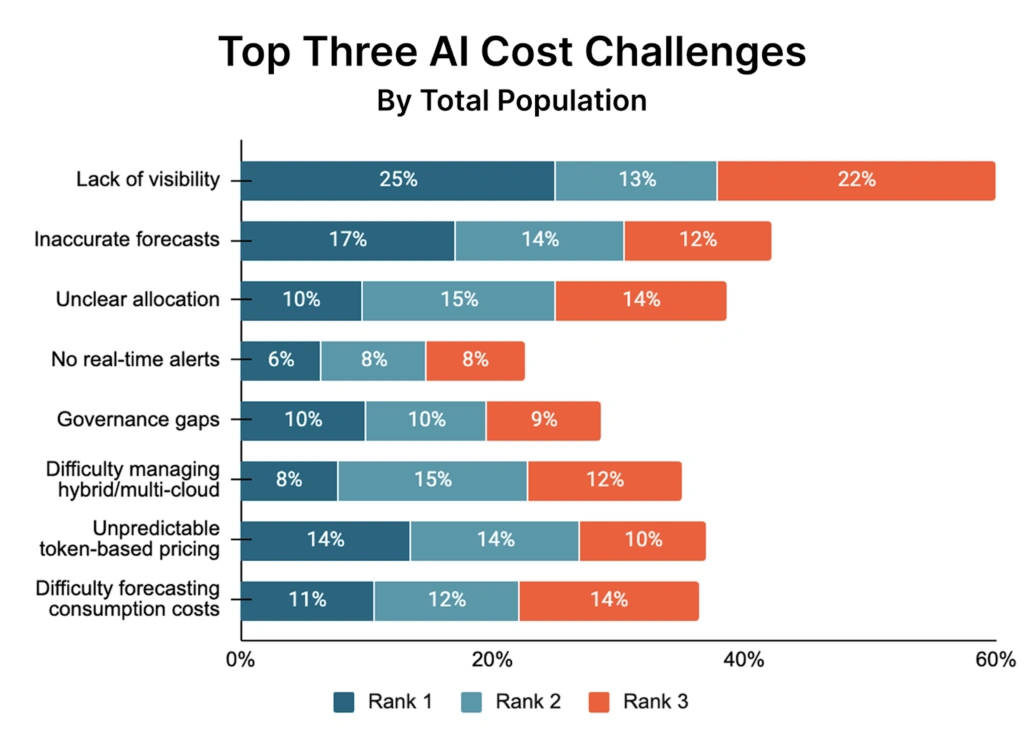

Our study found that visibility tops the AI cost challenge list — 60% ranked it in their top three problems, with 25% naming it their single biggest challenge.

And both confirm that FinOps teams are being asked to absorb more complexity with roughly the same headcount. The Foundation notes that organizations managing $100M+ operate with an average of 8–10 practitioners. Our data reflects the same lean-team reality against a rapidly expanding scope.

These are two different surveys with different methodologies, but the signals are similar: AI is not just one more thing to manage. It disrupts the existing systems that FinOps was built around.

They See Expansion, We See Stress

The State of FinOps 2026 is, by design and mission, a forward-looking industry document. Its findings are largely optimistic. It reveals that the scope of FinOps is expanding across SaaS, licensing, data center, and AI.

It tells us that executive alignment is improving. Nearly four out of five (78%) FinOps functions now report to the CTO or CIO. Even the Foundation has revised its mission statement to reflect this growth, changing “the value of cloud” to “the value of technology.” The overall framing is one of a maturing, increasingly influential discipline.

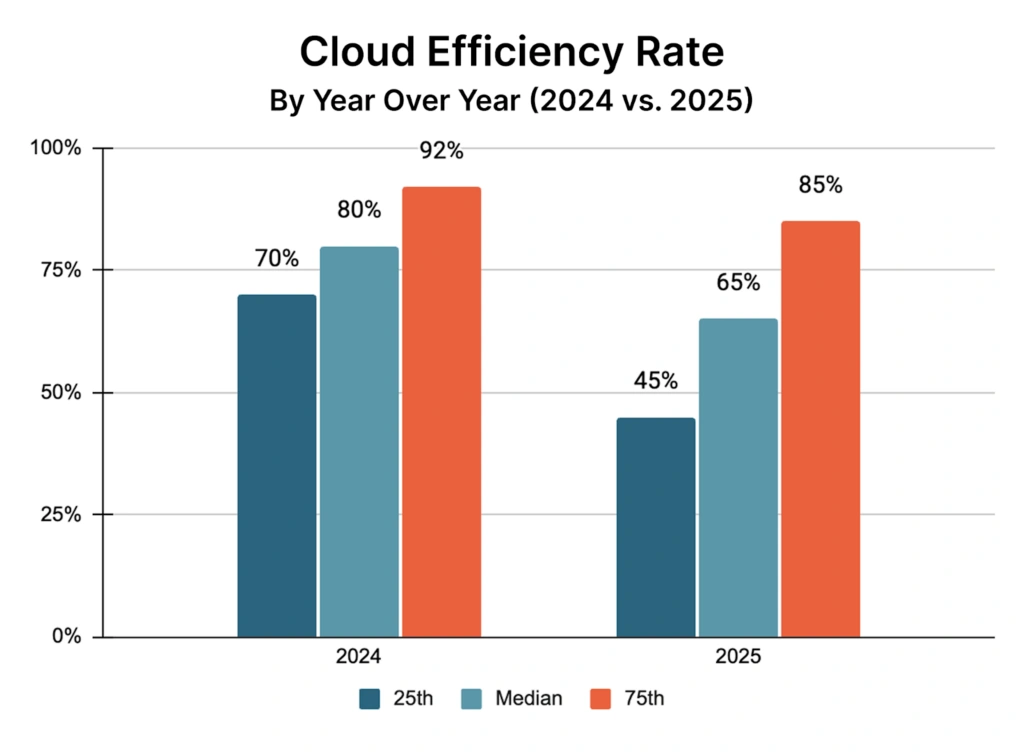

Our study tells a more complicated version of that story. We found a year-over-year drop in the Cloud Efficiency Rate (CER), calculated as (Revenue – Cloud Costs) / Revenue, across every segment and every quartile. The median fell from 80% to 65%. The 25th percentile dropped from 70% to 45%. Even top performers slipped, from 92% to 85%.
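To make the formula concrete, here is a minimal Python sketch of the calculation. The function name and the dollar figures are hypothetical, chosen only so the result lines up with the median figures above; they are not taken from the survey data.

```python
def cloud_efficiency_rate(revenue: float, cloud_costs: float) -> float:
    """Return CER as a fraction: (revenue - cloud costs) / revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cloud_costs) / revenue


# Hypothetical company: revenue stays flat at $10M while
# cloud costs rise from $2M to $3.5M year over year.
cer_before = cloud_efficiency_rate(10_000_000, 2_000_000)
cer_after = cloud_efficiency_rate(10_000_000, 3_500_000)

print(f"CER: {cer_before:.0%} -> {cer_after:.0%}")
```

In this illustration, the rate falls 15 points with no change in revenue, purely because cloud costs take a larger share of it.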

That’s a significant drop, and it didn’t happen in isolation.

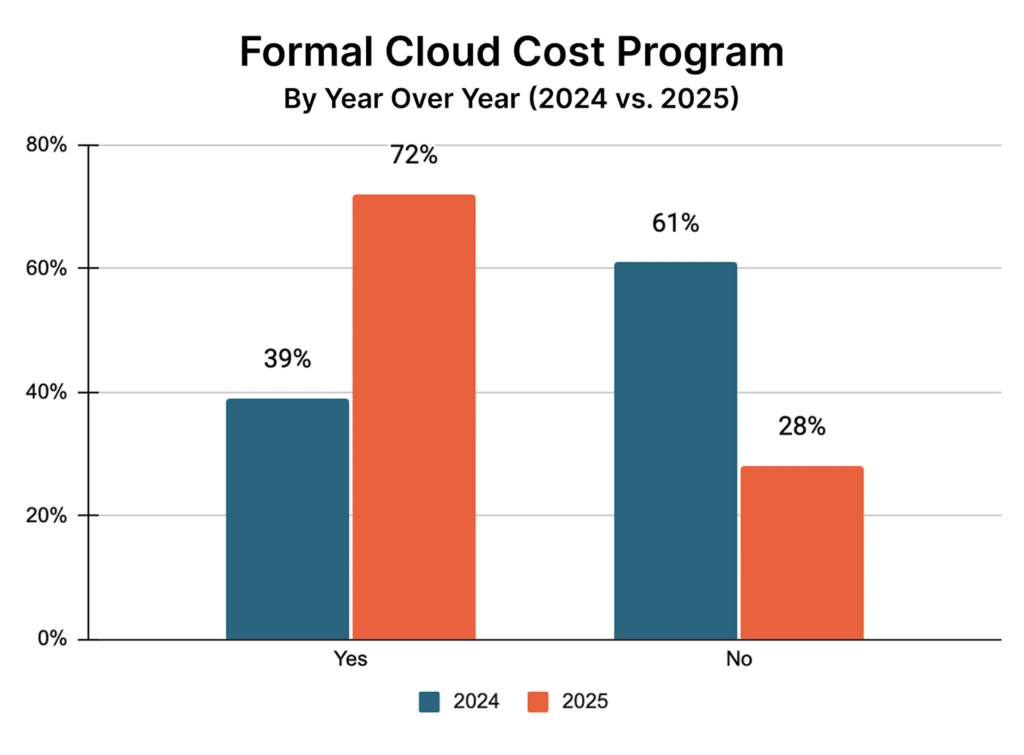

Over the same period, our study found that formal FinOps programs nearly doubled (39% to 72%). Budget assignment climbed to 87%. Chargeback adoption jumped from 45% to 64%. FinOps functions now exist at 80% of organizations.

The maturity indicators went up. The efficiency metric went down.

The Foundation measures FinOps inputs — what teams are doing, how they’re structured, what they’re prioritizing. It doesn’t measure output. Ours does, which is how you catch a paradox that input-focused design misses. Worth noting: the Foundation’s own practitioners are signaling diminishing returns on traditional optimization, with one noting they’ve “hit the big rocks of waste.” Our efficiency data shows the outcome of that plateau — and what’s filling the gap.

AI introduced variables the existing framework wasn’t built to handle: token volume, model selection, prompt behavior, inference patterns. The playbook has to evolve.

What CloudZero’s Report Adds

Beyond the efficiency metric, three findings from our survey build on what the Foundation report describes.

1. The lumping problem

The Foundation identifies allocation as the top capability gap. What our data adds is the most common root cause: organizations aren’t separating AI costs from cloud costs at all. We found that 78% of organizations fold AI spend into overall cloud expenses, not as a distinct line item but merged into infrastructure totals.

This leads to a structural visibility problem. When AI spend is buried in compute and storage bills, teams can’t readily understand, allocate, or optimize it.
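As a rough illustration of what un-lumping can look like, the sketch below splits billing line items into AI and non-AI totals. Everything in it is an assumption for the sake of the example: the record layout, the `workload` tag, and the `ai-` prefix convention are hypothetical, not a format used by any particular provider or by our study.

```python
from collections import defaultdict

# Hypothetical billing line items; field names and tag values are illustrative only.
line_items = [
    {"service": "compute", "cost": 1200.0, "tags": {"workload": "web"}},
    {"service": "compute", "cost": 3400.0, "tags": {"workload": "ai-training"}},
    {"service": "storage", "cost": 500.0, "tags": {}},
    {"service": "model-api", "cost": 900.0, "tags": {"workload": "ai-inference"}},
]


def split_ai_spend(items, ai_prefix="ai-"):
    """Sum cost into 'ai' and 'non_ai' buckets based on an assumed workload tag."""
    totals = defaultdict(float)
    for item in items:
        workload = item["tags"].get("workload", "")
        bucket = "ai" if workload.startswith(ai_prefix) else "non_ai"
        totals[bucket] += item["cost"]
    return dict(totals)


totals = split_ai_spend(line_items)
print(totals)
```

Note that the untagged storage item falls into the non-AI bucket by default, which is exactly how AI spend ends up hidden when tagging is incomplete.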

2. The metrics weren’t built for AI economics

The Foundation acknowledges that AI value quantification is unsolved, and our data points to a structural reason: the measurement frameworks were designed for a different cost model entirely. AI introduces a cost category those frameworks never accounted for: the training layer.

For instance, an ML-driven platform spending millions on model simulation and training isn’t wasting money; it’s building the capabilities that make production work. This means a 55% Cloud Efficiency Rate may reflect disciplined AI investment rather than increased operational waste.

The limitation is that the CER metric can’t tell you which is which. Until FinOps develops AI-native measurement frameworks, efficiency numbers will look worse than the underlying reality, and optimization and business decisions will be made against the wrong baseline.

3. The pricing paradox

Here’s where the two reports diverge most sharply: 84% of organizations are already pricing AI costs into their products. Cost-plus is the dominant model — in other words, AI spend flows directly into what customers pay.

On the surface, that looks like financial discipline. But pair it with the forecasting data and it becomes something else entirely.

Only one in five organizations forecast AI spend within ±10% of actual. One in five miss by 50% or more.
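For readers who want to check where their own forecasts fall, here is a small sketch that classifies a forecast against the two thresholds above. It assumes error is measured as the absolute difference relative to actual spend; the survey's exact definition may differ, and the function names and sample figures are hypothetical.

```python
def forecast_error(forecast: float, actual: float) -> float:
    """Absolute forecast error as a fraction of actual spend (assumed definition)."""
    if actual <= 0:
        raise ValueError("actual spend must be positive")
    return abs(forecast - actual) / actual


def accuracy_bucket(forecast: float, actual: float) -> str:
    """Place a forecast into one of the bands discussed in the text."""
    err = forecast_error(forecast, actual)
    if err <= 0.10:
        return "within 10%"
    if err >= 0.50:
        return "miss by 50% or more"
    return "in between"


# Hypothetical forecasts against $1M of actual AI spend.
for forecast in (950_000, 700_000, 400_000):
    print(forecast, accuracy_bucket(forecast, 1_000_000))
```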

Cost-plus doesn’t eliminate forecasting risk. It redistributes it, and where it lands depends on how the model is structured.

In a pure usage-based model, customers pay based on their own consumption, so spend variability follows them. But when AI costs are volatile and unpredictable, customers absorb the bill shock. And that’s a churn risk.

In a blended model, where a company sets an average AI cost per seat or per feature and holds that rate, the company absorbs the gap between estimated and actual costs every time AI spend runs hotter than the blended rate.

That margin impact lands silently — with no direct line back to the forecasting miss that caused it. And in any model that requires infrastructure commitments, bad forecasting still hurts procurement efficiency and negotiating leverage, regardless of what the customer invoice looks like.
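The two routes can be sketched with simple arithmetic. The functions, rates, and volumes below are hypothetical and deliberately simplified: a flat unit cost, a fixed markup, and a single billing period. They are meant only to show where the forecasting miss lands in each model.

```python
def usage_based_bill(units_used: float, unit_cost: float, markup: float) -> float:
    """Customer invoice under pure usage-based cost-plus pricing."""
    return units_used * unit_cost * (1 + markup)


def blended_margin_gap(seats: int, blended_cost_per_seat: float,
                       actual_ai_cost: float) -> float:
    """AI cost the vendor absorbs when actual spend exceeds the blended estimate."""
    estimated = seats * blended_cost_per_seat
    return max(0.0, actual_ai_cost - estimated)


# Usage-based: the customer expected 1,000 units but consumed 1,500.
expected_bill = usage_based_bill(1_000, 2.0, 0.25)
actual_bill = usage_based_bill(1_500, 2.0, 0.25)

# Blended: 1,000 seats priced on an assumed $5 of AI cost each,
# while actual AI spend for the period came in at $6,500.
gap = blended_margin_gap(1_000, 5.0, 6_500.0)

print(f"Customer bill: ${expected_bill:,.0f} expected, ${actual_bill:,.0f} actual")
print(f"Vendor-absorbed gap under blended pricing: ${gap:,.0f}")
```

In the first case the 50% overrun shows up on the customer's invoice; in the second, the $1,500 shortfall comes straight out of the vendor's margin.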

There’s no version of cost-plus pricing where poor forecasting is consequence-free. The damage route depends on how the model is structured — but when most organizations can’t forecast AI spend within 25% of actuals, the exposure is real in all directions: margin erosion, customer bill shock, or both.

The Foundation flags forecasting as a top priority, whereas our data shows what that gap is currently costing organizations that are already pricing AI into their products.

A Discipline In A Critical Recalibration

The Foundation report describes a practice growing in mandate, gaining executive visibility, and taking on an increasingly strategic role in technology value decisions. That’s accurate. FinOps is no longer just explaining past spend — it’s beginning to shape future technology commitments before they’re made.

Our data adds the harder truth underneath that progress: the core efficiency metric is declining, the measurement framework hasn’t kept pace with AI economics, and the most common FinOps failure point — poor cost allocation — has its roots upstream of where most teams are looking. Fewer organizations even attempted to calculate CER this year (41%, down from 47%), suggesting practitioners themselves are beginning to question whether existing metrics still work.

Together, they describe a discipline that is genuinely growing in influence while simultaneously navigating a recalibration in its foundational effectiveness metrics — a recalibration triggered by AI cost complexity that arrived faster than the tools, frameworks, and team structures needed to absorb it.

This isn’t a crisis. FinOps solved cloud cost chaos over the course of a decade. It will solve AI cost complexity too. But the first step is an accurate diagnosis: the maturity is real, the efficiency pressure is real, and the two can coexist in the same organization at the same time.

That’s where FinOps actually stands heading into 2026. Not broken. Recalibrating.