In the traditional finance world, budget variance was a static comparison between actual and budgeted spend. But in the cloud era, where costs scale with usage, experimentation, and engineering decisions, variance tells a much richer story.

Done right, budget variance helps you distinguish between healthy growth and margin erosion. It can signal strong feature adoption, rising customer demand, or successful launches. It can also reveal waste, inefficiencies, and weak cost controls.

That’s why high-performing SaaS companies treat budget variance as a core FinOps KPI. In this guide, we’ll explain what budget variance really means in the cloud, how to analyze and interpret it, and how to turn surprises into business value.

What Is Budget Variance?

Budget variance is the difference between planned spend (budgeted) and actual spend over a given period.

Budget Variance = Actual Spend − Budgeted Spend

If the difference is positive, you spent more than expected (unfavorable variance). If it is negative, you spent less (favorable variance). In traditional finance, that sign is usually where the analysis ends: it tells you whether spending came in over or under plan.
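As a worked example with hypothetical figures: a team that budgeted $100,000 for the month and actually spent $112,000 has a positive, unfavorable variance. Dividing by the budget (a common convention, not part of the formula above) turns it into the kind of “12% over budget” figure a finance report might show:

$$
\text{Budget Variance} = \$112{,}000 - \$100{,}000 = +\$12{,}000
$$

$$
\text{Percent Variance} = \frac{\text{Actual Spend} - \text{Budgeted Spend}}{\text{Budgeted Spend}} \times 100 = \frac{\$12{,}000}{\$100{,}000} \times 100 = +12\%
$$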

But in the cloud era, that definition doesn’t tell the whole story because costs aren’t fixed. Cloud spend is elastic, driven by factors such as:

- Usage (more customers, more data, more API calls)

- Architecture choices, such as autoscaling, serverless, Kubernetes, and AI inference workloads

- Engineering velocity, including new feature deployments, testing environments, and data replication

- Business events, such as product launches, new enterprise contracts, and seasonal surges

A budget variance of +20% could mean your new AI-based feature gained more adoption than you expected (good variance). Or it could mean that a Kubernetes job got stuck and scaled wastefully for 12 hours straight (bad variance).
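One way to tell these two cases apart, borrowed from standard cost accounting, is to split the variance into a volume component (more usage at the planned unit cost) and a rate component (a higher cost per unit of usage). The minimal sketch below assumes you can measure a usage driver, such as thousands of inference calls, alongside spend; the `split_variance` helper and all figures are hypothetical. Both scenarios produce the same +20% variance, but adoption shows up as volume while the runaway job shows up as rate:

```python
from dataclasses import dataclass


@dataclass
class VarianceSplit:
    total: float   # actual spend minus budgeted spend
    volume: float  # part explained by more (or less) usage at the planned unit cost
    rate: float    # part explained by a higher (or lower) cost per unit of usage


def split_variance(budgeted_usage: float, budgeted_unit_cost: float,
                   actual_usage: float, actual_spend: float) -> VarianceSplit:
    """Split total variance into volume and rate parts (volume + rate == total)."""
    if actual_usage <= 0:
        raise ValueError("actual_usage must be positive to compute a unit cost")
    budgeted_spend = budgeted_usage * budgeted_unit_cost
    actual_unit_cost = actual_spend / actual_usage
    volume = (actual_usage - budgeted_usage) * budgeted_unit_cost
    rate = (actual_unit_cost - budgeted_unit_cost) * actual_usage
    return VarianceSplit(total=actual_spend - budgeted_spend, volume=volume, rate=rate)


# Hypothetical plan: 1,000 thousand inference calls at $10 per thousand = $10,000.
adoption = split_variance(1_000, 10.0, actual_usage=1_200, actual_spend=12_000)
runaway = split_variance(1_000, 10.0, actual_usage=1_000, actual_spend=12_000)
print(adoption)  # VarianceSplit(total=2000.0, volume=2000.0, rate=0.0)
print(runaway)   # VarianceSplit(total=2000.0, volume=0.0, rate=2000.0)
```

A variance that is mostly volume points toward growth; one that is mostly rate points toward inefficiency, though either still needs the context described below.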

“Variance in SaaS budgets isn’t good or bad by default. It needs context. It’s less about how far off you were and more about what’s driving that difference, and whether it’s valuable or wasteful.”

A monthly finance email saying “We’re 12% over budget” doesn’t help engineering or your product teams understand why, or what they need to do next.

In FinOps, budget variance is valuable only when analyzed with context, like:

- Which team, product, feature, or environment caused the variance?

- Is it tied to business value (growth, adoption) or inefficiency (waste, misconfiguration)?

- Was the variance predictable (forecast-driven) or a surprise (needs investigation)?

- Is it a one-time event, recurring pattern, or growing trend?

- Should we optimize, refactor, invest more, or sunset the root cause (for example, a feature)?

When you answer these questions, variance becomes meaningful. It tells you whether to optimize, invest further, or reshape your architecture. And achieving that starts with understanding what actually drives it.


Why Budget Variance Requires Context In The Cloud

In cloud environments, budget variance rarely maps cleanly to a single decision or team. Costs are shaped by usage growth, architecture choices, deployment velocity, and business events — all happening simultaneously.

Without shared context, variance often becomes a source of confusion or finger-pointing between engineering, finance, and product teams. With the right context, it becomes a signal that guides better decisions: when to optimize, when to invest, and when to reforecast.

Understanding what drives variance is the first step toward using it strategically.

What Are the Root Causes Of Cloud Budget Variance?

In the cloud, budget variance rarely has a single cause. It’s usually the result of a chain of architectural, operational, or financial decisions that quietly ripple into spend.

Additionally, those decisions are made and interpreted by different teams speaking different languages.

Engineering talks tech (deployments, utilization, scaling behavior). Finance speaks, well, finance (budgets, forecasts, margins). Product speaks in features, adoption, contracts, and ROI.

And without a common context, variance becomes a source of confusion, or worse, finger-pointing.

In our experience budgeting at CloudZero, it is crucial to create a shared understanding of what your variance means and how to interpret it in various contexts, so you can take aligned, informed action instead of reacting in silos.

Consider these:

1. Usage-driven variance

These shifts aren’t always “bad.” In fact, they often represent growth, at least when they don’t catch you by surprise. Common examples of business-driven usage growth include:

- New customer onboarding (especially enterprise contracts)

- Feature adoption, like new AI capabilities driving inference costs

- Seasonal or viral traffic spikes

- Increased data retention or analytics requirements

2. Architecture and engineering–driven variance

These are technical, behavior-driven shifts that don’t necessarily create business value but can quietly drain your margins.

| Engineering Issue | What It Looks Like | Impact |
|---|---|---|
| Overprovisioning | Oversized EC2 instances and Kubernetes clusters | Wasted compute spend |
| Idle resources | Forgotten test environments, unused storage, orphaned EBS volumes | Cost without value |
| Inefficient architectures | Chatty microservices, unmanaged data transfer, AI model replication | Spikes in egress and API costs |
| Sudden scaling events | Runaway autoscaling, misconfigured serverless functions or batch jobs | Cost anomalies |
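Sudden scaling events are typically caught by comparing each hour’s spend against a recent baseline. The sketch below uses a simple statistical rule (trailing mean plus a multiple of the standard deviation); it is not how any particular tool detects anomalies, and the window, threshold, `min_sigma` floor, and sample data are all assumptions:

```python
from statistics import mean, stdev


def flag_cost_anomalies(hourly_costs: list[float], window: int = 24,
                        threshold: float = 3.0, min_sigma: float = 1.0) -> list[int]:
    """Return the indexes of hours whose cost is far above the recent baseline.

    The baseline is the last `window` non-anomalous hours. An hour is flagged
    when it exceeds the baseline mean by more than `threshold` standard
    deviations; `min_sigma` keeps a very flat baseline from flagging tiny wobbles.
    """
    flagged: list[int] = []
    baseline: list[float] = []
    for hour, cost in enumerate(hourly_costs):
        if len(baseline) >= window:
            recent = baseline[-window:]
            limit = mean(recent) + threshold * max(stdev(recent), min_sigma)
            if cost > limit:
                flagged.append(hour)
                continue  # keep anomalous hours out of the baseline
        baseline.append(cost)
    return flagged


# Hypothetical data: two normal days around $40/hour, then a stuck job that
# runs at $160/hour for 12 hours, then a return to normal.
normal_day = [40 + hour % 3 for hour in range(24)]
hourly = normal_day * 2 + [160] * 12 + normal_day[:12]
print(flag_cost_anomalies(hourly))  # [48, 49, ..., 59]: the 12 runaway hours
```

Excluding flagged hours from the baseline matters here: otherwise a long-running spike would gradually raise its own threshold and stop being flagged partway through.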

Variance can also stem from process and ownership gaps.

3. Process and ownership gaps

These issues aren’t technical but cultural and process-oriented. Fortunately, they’re also the most common and the most fixable:

- Lack of cost visibility for engineering teams (a.k.a. “cost is finance’s job”)

- No shared accountability (nobody owns the spend)

- No real-time alerts, so teams only learn about variance after the cloud bill arrives

- Poor tagging and weak cloud cost governance, making cost attribution impractical

- Shadow IT resources that quietly raise your Cost of Goods Sold (COGS)
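Weak tagging is straightforward to quantify. Assuming each billing line item carries a cost and a dictionary of tags (a simplification of real billing exports), this sketch measures how much spend is missing at least one tag from a hypothetical required-tag policy. That is spend nobody can be held accountable for:

```python
# Hypothetical tagging policy: every line item should name its team, feature, and environment.
REQUIRED_TAGS = ("team", "feature", "environment")


def unattributed_share(line_items: list[dict]) -> float:
    """Fraction of total spend on line items missing at least one required tag."""
    total = sum(item["cost"] for item in line_items)
    missing = sum(
        item["cost"]
        for item in line_items
        if any(not item.get("tags", {}).get(tag) for tag in REQUIRED_TAGS)
    )
    return missing / total if total else 0.0


# Hypothetical line items, simplified from what a billing export would contain.
line_items = [
    {"cost": 600.0, "tags": {"team": "search", "feature": "autocomplete", "environment": "prod"}},
    {"cost": 300.0, "tags": {"team": "ml", "environment": "staging"}},  # no feature tag
    {"cost": 100.0},  # no tags at all, e.g. a forgotten test resource
]
print(f"{unattributed_share(line_items):.0%} of spend can't be attributed")  # 40% of spend can't be attributed
```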

And then there are budgeting-related issues.

4. Forecasting and budgeting misalignment

Sometimes, variance is the result of outdated or rigid financial modeling, such as:

- Budgets based on static models that don’t account for cloud elasticity (that is, variable costs)

- Finance being unaware of new engineering projects, such as deployments, AI adoption, or architecture changes

- Teams forgetting to factor in data growth, seasonality, or multi-environment scaling

- Commitments like Savings Plans, Reserved Instances (RIs), and Committed Use Discounts (CUDs) not being sized correctly
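Commitment sizing can be checked with two ratios: utilization (how much of what you committed to is actually used) and coverage (how much of your eligible usage the commitment pays for). Low utilization suggests over-commitment; low coverage suggests you are paying on-demand rates for usage you could have committed. This sketch assumes a flat hourly commitment and usage already expressed in committed-rate dollars, which glosses over how real Savings Plans, RIs, and CUDs apply discounts:

```python
def commitment_metrics(hourly_commitment: float,
                       hourly_eligible_usage: list[float]) -> tuple[float, float]:
    """Return (utilization, coverage) for a flat hourly spend commitment.

    Simplification: usage is expressed in the same committed-rate dollars as the
    commitment, and any usage above the commitment is billed on demand.
    """
    # Each hour, the commitment absorbs usage up to its size; the rest goes unused.
    used = sum(min(usage, hourly_commitment) for usage in hourly_eligible_usage)
    purchased = hourly_commitment * len(hourly_eligible_usage)
    total_usage = sum(hourly_eligible_usage)
    utilization = used / purchased if purchased else 0.0
    coverage = used / total_usage if total_usage else 0.0
    return utilization, coverage


# Hypothetical $10/hour commitment measured over four hours.
over = commitment_metrics(10.0, [6.0, 6.0, 8.0, 8.0])
under = commitment_metrics(10.0, [20.0, 20.0, 20.0, 20.0])
print(f"over-committed:  utilization {over[0]:.0%}, coverage {over[1]:.0%}")    # 70%, 100%
print(f"under-committed: utilization {under[0]:.0%}, coverage {under[1]:.0%}")  # 100%, 50%
```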

But you’re not here just to see why variance happens. You’re here to learn how to control it, interpret it, and turn it into value.

Let’s do that.

How To Do a Budget Variance Analysis For SaaS And Cloud Businesses

To get real value from a cloud budget variance analysis, you need to see what caused the change, who or what drove it, and whether it created value or waste. That means going beyond static numbers and turning variance into insight.

Here’s how to do that in a way that’s actually useful to engineering, finance, product, and leadership. It’s the same approach we used at CloudZero to uncover over $1.7 million in savings from our own infrastructure.

And it’s one you can adopt, too. Here’s how.

1. Start with the basic variance calculation

Begin with the traditional comparison between budgeted and actual spend, but don’t treat it as the conclusion. Treat that number as a signal: a flag that says “dig deeper.”
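Treating the number as a flag is easy to automate. A minimal sketch, assuming budget and actual spend are available as simple per-line dictionaries (the line names, figures, and 10% threshold are hypothetical): it flags lines whose variance exceeds the threshold in either direction, since an unexpected underspend is also a signal, and it always flags spend that had no budget line at all:

```python
from typing import Optional


def flag_variances(budget: dict[str, float], actual: dict[str, float],
                   threshold_pct: float = 10.0) -> list[tuple[str, float, Optional[float]]]:
    """Return (line, variance, variance_pct) for every line worth digging into.

    Variance is actual minus budgeted, so a positive value means overspend.
    variance_pct is None for spend that had no budget line at all.
    """
    flagged = []
    for line in sorted(budget.keys() | actual.keys()):
        planned = budget.get(line, 0.0)
        spent = actual.get(line, 0.0)
        variance = spent - planned
        if planned == 0:
            # Unbudgeted spend is always worth a look if anything was spent.
            if spent:
                flagged.append((line, variance, None))
            continue
        pct = 100.0 * variance / planned
        # Flag both directions: an unexpected underspend is also a signal.
        if abs(pct) >= threshold_pct:
            flagged.append((line, variance, pct))
    return flagged


# Hypothetical monthly budget and actuals by spend category.
budget = {"compute": 50_000, "storage": 20_000, "data_transfer": 5_000}
actual = {"compute": 56_000, "storage": 20_500, "data_transfer": 3_000, "ai_inference": 4_000}

for line, variance, pct in flag_variances(budget, actual):
    label = "unbudgeted" if pct is None else f"{pct:+.1f}%"
    print(f"{line}: {variance:+,.0f} ({label})")
```

With these figures, compute (+12%), data transfer (-40%), and the unbudgeted AI inference line are flagged, while storage (+2.5%) stays below the threshold.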

2. Attribute variance to meaningful business drivers

Cloud variance becomes actionable only when tied to something business-relevant. Instead of saying:

“Compute costs increased by 12%.”

Aim for insights like:

“Compute costs increased because the AI recommendation engine saw 45% higher inference usage after Customer X onboarded.”

Or: “Storage spend spiked because a staging environment was left running for 11 days, accumulating idle capacity.”

To achieve this precision, map your cloud spend to specific:

- Features or workloads

- Teams or departments

- Customers or contracts

- Environments (prod, staging, QA, dev)

- Business events (launch, migration, API release, marketing campaign)
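Once cost records carry these dimensions, attributing variance becomes a group-and-subtract operation. The sketch below assumes budget and cost rows are plain dictionaries keyed by a dimension such as `feature` (the field names and figures are hypothetical). Spend with no value for the dimension is grouped as `unallocated`, so it stays visible instead of disappearing into the total:

```python
from collections import defaultdict


def variance_by(dimension: str, budget_rows: list[dict],
                cost_rows: list[dict]) -> dict[str, float]:
    """Actual minus budgeted spend for each value of one dimension, such as 'feature'."""
    totals: dict[str, float] = defaultdict(float)
    for row in cost_rows:
        totals[row.get(dimension, "unallocated")] += row["cost"]
    for row in budget_rows:
        totals[row.get(dimension, "unallocated")] -= row["budget"]
    # Largest absolute variance first, so the biggest drivers surface at the top.
    return dict(sorted(totals.items(), key=lambda kv: abs(kv[1]), reverse=True))


budget_rows = [
    {"feature": "recommendations", "budget": 20_000},
    {"feature": "search", "budget": 15_000},
    {"feature": "reporting", "budget": 5_000},
]
cost_rows = [
    {"feature": "recommendations", "cost": 18_000},
    {"feature": "recommendations", "cost": 11_000},
    {"feature": "search", "cost": 14_500},
    {"feature": "reporting", "cost": 5_200},
    {"cost": 1_300},  # no feature tag
]
print(variance_by("feature", budget_rows, cost_rows))
# {'recommendations': 9000.0, 'unallocated': 1300.0, 'search': -500.0, 'reporting': 200.0}
```

The same function works for any dimension that appears on both budget and cost rows, such as team or environment.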

Now, you might be thinking, “Most cost tools don’t support this level of cloud cost visibility, though.”

Well, that’s exactly where CloudZero stands out.

CloudZero doesn’t just allocate 100% of your cloud spend. It also maps it to the people, products, and processes that drive it, down to the hour. And because it updates continuously (not in 24-hour batches), you see cost trends in real time.

That means you can quickly determine:

- When your spend is healthy and aligned with growth

- When it’s higher than planned but business-justified

- When it’s pure waste and needs intervention immediately


This is how you move from “What happened?” to “Why did it happen, and was it worth it?”

3. Go beyond totals or averages

Totals and averages create the Peanut Butter Budget Effect, where costs are spread “evenly” across products, customers, or teams, just like peanut butter on toast. It looks neat and balanced, but in reality, it’s misleading.

Cloud costs don’t behave that way.

An average across six features might suggest that each one costs $2,000 per month to operate. But when you peel it back, you might discover:

- One feature costs $5,500

- Another costs around $3,000

- Four others are under $1,000

But when you lump all spend together, you hide both your silent margin killers and your surprisingly efficient features.
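A quick sketch makes the effect concrete using the per-feature figures above, with the four small features assumed at a hypothetical $875 each. It computes the average from the individual costs rather than taking it as given, then shows how far each feature sits from that average:

```python
from statistics import mean

# Hypothetical monthly cost per feature: one expensive feature,
# one mid-range feature, and four small ones.
feature_costs = {
    "feature_a": 5_500,
    "feature_b": 3_000,
    "feature_c": 875,
    "feature_d": 875,
    "feature_e": 875,
    "feature_f": 875,
}

average = mean(feature_costs.values())
print(f"Peanut-butter view: every feature costs ${average:,.0f}/month")

# Per-feature view: how far each feature sits above or below the average.
for name, cost in sorted(feature_costs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: ${cost:,}/month ({cost - average:+,.0f} vs. average)")
```

The average lands at $2,000, a figure no single feature actually costs, while the most expensive feature runs $3,500 above it.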

Instead, analyze variance at the level of:

- Feature or product module

- Individual customer or contract

- Environment (prod, staging, QA, sandbox)

- Contract tier (Standard vs Enterprise)

- Region

- Deployment model

- Team or engineering group

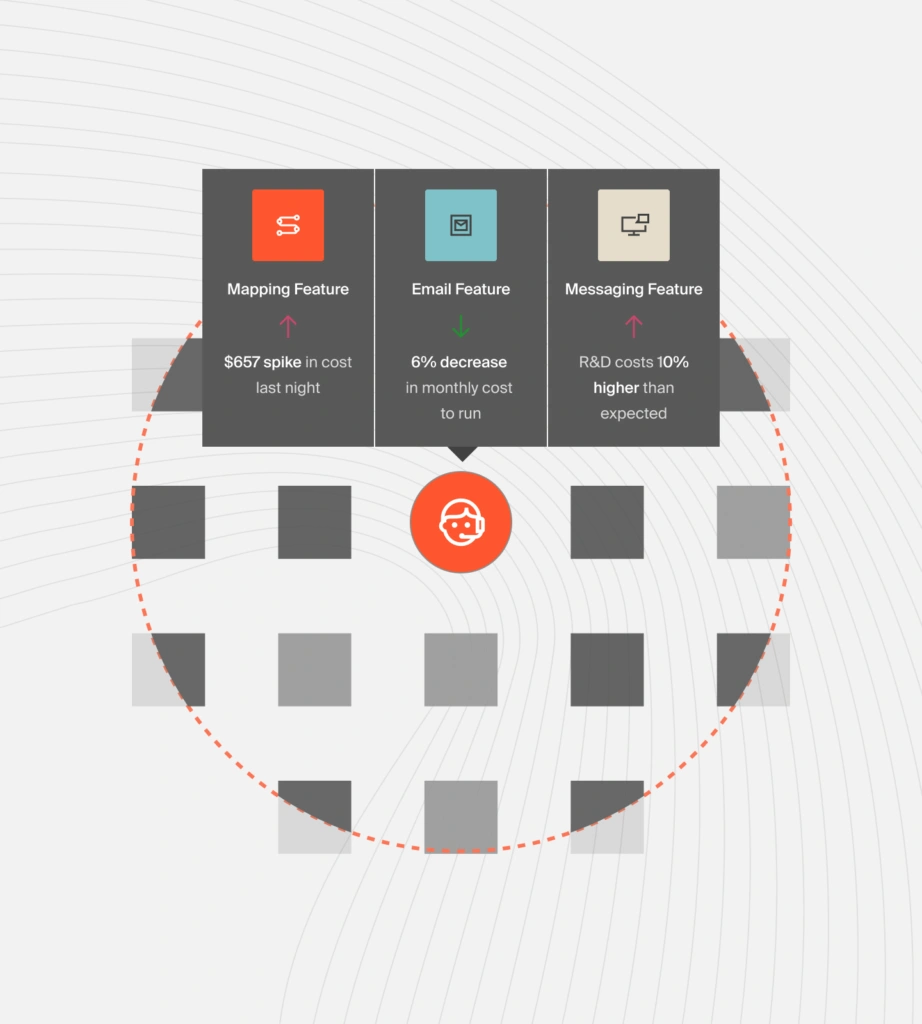

One of the most powerful (and often underused) dimensions we swear by is Cost per Feature.

This dimension shows what it truly costs to run, maintain, or support a specific feature, so you can evaluate:

- Should this feature move into a paid tier so it can pay for itself?

- Are its usage and value growing enough to justify further investment?

- Should we refactor it to make it more efficient?

- Or is it no longer viable, and should it be decommissioned as soon as possible to cut costs?

These are not questions you can confidently answer with gut feeling or a raw AWS Cost and Usage Report. You need real cloud cost intelligence, the kind that connects spend to specific features, individual customers, and particular outcomes.

This is straightforward to do in CloudZero. You can also get personalized guidance from a Certified FinOps Professional if you need help identifying insights, interpreting patterns, and turning your cost data into decisions. It’s how CloudZero customers see ROI within 14 days.

4. Turn variance analysis into action

Budget variance analysis isn’t meant to reveal who overspent. It should help your team understand what changed and what to do about it, moving the conversation from defensiveness to decision-making.

Instead of pointing fingers, variance analysis should reveal:

- What happened

- Why it happened

- Whether it created value or wasted money

- What decision it informs (for example, whether to invest, optimize, reforecast, or keep monitoring a feature or trend)

You want to be able to look at variance and answer, “Is this something we should fix, scale, or fund?”


5. Choose the right response, every time

Once you understand the variance type, deciding what to do next becomes much clearer:

- Optimize when there’s waste or inefficiency

- Invest further when variance reflects healthy growth or adoption

- Reforecast when the budget was based on outdated assumptions

- Monitor when the pattern is emerging but not yet material
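Once each variance has been attributed, the choice can be written down as an explicit rule so teams apply it consistently. The sketch below is one hypothetical encoding: the driver labels, the 5% materiality threshold, and the order of the checks are assumptions to adapt, not a standard:

```python
from enum import Enum


class Response(Enum):
    OPTIMIZE = "optimize"
    INVEST = "invest further"
    REFORECAST = "reforecast"
    MONITOR = "monitor"


# Hypothetical driver labels assigned while attributing the variance (step 2).
RESPONSE_BY_DRIVER = {
    "waste": Response.OPTIMIZE,            # waste or inefficiency
    "growth": Response.INVEST,             # healthy growth or adoption
    "outdated_plan": Response.REFORECAST,  # the budget's assumptions were wrong
}


def choose_response(variance_pct: float, driver: str,
                    materiality_pct: float = 5.0) -> Response:
    """Pick a response for one attributed variance."""
    if abs(variance_pct) < materiality_pct:
        return Response.MONITOR  # emerging pattern, not yet material
    # Material but unexplained variance stays on watch until it is investigated.
    return RESPONSE_BY_DRIVER.get(driver, Response.MONITOR)


print(choose_response(20.0, "growth").value)         # invest further
print(choose_response(20.0, "waste").value)          # optimize
print(choose_response(12.0, "outdated_plan").value)  # reforecast
print(choose_response(3.0, "waste").value)           # monitor
```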

This is how you turn budget variance analysis from a spreadsheet exercise into a strategic FinOps capability.

What Next: Turn Budget Variance Into Your Strategic Signal And Competitive Advantage

In cloud and SaaS businesses, budget variance doesn’t exist just to tell you where you went wrong. It also helps you understand where your business is going: faster, slower, more profitably, or more wastefully.

When you analyze variance at the level of features, customers, teams, or environments, it stops being a mere budget anomaly and becomes a strategic signal you can use to tune things to your advantage.

And with CloudZero, you get the real-time cloud cost intelligence to see variance the way it should be seen:

- Map 100% of your cloud spend to the people, products, and processes that drive it

- Attribute variance to specific features, events, deployments, or customer behavior, down to the hour

- Identify whether a spike is healthy (growth), harmful (waste), or simply expected

- Get the context you need to decide whether to optimize, invest, reforecast, or scale

- And if you choose, do it alongside your own Certified FinOps Professional so you can see ROI sooner

Key Takeaways: Budget Variance In The Cloud Era

- Budget variance is not inherently good or bad in SaaS and cloud environments

- Variance must be interpreted in the context of usage, architecture, and business events

- Healthy variance often signals growth, adoption, or successful launches

- Harmful variance usually points to waste, inefficiencies, or weak governance

- FinOps teams use variance to decide when to optimize, invest, reforecast, or monitor

In the cloud, budget variance isn’t just a control mechanism. It’s a strategic signal.

You’ll be in good company, too. Leading teams at Drift, Coinbase, Moody’s, and Duolingo are just a few of the many organizations that use CloudZero to maintain cloud cost leadership.

FAQs

Why does budget variance matter for SaaS companies?

Budget variance matters for SaaS companies because cloud spend flows directly into COGS and gross margins. Variance reveals whether increased spend is tied to value-generating activity—such as customer growth, feature adoption, or enterprise usage—or value-destroying behavior like waste, misconfiguration, or idle resources.

Is all budget variance bad?

No. Budget variance is not inherently good or bad. In cloud environments, variance can be healthy, harmful, or simply expected. For example, a 20% increase in spend could reflect strong adoption of a new AI feature (healthy variance) or a misconfigured Kubernetes workload scaling wastefully (harmful variance). Variance only becomes meaningful when tied to specific teams, features, customers, or workloads — not raw totals.

How do engineering, finance, and product teams interpret variance differently?

Engineering, finance, and product teams view budget variance through different lenses:

- Engineering focuses on deployments, scaling behavior, and infrastructure utilization

- Finance focuses on budgets, forecasts, COGS, and margins

- Product focuses on features, adoption, ROI, and customer impact

Budget variance becomes actionable only when all three functions analyze the same cost data mapped to a shared business context, such as features, customers, or environments.

How does budget variance affect COGS and margins?

For most SaaS companies, cloud spend is a direct component of COGS. Unexplained or poorly attributed budget variance inflates COGS and compresses gross margins.

Tracking variance at the level of customers, features, or workloads helps teams identify which parts of the product drive profitable growth and which dilute margins over time.

How does CloudZero help with budget variance analysis?

CloudZero helps teams analyze budget variance by mapping 100% of cloud spend to the features, customers, teams, and environments that drive it. This makes it clear why spend changed, who owns the variance, and whether it created business value or waste.