AI cost management is the practice of tracking, allocating, and optimizing the cloud infrastructure costs tied to building, running, and scaling AI workloads. It differs from traditional cloud cost optimization because AI infrastructure behaves differently at every layer of the stack.

The biggest problem isn’t overspending. It’s that most organizations can’t see where their AI spending is going. Model inference, GPU compute, training jobs, vector database queries, and API calls rarely appear in a cloud bill as “AI.” They show up as EC2, S3, or a vague compute line item.

By the time finance asks questions, the trail is cold. Teams that can’t track AI spend can’t manage it, and they certainly can’t optimize it.

CloudZero billing data shows why: AI spending averaged just 2.5% of total cloud spend across our customer base in December 2025, even as organizations plan to grow monthly AI budgets by 36%, from $62,964 to $85,521. The gap isn’t dishonesty. It’s invisibility.

Most AI cost is buried in compute and storage, uncategorized and unmanaged, and closing that gap demands a different approach from anything traditional cloud cost tools were built to handle.

Why AI Spending Is Harder To Manage Than Traditional Cloud Costs

Unlike traditional workloads, AI workloads introduce cost drivers that don’t behave predictably and don’t show up cleanly in standard billing data. Three problems in particular make AI spending harder to manage.

- Demand is spiky and unpredictable. Inference traffic doesn’t follow a linear growth curve. A single product launch, a new integration, or a shift in user behavior can send GPU utilization and AI cost vertical overnight.

- The cost drivers are non-obvious. Training costs are large but infrequent. Inference costs are smaller per call but compound fast at scale. Token usage, context window length, model selection, caching behavior, and batch size all affect AI spending in ways that don’t map to traditional infrastructure metrics.

- Attribution is hard by default. When a customer request triggers a model inference, that AI cost often lands in a shared compute pool with no tagging, no allocation, and no connection to the product, team, or customer that generated it. CloudZero defines this as the AI attribution problem — the root cause of most AI cost overruns.

Traditional cloud cost tools weren’t built for any of this. They lack the granularity needed for cost-per-token, per-request, and unit economics at the AI layer. Without allocation by team, feature, or customer, there’s no way to tie AI spending to business value, or to forecast it accurately.

Consequently, the teams that manage AI costs most effectively aren’t relying on native cloud tooling. They’re building a structured practice around three connected layers — and applying FinOps for AI principles at each one.

CloudZero’s AI cost intelligence platform breaks down AI spending by model, team, and unit cost, giving engineering and finance teams a shared, real-time view of what AI actually costs the business.

The CloudZero AI Cost Intelligence Framework

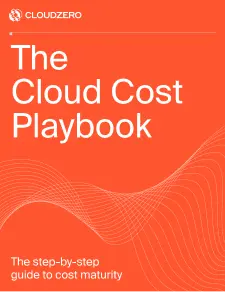

CloudZero’s AI cost intelligence framework organizes AI cost management into three connected layers: visibility, allocation, and optimization. Each depends on the one before it: you can’t allocate what you can’t see, and you can’t optimize what hasn’t been attributed.

Layer 1: Visibility — Know what you’re actually spending on AI

Getting to real AI cost visibility means breaking through the compute and storage abstraction that hides most AI-related spend in cloud billing. CloudZero recommends three concrete actions to get there.

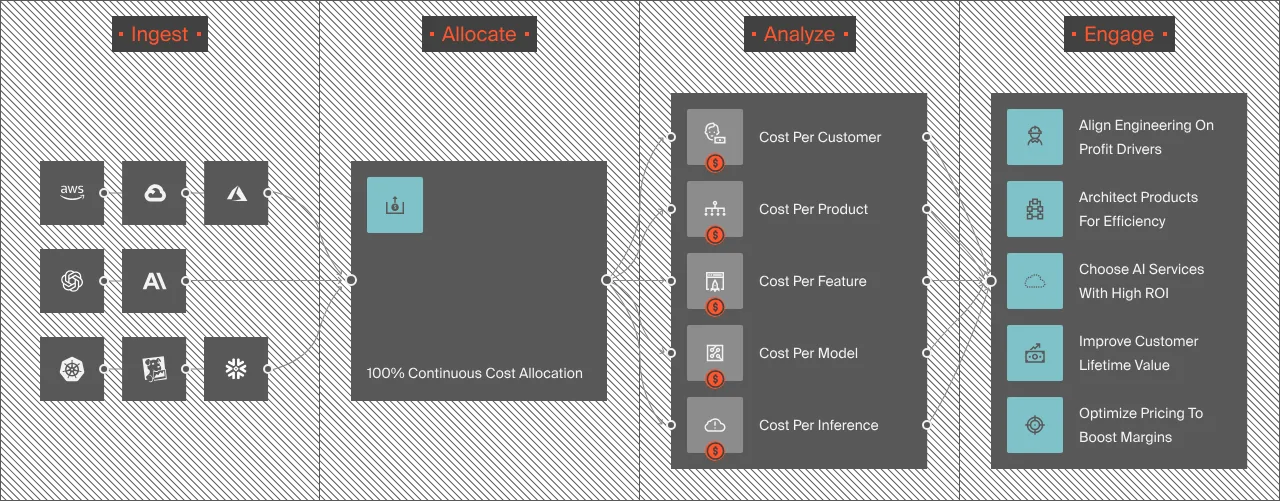

- Tag AI workloads consistently. Every resource associated with an AI workload (training clusters, inference endpoints, vector databases, embedding pipelines) should carry a tag identifying the workload type, the model, and the owning team. CloudZero analysis shows most organizations have significant AI-related resources running with zero cost allocation tags. Without them, cloud cost allocation is impossible at the AI layer.

- Ingest third-party AI API costs. AI spending from providers like Anthropic, OpenAI, or Cohere usually sits outside standard cloud billing entirely. It needs to be normalized and mapped alongside infrastructure spend for a complete picture of total AI cost. CloudZero is the first cloud cost platform to announce integration directly with Anthropic, pulling Claude usage and cost data into the same platform used for AWS, Azure, and GCP. Read more: The AI cost ‘black box’ — and how CloudZero provides clarity.

- Connect cost data to model telemetry. Billing data alone doesn’t explain cost movement. Understanding why AI spending is rising requires connecting spend to model metrics: token counts, request volumes, GPU utilization, latency, and batch processing rates.
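As a rough illustration of the tagging action, the sketch below checks a resource inventory for missing AI tags. The tag keys (`workload_type`, `model`, `owner_team`) and the inventory format are assumptions made up for this example, not a CloudZero or cloud-provider schema.

```python
from typing import Dict, List

# Hypothetical tag keys; substitute whatever your tagging policy requires.
REQUIRED_TAGS = ("workload_type", "model", "owner_team")


def missing_tags(resource: Dict) -> List[str]:
    """Return the required tag keys that are absent or empty on a resource."""
    tags = resource.get("tags") or {}
    return [key for key in REQUIRED_TAGS if not tags.get(key)]


def tag_coverage(resources: List[Dict]) -> float:
    """Fraction of resources that carry every required tag."""
    if not resources:
        return 0.0
    compliant = sum(1 for r in resources if not missing_tags(r))
    return compliant / len(resources)


# Illustrative inventory, not real data.
inventory = [
    {"id": "inference-endpoint-1",
     "tags": {"workload_type": "inference", "model": "summarizer-v2",
              "owner_team": "search"}},
    {"id": "gpu-training-cluster", "tags": {"workload_type": "training"}},
    {"id": "vector-db-1", "tags": {}},
]

for res in inventory:
    gaps = missing_tags(res)
    if gaps:
        print(f"{res['id']}: missing {', '.join(gaps)}")
print(f"coverage: {tag_coverage(inventory):.0%}")
```

Running a check like this on a schedule gives a simple coverage number to track while tagging improves.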

A useful way to frame AI cost visibility is as a maturity progression:

- At Level 0, AI is buried in general compute with no distinct view.

- At Level 1, tagging provides partial visibility.

- At Level 2, spend is aggregated into dashboards with unit metrics like cost per inference.

- At Level 3, AI costs are fully connected to business outcomes — enabling budgeting and trade-offs like any other line item.

Most organizations sit at Level 0 or 1. Getting to Level 2 is the most impactful jump, and it’s what makes the next layer possible.

Layer 2: AI cost allocation — What it is and why it matters

Visibility tells you what you’re spending. AI cost allocation tells you who’s responsible, and that distinction is what makes cost management actionable.

AI cost allocation is the process of attributing AI-related spend to the specific teams, products, features, or customers that generate it. Without allocation, you have a bill but no accountability, and no way to know whether your AI investment is paying off.

Most teams stall here because showback and chargeback models weren’t designed for shared inference infrastructure. When multiple teams route requests through a single model endpoint, accurate allocation requires request-level telemetry, not just resource-level tags.

CloudZero’s patented CostFormation engine handles this directly, allocating 100% of cloud and AI costs, including untagged and shared resources, to the right products, features, or customers without requiring perfect tag coverage first.
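Independent of any particular product, the idea behind request-level allocation can be sketched in a few lines. The example below splits one shared endpoint's cost across teams in proportion to the tokens each team's requests consumed. The log format and the choice of tokens as the weighting key are assumptions for illustration; GPU-seconds or request counts could be used the same way.

```python
from collections import defaultdict
from typing import Dict, List


def allocate_shared_cost(total_cost: float, requests: List[Dict]) -> Dict[str, float]:
    """Split a shared endpoint's cost across teams in proportion to tokens used."""
    tokens_by_team: Dict[str, int] = defaultdict(int)
    for req in requests:
        tokens_by_team[req["team"]] += req["input_tokens"] + req["output_tokens"]

    total_tokens = sum(tokens_by_team.values())
    if total_tokens == 0:
        return {}  # nothing to weight by, so nothing is allocated
    return {team: total_cost * tokens / total_tokens
            for team, tokens in tokens_by_team.items()}


# Hypothetical request-level telemetry for one shared model endpoint.
request_log = [
    {"team": "search", "input_tokens": 1200, "output_tokens": 300},
    {"team": "support", "input_tokens": 400, "output_tokens": 100},
    {"team": "search", "input_tokens": 800, "output_tokens": 200},
]

shares = allocate_shared_cost(100.0, request_log)
for team, cost in sorted(shares.items()):
    print(f"{team}: ${cost:.2f}")
```

Resource-level tags alone could not produce this split, because both teams hit the same endpoint.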


The core unit metric this unlocks is cost per inference, which CloudZero defines as the total cost of a model response divided by the number of inference calls in a given period, adjusted for model, context length, and hardware. This is the foundational AI unit economic metric. Without it, any optimization effort is guesswork.
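A minimal sketch of the metric, assuming usage records that already carry a cost and a call count. Treating "adjusted for model and context length" as computing the ratio separately per segment is our reading for this example, and the field names and numbers are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def cost_per_inference(total_cost: float, inference_calls: int) -> float:
    """Total cost for a period divided by inference calls in that period."""
    if inference_calls <= 0:
        raise ValueError("inference_calls must be positive")
    return total_cost / inference_calls


def cost_per_inference_by_segment(records: List[Dict]) -> Dict[Tuple[str, str], float]:
    """Compute the metric separately for each (model, context bucket) segment."""
    cost: Dict[Tuple[str, str], float] = defaultdict(float)
    calls: Dict[Tuple[str, str], int] = defaultdict(int)
    for rec in records:
        key = (rec["model"], rec["context_bucket"])
        cost[key] += rec["cost"]
        calls[key] += rec["calls"]
    return {key: cost_per_inference(cost[key], calls[key]) for key in cost}


# Illustrative numbers only.
usage = [
    {"model": "light", "context_bucket": "short", "cost": 50.0, "calls": 100_000},
    {"model": "frontier", "context_bucket": "short", "cost": 300.0, "calls": 20_000},
    {"model": "frontier", "context_bucket": "long", "cost": 900.0, "calls": 15_000},
]

unit_costs = cost_per_inference_by_segment(usage)
for segment, unit_cost in unit_costs.items():
    print(segment, f"${unit_cost:.4f} per inference")
```

Segmenting keeps a cheap, high-volume model from masking an expensive long-context one in a single blended average.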

Useful resource: AI cost optimization at scale.

Layer 3: AI cost efficiency — Reduce cost without reducing value

With visibility and allocation in place, AI cost efficiency improves and optimization becomes precise rather than speculative. The goal isn’t to cut AI spending indiscriminately; it’s to eliminate waste while protecting performance and user experience.

Across our customer base, AI workloads routinely run below optimal GPU utilization during autoscaling events. This is what we call AI Scaling Economics. When a model endpoint scales out too early or fails to scale down after a spike, that idle capacity still hits the bill. It’s one of the most common sources of AI cost waste, and one of the least visible.
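To put a rough number on idle capacity, one simple approximation is to multiply provisioned GPU-hours by the hourly rate and by the unused fraction of capacity. The function below is a sketch under that assumption; the rate and utilization figures are invented for illustration.

```python
def idle_gpu_cost(gpu_hours: float, hourly_rate: float, avg_utilization: float) -> float:
    """Estimate the cost of provisioned-but-unused GPU capacity.

    avg_utilization is the average fraction (0 to 1) of provisioned
    capacity that was actually doing work over the period.
    """
    if not 0.0 <= avg_utilization <= 1.0:
        raise ValueError("avg_utilization must be between 0 and 1")
    return gpu_hours * hourly_rate * (1.0 - avg_utilization)


# Hypothetical endpoint: 8 GPUs left running for 24 hours after a spike.
gpu_hours = 8 * 24
idle = idle_gpu_cost(gpu_hours, hourly_rate=2.50, avg_utilization=0.35)
print(f"estimated idle cost: ${idle:.2f}")
```

Average utilization hides short bursts, so this is a first-pass estimate rather than a precise accounting.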

The most effective AI cost efficiency levers are:

- Model tiering. Route simple requests to lighter, cheaper models and reserve frontier models for tasks that actually need them. When we look at customers with high-volume, repetitive traffic, tiering is consistently one of the fastest inference cost levers they have available.

- Semantic caching. Storing and reusing responses to repeated or semantically similar queries cuts token spend significantly on high-traffic endpoints, and the savings compound fast. We’ve seen customers credit token caching for part of more than $1 million in savings.

- Batch processing. Workloads that don’t require real-time responses, such as document processing, offline embeddings, or report generation, are cheaper when batched. Identifying all non-latency-sensitive AI workloads is a fast first pass in any AI cost efficiency sprint.

- Context window management. Longer prompts cost more, full stop. Prompt engineering, context pruning, and RAG architectures that retrieve only relevant context are as much a cost technique as an accuracy one.

- Commitment-based pricing. Reserved capacity and committed use discounts can reduce GPU costs for predictable inference volumes. The catch: you need enough visibility to forecast accurately first, which is why this lever comes last.
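Model tiering, the first lever above, can be sketched as a small routing rule plus a cost comparison. Everything specific here is an assumption for illustration: the tier names, the per-token prices (not real provider pricing), the list of "simple" task types, and the single blended price for input and output tokens.

```python
from typing import List, Tuple

# Hypothetical prices per 1,000 tokens; not real provider pricing.
PRICE_PER_1K_TOKENS = {"light": 0.0005, "frontier": 0.01}
# Hypothetical task types considered safe for the lighter model.
SIMPLE_TASKS = {"classification", "extraction", "short_summary"}


def choose_tier(task_type: str, prompt_tokens: int, max_light_tokens: int = 2000) -> str:
    """Send simple, short requests to the light tier; everything else to frontier."""
    if task_type in SIMPLE_TASKS and prompt_tokens <= max_light_tokens:
        return "light"
    return "frontier"


def estimated_cost(tier: str, total_tokens: int) -> float:
    """Blended token cost for one request on the given tier."""
    return PRICE_PER_1K_TOKENS[tier] * total_tokens / 1000


# (task_type, prompt_tokens, output_tokens) for a few illustrative requests.
workload: List[Tuple[str, int, int]] = [
    ("classification", 300, 20),
    ("short_summary", 1500, 200),
    ("multi_step_reasoning", 1200, 800),
    ("extraction", 6000, 100),
]

tiered_cost = sum(
    estimated_cost(choose_tier(task, prompt), prompt + output)
    for task, prompt, output in workload
)
all_frontier_cost = sum(
    estimated_cost("frontier", prompt + output) for _, prompt, output in workload
)
print(f"tiered: ${tiered_cost:.5f}  all-frontier: ${all_frontier_cost:.5f}")
```

In practice the routing rule is the hard part; a static task list like this one is only a starting point and should be validated against output quality.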

For organizations building guardrails around AI cost efficiency, CloudZero recommends a progressive approach: start with anomaly detection and soft budget nudges, then move to hard gates as maturity increases.
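The progression from nudges to gates can be expressed as a small policy function. This is a sketch of the idea, not CloudZero's implementation: the 80% soft threshold, the action names, and the z-score anomaly rule are all assumptions chosen for the example.

```python
from statistics import mean, pstdev
from typing import List


def is_anomalous(history: List[float], today: float, threshold: float = 3.0) -> bool:
    """Flag today's spend if it sits more than `threshold` std devs above the mean."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu, sigma = mean(history), pstdev(history)
    if sigma == 0:
        return today > mu
    return (today - mu) / sigma > threshold


def budget_action(spend_to_date: float, budget: float,
                  soft_ratio: float = 0.8, enforce_hard_gate: bool = False) -> str:
    """Return 'ok', 'nudge', 'alert', or 'block' for the current budget position."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend_to_date / budget
    if ratio >= 1.0:
        # Early-maturity teams only alert; mature teams can turn on the hard gate.
        return "block" if enforce_hard_gate else "alert"
    if ratio >= soft_ratio:
        return "nudge"
    return "ok"


daily_spend = [100.0, 104.0, 98.0, 101.0, 97.0]  # illustrative history
print(is_anomalous(daily_spend, today=160.0))
print(budget_action(8500.0, 10000.0))
print(budget_action(10500.0, 10000.0, enforce_hard_gate=True))
```

Keeping the hard gate behind a flag lets a team run the same policy in advisory mode first and switch on enforcement later.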

Useful resource: Smarter AI cost optimization with guardrails that scale.

FinOps For AI: Why The Old Playbook Doesn’t Work

The three-layer framework above reflects a broader shift in how cloud financial management needs to evolve.

FinOps for AI isn’t a separate discipline. It’s an extension of FinOps best practices to cover AI-specific cost behavior, and it’s something every team managing AI spending now needs.

FinOps for AI means new metrics (cost per inference, per training run, and per token), new allocation models (request-level attribution rather than resource-level tagging), and new governance mechanisms (per-team AI spend budgets, model usage policies, and inference cost thresholds).

It also means accepting that the tools and workflows built for virtual machines and reserved instances weren’t designed for token-based economics and volatile inference costs.

Our FinOps In The AI Era: A Critical Recalibration report tells the story plainly: formal cloud cost programs now exist at 72% of organizations — nearly double the prior year — yet mean Cloud Efficiency Rate dropped from 80% to 65%, with unmanaged AI spending as the primary culprit.

The FinOps Foundation’s 2026 State of FinOps shows the same gap: organizations ranked visibility over optimization as their most urgent AI priority, signaling that allocation is still where most organizations are stuck.

And our own data shows that customers using third-party cost tracking platforms are more confident evaluating AI ROI than those without.

The organizations building cost-effective AI practices aren’t treating AI spend as a separate problem. They’re treating it as the next frontier of their existing FinOps work.

For a deeper look at how that plays out: Stop asking what AI costs — ask if it’s worth it.

How To Track AI Spend: 4 Starting Points

Understanding the framework is one thing. Building the practice is another. For teams starting from scratch, CloudZero’s AI cost intelligence framework points to four concrete first steps for tracking AI spend effectively.

- Audit your tagging coverage. Query your cloud billing data for the past 90 days and identify all resources with zero cost allocation tags. A significant share will be AI-related. This is your baseline problem statement.

- Map your AI workload inventory. List every AI-related workload in production: training jobs, inference endpoints, embedding pipelines, vector databases, third-party API integrations. For each, identify the owning team and the product or feature it supports.

- Define your primary unit cost metric. Pick the metric that matters most for your business — cost per inference, cost per token, cost per model run, or cost per customer interaction. CloudZero defines cost per inference as the total cost of a model response divided by the number of inference calls in a given period, adjusted for model and context length. Define it once and align engineering and finance on the same number.

- Establish a 30-day baseline. Use the first 30 days to understand what normal AI spending looks like, broken down by workload, team, and unit metric. Without a baseline, every optimization decision is a guess.
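The first and last steps can be approximated with very little code once billing data is exported as rows. The sketch below assumes a hypothetical row shape (`date`, `cost`, `tags`) and an `owner_team` tag key; real billing exports use different column names, so treat this as an outline of the calculation only.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List


def untagged_share(rows: List[Dict]) -> float:
    """Share of total cost coming from rows with no allocation tags at all."""
    total = sum(r["cost"] for r in rows)
    if total == 0:
        return 0.0
    untagged = sum(r["cost"] for r in rows if not r.get("tags"))
    return untagged / total


def daily_baseline_by_team(rows: List[Dict]) -> Dict[str, float]:
    """Average daily cost per team, over the days on which that team had spend."""
    daily: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))
    for r in rows:
        team = (r.get("tags") or {}).get("owner_team", "unallocated")
        daily[team][r["date"]] += r["cost"]
    return {team: mean(list(by_day.values())) for team, by_day in daily.items()}


# Illustrative billing rows, not real data.
billing_rows = [
    {"date": "2025-01-01", "cost": 40.0, "tags": {"owner_team": "search"}},
    {"date": "2025-01-01", "cost": 10.0, "tags": {}},
    {"date": "2025-01-02", "cost": 60.0, "tags": {"owner_team": "search"}},
    {"date": "2025-01-02", "cost": 30.0, "tags": {}},
]

print(f"untagged share of spend: {untagged_share(billing_rows):.1%}")
for team, avg in daily_baseline_by_team(billing_rows).items():
    print(f"{team}: ${avg:.2f} per day")
```

The "unallocated" bucket is the number to drive down first; its size is the baseline problem statement from step one.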

For the full data picture on where AI spending stands today, see the CloudZero State of AI Costs 2025 report.

What Good AI Cost Management Looks Like

With a baseline established and the framework in motion, the question shifts from “how do we start?” to “how do we know it’s working?”

According to CloudZero research, mature AI cost management practices and the teams building cost-effective AI operations share three characteristics:

- They measure AI cost per inference, not just total spend. Total spend is a lagging indicator. Unit cost shows whether efficiency is improving as volume scales, and it often is, which means a rising bill isn’t always bad news.

- They attribute AI spending to the teams and products generating it. Shared infrastructure without AI cost allocation encourages engineers to optimize for performance alone. Attribution changes that dynamic. AI cost sprawl is almost always a symptom of missing allocation, not missing intent.

- They connect AI cost to business outcomes. Cost per inference means nothing without a business result attached to it. The organizations getting this right have shifted from asking “how much did we spend on AI?” to asking “was it worth it?” — and they have the unit economics to answer. That’s what cost-effective AI actually looks like in practice.

The Proof Is In The Numbers: How Global Organizations Manage AI Costs With CloudZero

CloudZero manages more than $15 billion in cloud and AI spending across some of the world’s most sophisticated engineering organizations, including Toyota, Duolingo, Grammarly, DraftKings, PicPay, Expedia, and Moody’s.

Recognized as a Visionary in the Gartner Magic Quadrant for Cloud Financial Management Tools and a Strong Performer in the Forrester Wave, we’ve spent years solving the exact problem this article describes: making AI cost visible, attributable, and connected to business outcomes.

A few examples from our customer base:

- A global SaaS platform managing 50+ LLMs was drowning in AI costs spread across GPT, Claude, Llama, and dozens of other models with no way to track AI spend by product, customer, or team. We gave them granular AI cost allocation by customer, region, app, and user tier — connected directly to cost-per-token and cost-per-user metrics. They uncovered over $1 million in savings and cut compute spend by more than 50%, a clear example of what cost-effective AI operations actually look like at scale.

- Progress Software used CloudZero to drive FinOps for AI accountability across their engineering org, and in the process, prevented their Claude service from accruing unnecessary costs.

- An AI-native search company had zero visibility into OpenAI spend that made up 25% of their entire cloud bill. Within minutes of connecting CloudZero, they had full AI cost allocation by token type and live spend trends across every AI provider in their environment, turning a blind spot into a driver of AI cost efficiency.

If you’re ready to do the same, take a free tour of CloudZero or start with a free cloud cost assessment.