Quick Answer

As of May 2026, there are three ways to run Claude on AWS: Claude on Amazon Bedrock (AWS-operated, data inside the AWS boundary), the new Claude Platform on AWS (Anthropic-operated, billed through AWS Marketplace in Claude Consumption Units), and the direct Anthropic API (separate billing). Per-token rates are identical across all three. The differences are billing mechanics, feature availability, data residency, and cost visibility.

Claude Platform on AWS is a service that gives AWS customers direct access to Anthropic’s native Claude platform, including the full API, console, and beta features like Managed Agents, billed through their existing AWS account. It launched in general availability on May 11, 2026, making AWS the first cloud provider to offer this integration.

For platform engineers, FinOps practitioners, and engineering leaders evaluating Claude deployment paths, the question isn’t which path costs less per token. The rates are identical. The question is which path gives you the features you need, the data boundary you require, and the cost visibility to answer “what did that Claude spend actually buy us?”

That last question is the one most organizations skip. It’s also the one that determines whether your AI investment compounds or just accumulates. CloudZero tracks Claude API costs across thousands of production workloads and has helped customers identify over $1 million in AI savings by connecting token-level spend to business outcomes.

Claude On Bedrock Vs. Claude Platform Vs. Direct Anthropic API Features

Amazon Bedrock Claude pricing is the same on a per-token basis whether you use Bedrock, Claude Platform on AWS, or the direct Anthropic API.

Where costs diverge is in who operates the service, where your data goes, how you get billed, and which optimization features are available on day one.

| Feature | Claude on Amazon Bedrock | Claude Platform on AWS | Direct Anthropic API |
|---|---|---|---|
| Operator | AWS | Anthropic | Anthropic |
| Data boundary | Inside AWS | Outside AWS (Anthropic) | Outside AWS (Anthropic) |
| Billing | AWS (per-token line items) | AWS Marketplace (CCUs) | Anthropic (per-token) |
| Auth | AWS IAM + SigV4 | AWS IAM + SigV4 | Anthropic API key |
| Audit logging | CloudTrail | CloudTrail | Anthropic console |
| Prompt caching | Available (may lag on new features) | Available (same day as Anthropic) | Available |
| Batch processing (50% off) | Yes, with S3 integration | Yes | Yes |
| Web search ($10/1K searches) | No | Yes | Yes |
| Web fetch | No | Yes | Yes |
| Provisioned Throughput | Yes (hourly commitments) | No | No |
| Guardrails, Knowledge Bases | Yes | No | No |
| Managed Agents, MCP connector | No (Bedrock has its own Agents) | Yes (beta) | Yes (beta) |
| New feature availability | Days to weeks after Anthropic | Same day | Same day |
| Fast Mode (Opus 4.6, 6x pricing) | No | No | Yes (beta) |

For a complete breakdown of all Anthropic model rates, caching multipliers, and batch discounts, see our Anthropic API pricing guide.

The most consequential row on this table isn’t a pricing line. It’s “new feature availability.” Teams that depend on prompt caching or batch processing to keep costs under control should verify that the specific optimization they need is live on Bedrock before assuming parity.

Claude Platform on AWS eliminates that lag entirely, because it runs the same Anthropic infrastructure that powers the direct API.

How AWS Billing Works For Claude Platform Vs. Bedrock

This is where the two AWS Claude paths stop looking like siblings and start looking like cousins who happen to share a last name. And it’s where FinOps for AI teams should pay close attention.

Bedrock billing

Claude on Amazon Bedrock shows up as standard Bedrock line items in your AWS bill. You see token-level charges broken out by model. You can then use cost allocation tags and AWS Cost Categories in Cost Explorer to attribute spend to teams, projects, or environments.

Bedrock also supports Provisioned Throughput (hourly reserved capacity) for predictable workloads, and its batch inference integrates directly with S3. If your organization already has tagging standards and FinOps workflows built around Cost and Usage Reports, Bedrock spend fits neatly into those systems.

Claude Platform on AWS billing

Claude Platform on AWS introduces a different unit entirely: the Claude Consumption Unit (CCU). Here’s the conversion flow:

- Anthropic calculates your token usage at standard per-model rates

- Any negotiated discounts are applied

- The total is converted to CCUs at $0.01 per CCU (100 CCUs = $1.00 USD)

- AWS Marketplace reports the CCU quantity hourly

On your AWS bill, you’ll see a single CCU line item. Not a per-model breakdown. Not a per-team split. One number. For example, a workload consuming 10 million input tokens and 2 million output tokens on Sonnet 4.6 costs $60 on all three paths — but on Claude Platform, it appears as 6,000 CCUs on your AWS Marketplace invoice. That’s simpler for procurement but harder for engineering attribution. You know how much you spent. You don’t automatically know which team, product, or model drove it.
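The four-step conversion can be checked with a few lines of Python. This is a minimal sketch that assumes the list rates quoted in this article. It models a negotiated discount as a simple fractional reduction. The function names and the discount parameter are illustrative only and are not part of any AWS or Anthropic API. Real private-offer terms may be structured differently.

```python
from decimal import Decimal

# List rates in USD per million tokens (input, output), as quoted in this article.
RATES_PER_MTOK = {
    "haiku-4.5": (Decimal("1"), Decimal("5")),
    "sonnet-4.6": (Decimal("3"), Decimal("15")),
    "opus-4.7": (Decimal("5"), Decimal("25")),
}
CCU_PER_USD = Decimal(100)  # $0.01 per CCU, i.e. 100 CCUs = $1.00


def usage_cost_usd(model: str, input_tokens: int, output_tokens: int,
                   discount: Decimal = Decimal(0)) -> Decimal:
    """Steps 1-2: price tokens at list rates, then apply a fractional discount.

    `discount` is a hypothetical parameter (0.10 = 10% off).
    """
    in_rate, out_rate = RATES_PER_MTOK[model]
    list_usd = (input_tokens * in_rate + output_tokens * out_rate) / Decimal(1_000_000)
    return list_usd * (1 - discount)


def usd_to_ccu(usd: Decimal) -> Decimal:
    """Step 3: convert the discounted USD total into Claude Consumption Units."""
    return usd * CCU_PER_USD


# The Sonnet 4.6 example: 10M input + 2M output tokens, no discount.
cost = usage_cost_usd("sonnet-4.6", input_tokens=10_000_000, output_tokens=2_000_000)
print(cost, usd_to_ccu(cost))  # prints: 60 6000
```

Decimal is used instead of float so that billing arithmetic stays exact to the cent.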

One operational detail worth flagging: if you have an existing Bedrock private offer with negotiated discounts, Anthropic’s documentation explicitly warns to contact your account representative before activating Claude Platform on AWS. Discounts cannot be applied retroactively to usage that occurs before a Claude Platform private offer is accepted.

Two paths, one budget, no shared language

Here’s the practical problem these billing mechanics create.

A growing number of organizations will use both paths: Bedrock for production inference behind Guardrails, Claude Platform for agent development with beta features. Sometimes intentionally. Sometimes because different teams made different procurement decisions and nobody compared notes until the bill arrived.

Two billing mechanisms. Two line items. One budget that finance wants reconciled by Thursday. When the same model’s usage is split this way on one cloud provider, reconciliation gets harder, not easier. Bedrock spend is itemized by model, while Claude Platform spend arrives as a single CCU total. The total Claude on AWS footprint just doubled in surface area. The visibility most teams have didn’t keep pace.

The billing mechanics determine how your costs appear. The next section covers how to make those costs smaller.

How To Track And Optimize Claude API Costs Across Deployment Paths

Cost optimization only works when you can see what you’re optimizing. Here are the levers, in order of impact.

- Route by task, not by default. Haiku 4.5 at $1/$5 per million input/output tokens handles classification, routing, and extraction. Sonnet 4.6 at $3/$15 covers most production inference. Opus 4.7 at $5/$25 earns its rate on complex reasoning and autonomous agents. A routing layer based on prompt length and task type is the AI equivalent of rightsizing your EC2 fleet: same output, lower spend.

- Cache the prompts that recur. Prompt caching at 90% off cached reads is the single highest-impact feature for any workload that reuses system prompts, documents, or conversation context. If you’re passing the same 50K-token system prompt across thousands of requests without caching, you’re paying full price for the same work on every call.

- Batch what doesn’t need an instant answer. The 50% batch discount applies across all models and all deployment paths. Content generation, data classification, document analysis: if the use case can tolerate a 24-hour window, it belongs in the batch queue.

- Benchmark before any model migration. The Opus 4.7 tokenizer shift (up to 35% more tokens for identical text) is one example, but any model swap changes your effective cost per request. Run your actual prompts through both models and compare total token counts before committing to a migration in production.

- Unify visibility across billing mechanisms. This is the hard one, and the one that makes or breaks AI cost intelligence at scale. Bedrock charges arrive as token-denominated line items. Claude Platform charges arrive as CCUs. Neither format answers “what does Claude cost us per customer?” or “which product is driving this month’s Anthropic spend?” Those answers require a normalization layer that maps both streams to business dimensions.
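To make the routing, caching, and batch levers concrete, here is a minimal Python sketch of a task-based router and a per-request cost estimator. It assumes the list rates quoted above. Cached reads are billed at 10% of the input rate and batch requests at 50% off. It ignores the premium charged when a prefix is first written to the cache. The task labels and the route, Request, and request_cost names are hypothetical and are not part of any Anthropic or AWS API.

```python
from dataclasses import dataclass

# List rates in USD per million tokens (input, output), as quoted in this article.
RATES_PER_MTOK = {
    "haiku-4.5": (1.0, 5.0),
    "sonnet-4.6": (3.0, 15.0),
    "opus-4.7": (5.0, 25.0),
}
CACHE_READ_MULTIPLIER = 0.10  # cached reads billed at 10% of the input rate ("90% off")
BATCH_MULTIPLIER = 0.50       # batch requests billed at 50% of the standard rate

# Hypothetical task labels; map your own task taxonomy here.
TASK_TO_MODEL = {
    "classification": "haiku-4.5",
    "routing": "haiku-4.5",
    "extraction": "haiku-4.5",
    "complex_reasoning": "opus-4.7",
    "autonomous_agent": "opus-4.7",
}


def route(task_type: str) -> str:
    """Pick a model by task type; anything unlisted falls back to Sonnet."""
    return TASK_TO_MODEL.get(task_type, "sonnet-4.6")


@dataclass
class Request:
    task_type: str
    uncached_input_tokens: int
    cached_input_tokens: int  # prefix tokens served from the prompt cache
    output_tokens: int
    batch: bool = False


def request_cost(req: Request) -> float:
    """Estimated USD for one request. Ignores cache-write premiums."""
    in_rate, out_rate = RATES_PER_MTOK[route(req.task_type)]
    input_usd = (req.uncached_input_tokens * in_rate
                 + req.cached_input_tokens * in_rate * CACHE_READ_MULTIPLIER)
    total = (input_usd + req.output_tokens * out_rate) / 1_000_000
    return total * (BATCH_MULTIPLIER if req.batch else 1.0)


# The 50K-token system prompt example, routed to Sonnet 4.6 by the fallback.
uncached = Request("summarize", uncached_input_tokens=50_000, cached_input_tokens=0, output_tokens=0)
cached = Request("summarize", uncached_input_tokens=0, cached_input_tokens=50_000, output_tokens=0)
print(f"${request_cost(uncached):.3f} vs ${request_cost(cached):.3f} per request")
# prints: $0.150 vs $0.015 per request
```

For the benchmarking lever, don't assume token counts carry over between models. Feed the estimator counts measured on your real prompts for both the old and the new model, for example from the usage field the Messages API returns with each response.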

This last point is the difference between cost monitoring and cost intelligence. Monitoring tells you the bill went up. Intelligence tells you why, and tells you if the spend was worth it. That distinction matters more when you’re splitting Claude usage across deployment paths, which brings us to the question most teams face next.
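The normalization layer from the last lever can be sketched in Python as follows. The sketch assumes that Bedrock rows already carry a USD cost and an optional team tag. The Claude Platform CCU line item carries no per-team breakdown, so the sketch splits it using spend shares derived from your own usage telemetry. Proportional allocation is an assumption here, not a feature of either billing feed. The row format, the shares, and every function name are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

USD_PER_CCU = 0.01  # 100 CCUs = $1.00


@dataclass
class CostRecord:
    source: str  # "bedrock" or "claude_platform"
    team: str    # the business dimension (team here; could be customer or feature)
    usd: float


def from_bedrock(rows):
    """Bedrock rows are already in USD; attribute them by a hypothetical 'team' tag."""
    return [CostRecord("bedrock", row["tags"].get("team", "untagged"), row["cost_usd"])
            for row in rows]


def from_claude_platform(ccu_total, spend_share_by_team):
    """Convert one CCU line item to USD and split it by externally measured shares.

    The shares are an assumption: the AWS bill shows only the CCU total, so they
    must come from your own per-team usage logs and should sum to 1.
    """
    usd = ccu_total * USD_PER_CCU
    return [CostRecord("claude_platform", team, usd * share)
            for team, share in spend_share_by_team.items()]


def spend_by_team(records):
    """Aggregate normalized records from both billing streams by team."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec.team] += rec.usd
    return dict(totals)


records = from_bedrock([
    {"cost_usd": 40.0, "tags": {"team": "search"}},
    {"cost_usd": 10.0, "tags": {}},
]) + from_claude_platform(6_000, {"search": 0.25, "agents": 0.75})
print(spend_by_team(records))
# prints: {'search': 55.0, 'untagged': 10.0, 'agents': 45.0}
```

The "untagged" bucket is deliberate: surfacing unattributed Bedrock spend is usually the first step toward fixing tagging gaps.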

Claude On AWS Vs. Direct Anthropic API: Cost And Feature Tradeoffs

For organizations not committed to AWS, the direct Anthropic API remains a clean option. The decision comes down to procurement and integration.

The strongest argument for the AWS paths is billing consolidation. If your organization has committed AWS spend through an Enterprise Discount Program (EDP), Claude Platform on AWS lets you retire those commitments against Anthropic usage. That’s real money, not theoretical savings. You also get IAM-based access control and CloudTrail audit logging at no additional cost.

The strongest argument for the direct API is simplicity: one auth mechanism, one billing relationship, no CCU conversion to reason about.

Most enterprises will end up on the AWS paths. The billing consolidation and IAM integration alone justify it. The remaining question is which AWS path, and how many teams end up using both without telling each other.

Choose Bedrock if you need data to stay within the AWS boundary, want Guardrails and Knowledge Bases, or have strict regional data residency requirements.

Choose Claude Platform on AWS if you want every native Anthropic feature from day one, are building with Managed Agents or MCP connectors, and can operate without AWS-boundary data processing. Note that Managed Agents sessions include runtime pricing (billed to the millisecond while the session is running) on top of standard token costs — a cost layer that doesn’t exist on Bedrock.

Use both if your teams have different requirements. Just make sure the cost visibility infrastructure can handle the complexity you’re introducing.
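Managed Agents runtime is billed on top of tokens, so a per-session estimate needs both terms. The sketch below is a hedged illustration. This article does not quote a runtime rate, so usd_per_runtime_hour is a placeholder you would fill in from current Anthropic pricing. The function name is hypothetical.

```python
MS_PER_HOUR = 3_600_000


def managed_agent_session_cost(token_cost_usd: float, runtime_ms: int,
                               usd_per_runtime_hour: float) -> float:
    """Token cost plus session runtime billed per millisecond.

    usd_per_runtime_hour is a placeholder input, not a published price.
    """
    runtime_usd = runtime_ms * usd_per_runtime_hour / MS_PER_HOUR
    return token_cost_usd + runtime_usd


# Illustrative numbers only: $2.00 of tokens, a 30-minute session, a made-up $1/hour rate.
print(managed_agent_session_cost(2.0, runtime_ms=1_800_000, usd_per_runtime_hour=1.0))
# prints: 2.5
```

A Bedrock-only cost model has no runtime term at all. Comparing paths on token costs alone will understate agent workloads on Claude Platform.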

Can you run Claude Code on AWS?

This is a question Claude Code users have been asking since Bedrock launched Claude models, and the answer just gained a second path. Claude Code can already run against Bedrock. Its documentation describes a Bedrock mode, enabled with the CLAUDE_CODE_USE_BEDROCK environment variable and your standard AWS credentials.

What’s new is that Claude Code can also run through Claude Platform on AWS. You point Claude Code at your regional endpoint, set your workspace ID, and authenticate with IAM credentials. Same tool, same models, billed through your AWS account, with native Anthropic features available the same day they ship.

For teams that adopted Claude Code for development workflows and want that native feature surface while keeping billing consolidated on AWS, Claude Platform is the path. For teams running batch inference or RAG pipelines, Bedrock still makes more sense. The model underneath is the same. The billing and feature surface are not.

Every path described above shares one limitation: none of them natively answers the question “what business value did this Claude spend produce?” That’s not a criticism of Anthropic or AWS. Billing systems track consumption. Answering “was it worth it?” requires connecting consumption to outcomes.

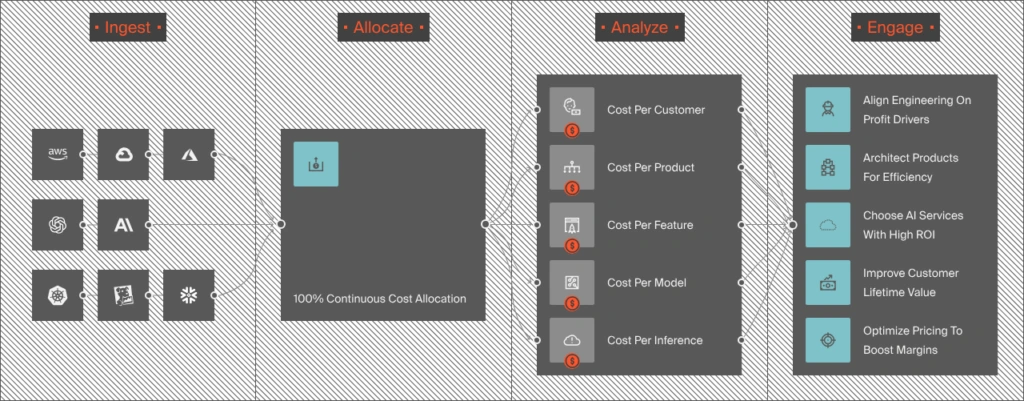

How CloudZero Tracks Claude Costs Across Every Deployment Path

Three deployment paths for one model family create a specific problem: spend fragments across billing mechanisms before anyone builds a unified view.

Most teams discover the problem after it’s already a line item. A Bedrock integration here. A Claude Platform workspace there. A direct API key that a product team spun up for prototyping six months ago and never decommissioned. Each path with its own billing unit, its own cost allocation model, and its own blind spots. The AI equivalent of three credit cards from the same bank, each with different statements.

CloudZero was the first cloud cost platform to integrate directly with Anthropic, and also pulls AWS billing data including Bedrock and Marketplace charges. For teams running Claude across multiple paths, CloudZero normalizes every source into a single cost-per-inference metric, allocated by customer, feature, team, or any business dimension you define.

CloudZero manages $15 billion+ in cloud and AI spend and tracks 50-plus LLMs across its customer base. One customer achieved over $1 million in AI savings and a 50%+ reduction in compute after gaining model-level cost visibility.

Toyota, Grammarly, Skyscanner, and Coinbase use CloudZero to manage their cloud and AI costs. Schedule a demo or take a free cloud cost assessment to see where your Claude spend stands.