To reduce cloud costs, organizations need to address three root causes: over-provisioned resources, shared infrastructure without clear owners, and cloud bills that can’t be explained at the feature or customer level.

The most effective programs combine rightsizing, commitment-based discounts, idle resource elimination, and unit economics — and deliver 20–30% reductions in monthly spend without impacting performance. CloudZero customers average 22% savings in year one.

Cloud Cost Reduction Strategies At A Glance

| Strategy | Savings Potential | Effort | Time to Results | Best For |
| --- | --- | --- | --- | --- |
| Eliminate idle/zombie resources | 5–20% | Low | 30–60 days | Quick wins |
| Rightsize compute | 15–25% | Medium | 60–90 days | Most organizations |
| Commitment-based pricing | 20–60% | Medium | Immediate after purchase | Stable workloads |
| Storage tiering | Up to 90% on eligible data | Low | 30–60 days | Data-heavy workloads |
| Egress optimization | Variable | Medium–High | 60–120 days | Multi-region architectures |
| Unit economics / full allocation | Sustained reduction | High | 90+ days | Long-term programs |

Cloud bills don’t just grow, they compound. A misconfigured autoscaling policy, an untagged Kubernetes cluster, a dev environment left running through the weekend: each is small on its own. Together they quietly erode margins, because unlike headcount or real estate, cloud spend scales automatically and silently.

Reducing cloud costs is now one of the most pressing challenges in enterprise technology. Global public cloud spending reached $723.4 billion in 2025, up 21.5% year-over-year, according to Gartner, and is projected to approach $880 billion in 2026. Yet only 30% of organizations can accurately identify where their cloud budget is going, according to CloudZero’s State of Cloud Cost Intelligence Report.

Cloud cost optimization is the practice of eliminating the gap between what you provision and what you actually use, and connecting the spend you keep to measurable business outcomes. Structured programs usually deliver 20–30% reductions in monthly spend without impacting performance or velocity.

This guide covers the strategies that produce those results, what makes them durable, and where most organizations go wrong.


Why Cloud Costs Keep Rising Even When Teams Are Trying To Manage Them

Before getting into solutions, it is worth understanding why the problem persists despite widespread investment in FinOps practices and tools.

Three structural forces drive persistent cloud overspend:

- Provisioning bias. Engineers provision for peak demand, not average demand. That is rational (downtime costs more than compute), but it creates persistent over-provisioning across compute, storage, and Kubernetes workloads. Resources are sized for a load that occurs 5% of the time and run at that level continuously.

- Ownership gaps. Shared infrastructure (a central data platform, a shared Kubernetes cluster, a multi-tenant application) generates costs that don’t map cleanly to any single team or product. When nobody owns a cost, nobody optimizes it.

- Invisible spend. Most cloud bills contain thousands of line items across multiple services, regions, accounts, and providers. Without cost allocation logic that connects those line items to business owners and business outcomes, the bill is a black box. You can see the total. You cannot explain it.

Cloud cost savings strategies that stick address all three. The ones that don’t, such as one-time rightsizing exercises, tagging mandates without enforcement, or dashboards nobody acts on, tend to produce short-term savings followed by a return to baseline.

1. How do you get complete visibility into cloud costs?

The first rule of cloud cost reduction is that you cannot optimize what you cannot see. Most organizations are working with an incomplete picture, and making optimization decisions on incomplete data is how well-intentioned cloud cost savings initiatives undermine themselves.

Complete visibility means more than opening AWS Cost Explorer. It means:

- Knowing which team, product, or customer owns every dollar of spend, including shared and untagged resources

- Seeing costs normalized across all providers, not separate dashboards for AWS, GCP, Azure, Snowflake, OpenAI, Anthropic and more

- Understanding cost in business terms, not just infrastructure terms

This matters for cloud cost reduction specifically because without full allocation, teams cut infrastructure that supports profitable features and leave waste running in areas they cannot see. The result is savings on paper and margin erosion in practice.

Only 13% of organizations have successfully allocated 75% or more of their cloud costs, according to CloudZero’s research, a threshold below which optimization decisions are structurally unreliable.
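To make the 75% threshold concrete, here is a minimal sketch of how allocation coverage could be measured. The `LineItem` type, the owner names, and the dollar amounts are hypothetical and only illustrate the arithmetic; a real bill would have thousands of rows and a richer ownership model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LineItem:
    service: str
    cost: float
    owner: Optional[str]  # None means no team or product owns this cost yet


def allocation_coverage(items: list[LineItem]) -> float:
    """Return the fraction of total spend that maps to a named owner."""
    total = sum(item.cost for item in items)
    if total == 0:
        return 0.0
    allocated = sum(item.cost for item in items if item.owner)
    return allocated / total


# Hypothetical bill, for illustration only
bill = [
    LineItem("ec2", 6000.0, "checkout-team"),
    LineItem("s3", 1500.0, "data-platform"),
    LineItem("eks-shared", 2000.0, None),
    LineItem("nat-gateway", 500.0, None),
]

coverage = allocation_coverage(bill)
meets_threshold = coverage >= 0.75
print(f"Allocated: {coverage:.0%}")  # 7,500 of 10,000 -> Allocated: 75%
```

In this example the shared cluster and the NAT gateway are the unallocated quarter of the bill, which is typical of where ownership gaps sit.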

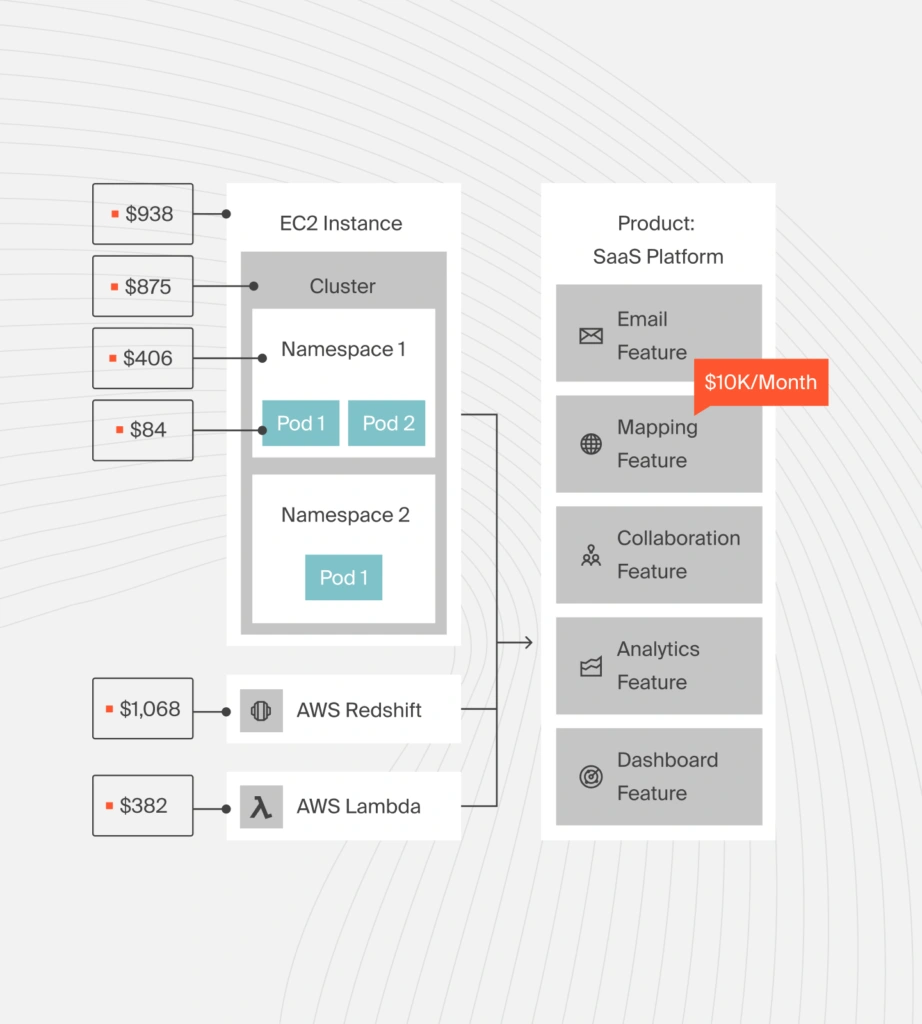

How CloudZero approaches this differently. Most cloud cost management tools depend on perfect tagging to achieve cost allocation. CloudZero’s CostFormation technology uses a code-based allocation model (similar to Infrastructure as Code, but applied to cost) to attribute 100% of spend regardless of tag quality.

This includes shared resources, multi-tenant architecture, and Kubernetes workloads that native cloud tools simply cannot allocate. The result is a complete picture of who and what is driving costs, which is the precondition for every other optimization strategy on this list. You can learn more about how it works in our guide to cloud cost allocation methods.

2. How does rightsizing reduce cloud infrastructure costs?

Rightsizing, matching instance types and sizes to actual workload requirements, is one of the most reliably effective levers for reducing cloud costs. It is also the strategy most commonly executed once and then forgotten, which is why the savings rarely hold.

Workloads change. An instance sized correctly in Q1 may be significantly over-provisioned by Q3 as traffic patterns shift, features ship, or usage scales down.

Effective rightsizing covers several resource types:

- Compute instances running below 20–30% CPU utilization consistently are candidates for downsizing or consolidation. Most organizations find 15–25% of compute can be rightsized without performance impact.

- Kubernetes pods and nodes introduce their own rightsizing challenge. CPU and memory requests set at deployment time frequently don’t reflect actual runtime consumption. Over-set requests lead to over-provisioned nodes and wasted cluster capacity. CloudZero’s Kubernetes cost visibility connects container costs to business owners so teams can prioritize the rightsizing that actually affects margin.

- Databases, such as RDS instances, Redshift clusters, and BigQuery, are frequently over-provisioned relative to actual query load. Database rightsizing tends to be more conservative than compute rightsizing because performance impacts are less predictable, but regular utilization review can surface significant savings.

- Storage accumulates silently. EBS volumes, S3 buckets, and similar resources continue generating costs long after the workloads they served have changed or disappeared. Automated storage auto-scaling eliminates the over-provisioning that comes from manual sizing decisions.
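The compute check in the list above can be sketched in a few lines. This is an illustrative filter, not a production rightsizing engine: the instance IDs and utilization samples are made up, and requiring both the average and the peak to sit under the threshold is one possible reading of "consistently" below 20–30%.

```python
from statistics import mean

CPU_THRESHOLD = 0.30  # upper end of the 20-30% range discussed above


def downsizing_candidates(cpu_samples: dict[str, list[float]],
                          threshold: float = CPU_THRESHOLD) -> list[str]:
    """Return instance IDs whose CPU utilization stays below the threshold.

    Both the average and the peak must be under the threshold, so a bursty
    instance is not flagged just because it is quiet most of the time.
    """
    candidates = []
    for instance_id, samples in cpu_samples.items():
        if not samples:
            continue  # no data, no recommendation
        if mean(samples) < threshold and max(samples) < threshold:
            candidates.append(instance_id)
    return sorted(candidates)


# Hypothetical utilization samples (fraction of CPU in use)
usage = {
    "i-web-1": [0.12, 0.18, 0.15, 0.22],    # quiet all the time
    "i-batch-1": [0.05, 0.08, 0.95, 0.07],  # low average, but real peaks
    "i-api-1": [0.55, 0.61, 0.48, 0.70],    # busy
}

candidates = downsizing_candidates(usage)
print(candidates)  # ['i-web-1']
```

Note that `i-batch-1` averages under 30% but is excluded because of its peak. That is the provisioning-bias trade-off in miniature: the average alone would recommend a downsize that the peak cannot tolerate.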

The most durable cloud computing cost savings come from building rightsizing reviews into regular engineering workflows rather than treating them as periodic cleanup tasks with a start and end date.

3. What are idle and zombie cloud resources — and how do you find them?

Idle and zombie resources (compute instances running with no meaningful workload, storage volumes left behind by terminated instances, unattached load balancers, forgotten development environments) are among the cleanest sources of cloud cost savings because eliminating them has zero performance impact. There is no trade-off to manage. The spend is pure waste.

The challenge is finding them at scale. In large cloud environments with hundreds of accounts and thousands of resources, idle resource identification requires systematic monitoring rather than manual review.

Common categories to prioritize include:

- Non-production environments left running continuously when they are only needed during business hours or for active development cycles represent some of the largest quick-win opportunities. Automated scheduling typically reduces non-production compute costs by 60–70% with no engineering impact.

- Unattached storage volumes from terminated instances continue to generate storage costs indefinitely.

- Stale snapshots and backups that have accumulated over years without a defined retention policy compound storage bills silently.

- Idle load balancers and orphaned Kubernetes namespaces add smaller but consistent charges that aggregate to meaningful amounts at scale.
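The 60–70% figure for non-production scheduling follows from simple arithmetic on the hours in a week. The sketch below assumes compute cost is proportional to running hours, which holds for on-demand instances but not for attached storage, which keeps billing while an instance is stopped. The schedules shown are examples, not recommendations.

```python
HOURS_PER_WEEK = 24 * 7  # 168


def schedule_savings(hours_per_day: float, days_per_week: int) -> float:
    """Fraction of always-on compute hours avoided by running on a schedule."""
    running_hours = hours_per_day * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


# Example: a dev environment that runs 12 hours a day, Monday to Friday
savings = schedule_savings(hours_per_day=12, days_per_week=5)
print(f"{savings:.0%}")  # 60 of 168 hours running -> 64%
```

A 10-hour weekday schedule lands at roughly 70%, which is why savings in this range are common for environments that only need to exist during working hours.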

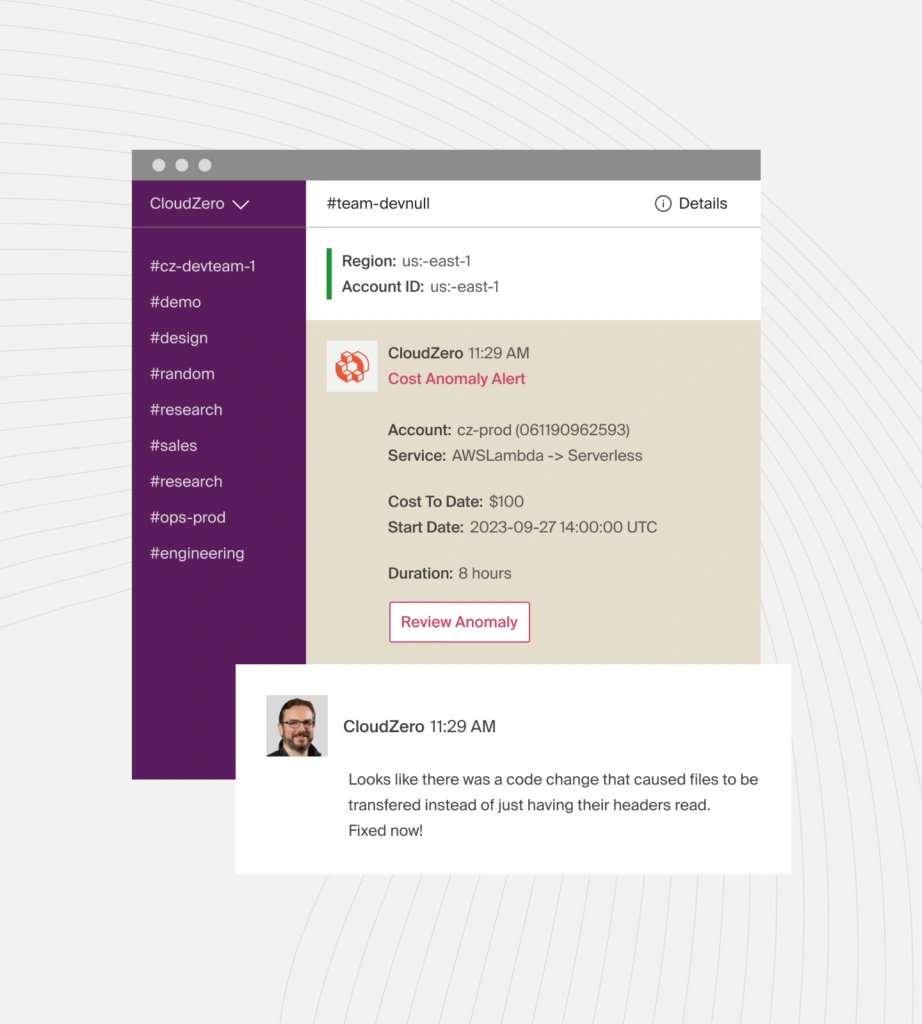

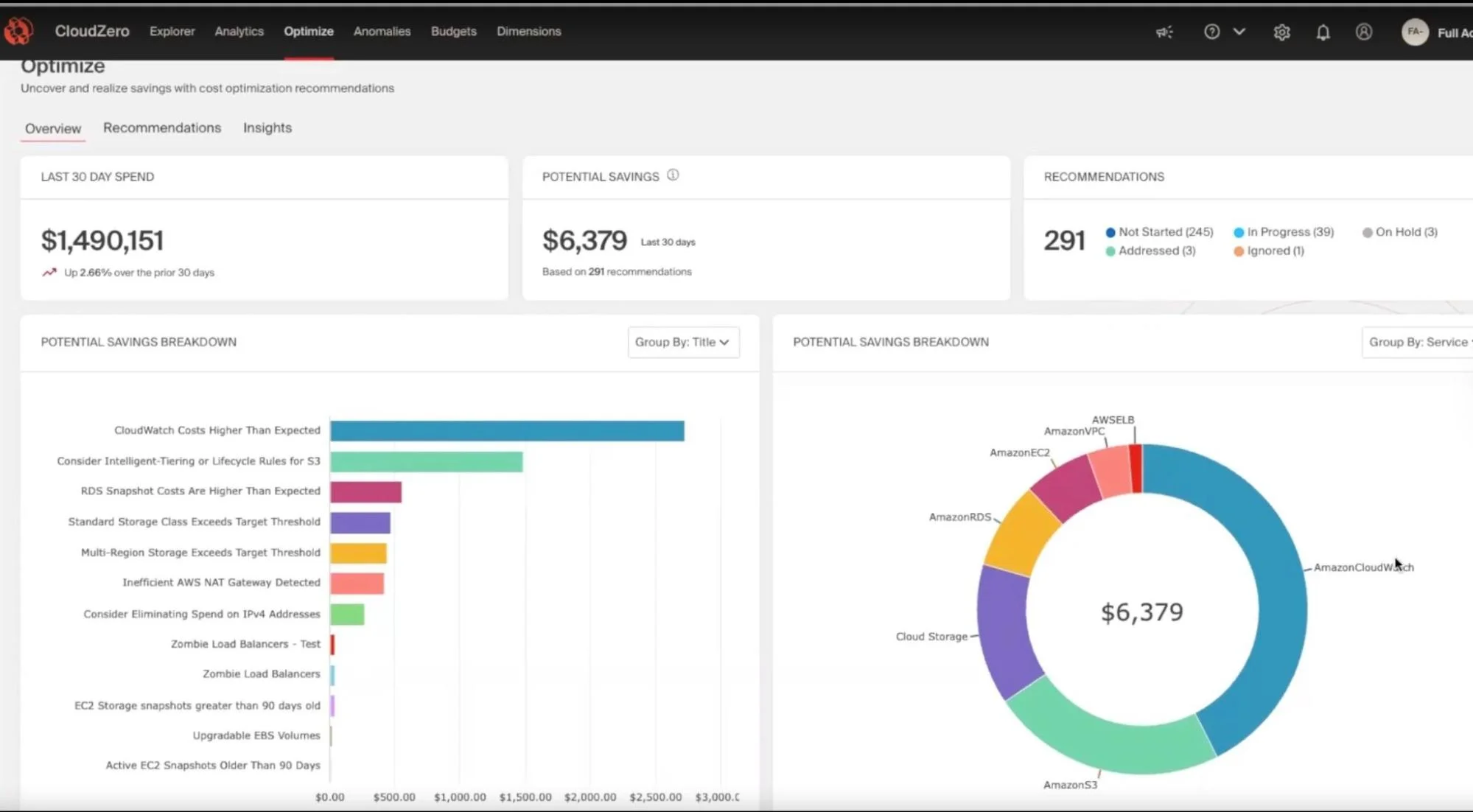

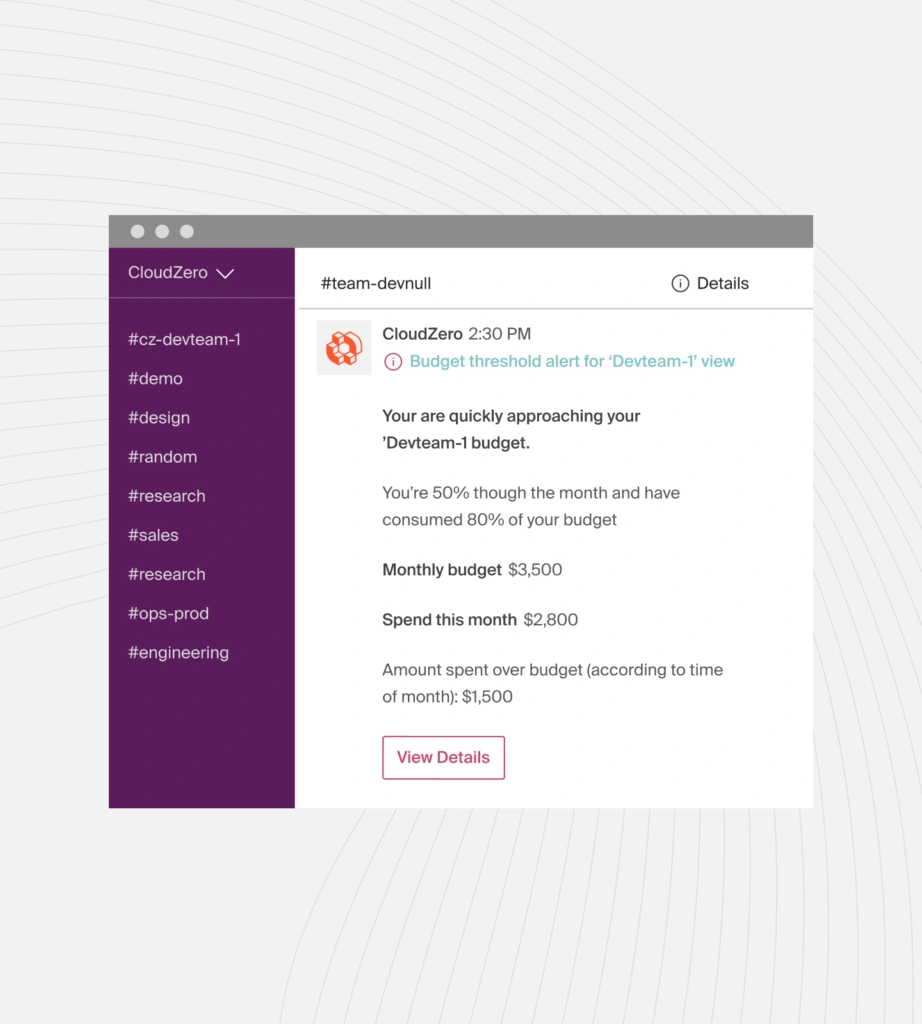

CloudZero’s anomaly detection surfaces cost spikes and unusual patterns in real time, alerting the relevant engineering team rather than a central FinOps function that may not have the context to act. The platform has surfaced more than $20 billion in anomalous spend across its customer base before those costs hit monthly bills.
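For readers who want a feel for what cost anomaly detection involves, here is a deliberately simple sketch: compare today's spend for a service against its trailing history and flag large upward deviations. This is a generic z-score check with made-up numbers and a hypothetical owner map, not a description of how CloudZero's detection works.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], today: float,
                 z_threshold: float = 3.0) -> bool:
    """Flag today's cost if it sits more than z_threshold sample standard
    deviations above the mean of the trailing history."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today > baseline
    return (today - baseline) / spread > z_threshold


# Hypothetical daily cost history for one service, in dollars
history = [100, 104, 98, 101, 97, 102, 99]

# Hypothetical mapping from service to the team that should be alerted
owners = {"image-resizer": "media-team"}

spike = is_anomalous(history, today=180)
normal = is_anomalous(history, today=103)
if spike:
    print(f"alert {owners['image-resizer']}: image-resizer spend is unusual")
```

The owner lookup is the part that matters organizationally: the alert goes to the team that can explain the spike, not to a central queue.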

4. How do Reserved Instances and Savings Plans reduce cloud costs?

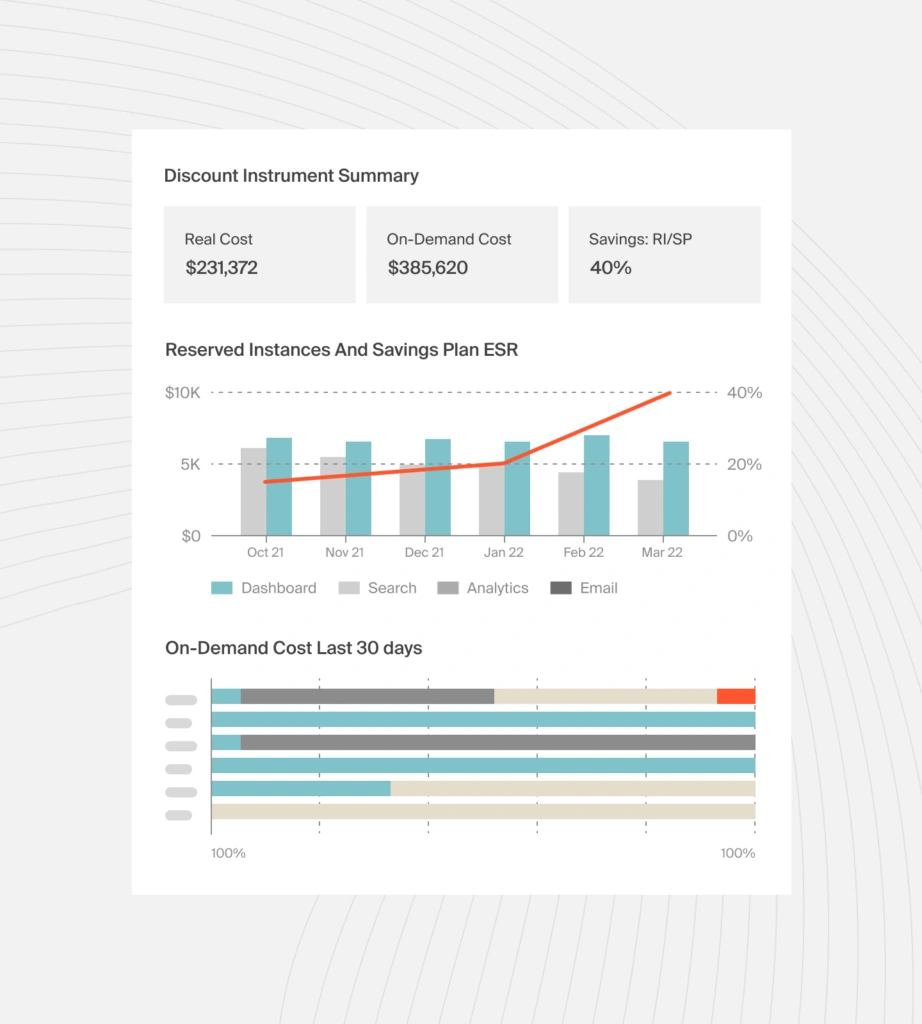

Cloud providers offer significant discounts in exchange for committed usage over one or three years. AWS Reserved Instances and Savings Plans, GCP Committed Use Discounts, and Azure Reservations all operate on this model. When managed well, they represent some of the largest available cloud computing cost savings.

The risk is over-commitment. Organizations without a disciplined approach to commitment management often find themselves over-committed in one area and under-covered in another, capturing neither the savings nor the flexibility they planned for.

Several principles reduce this risk. Cover your steady-state baseline, not your peak: commitments work best for stable, predictable workloads. Use Savings Plans over Reserved Instances where possible, as their flexibility across instance families and regions makes them more resilient to architecture changes.

Automate commitment management instead of relying on manual portfolio reviews, which are operationally intensive and easy to let drift. And layer Spot instances for variable workloads like ML training, batch processing, and CI/CD pipelines, where GPU-backed Spot instances can reduce training costs by 70–80% compared to on-demand pricing.
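The "baseline, not peak" principle is easier to see with numbers. The sketch below uses a simplified model: a commitment is paid for every hour at a discounted rate whether or not it is used, and anything above it is billed on demand. The usage series, the 30% discount, and the $1.00 unit rate are all hypothetical, and the minimum observed usage stands in for the steady-state baseline (a low percentile would be another reasonable choice).

```python
def blended_cost(hourly_usage: list[float], commit_units: float,
                 on_demand_rate: float, discount: float) -> float:
    """Total cost when commit_units are paid for every hour at a discount
    and usage above the commitment is billed at the on-demand rate."""
    committed_rate = on_demand_rate * (1 - discount)
    total = 0.0
    for used in hourly_usage:
        overflow = max(0.0, used - commit_units)
        total += commit_units * committed_rate + overflow * on_demand_rate
    return total


# Hypothetical instance-hours consumed in six consecutive hours
usage = [10, 10, 12, 20, 10, 11]

on_demand_only = blended_cost(usage, 0, on_demand_rate=1.0, discount=0.30)
cover_baseline = blended_cost(usage, min(usage), on_demand_rate=1.0, discount=0.30)
cover_peak = blended_cost(usage, max(usage), on_demand_rate=1.0, discount=0.30)

print(f"on-demand only: {on_demand_only:.2f}")  # 73.00
print(f"cover baseline: {cover_baseline:.2f}")  # 55.00
print(f"cover peak:     {cover_peak:.2f}")      # 84.00
```

In this toy series, committing to the baseline saves about a quarter of the bill, while committing to the peak costs more than buying nothing at all. That is the over-commitment risk described above.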

Commitment management is one area where having the right data makes all the difference. Most teams either over-commit or under-commit, leaving meaningful discounts on the table.

CloudZero’s commitment-based discount management gives teams a complete snapshot of their discount coverage across Reserved Instances and Savings Plans, so purchase decisions are made against actual usage data, instead of guesswork.

5. Why are data transfer and egress costs hard to control?

Data transfer costs grow with every architectural decision that moves data across regions, availability zones, or out to the internet, yet they rarely appear in forecasts until they have already compounded into a major line item.

The problem is not visibility into totals. It is visibility into causes.

A standard cloud bill tells you what you spent on data transfer. It does not tell you which service, team, feature, or customer generated it, so optimization decisions get made against aggregates rather than root causes, which is why egress problems tend to persist.

CloudZero’s Optimize platform surfaces specific data transfer recommendations, including NAT Gateways where processing fees exceed 60% of total gateway costs, EC2 cross-region transfers exceeding 10% of total EC2 data transfer, and internet traffic bypassing an existing CloudFront CDN. Each connects the waste directly to the architectural pattern causing it.
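Two of the thresholds mentioned above are simple ratios, and a rough version of the checks can be sketched as follows. The `NatGatewayCost` type, the gateway IDs, and the dollar figures are hypothetical; the 60% and 10% limits come from the text, but the code is an illustration rather than the platform's actual logic.

```python
from dataclasses import dataclass


@dataclass
class NatGatewayCost:
    gateway_id: str
    hourly_charges: float      # fixed per-hour charges for the gateway
    processing_charges: float  # per-GB data processing charges


def nat_gateways_to_review(gateways: list[NatGatewayCost],
                           processing_share_limit: float = 0.60) -> list[str]:
    """Return gateways where processing fees exceed the given share of total cost."""
    flagged = []
    for gw in gateways:
        total = gw.hourly_charges + gw.processing_charges
        if total > 0 and gw.processing_charges / total > processing_share_limit:
            flagged.append(gw.gateway_id)
    return flagged


def cross_region_share_exceeded(cross_region_cost: float,
                                total_ec2_transfer_cost: float,
                                limit: float = 0.10) -> bool:
    """True when cross-region transfer exceeds the given share of EC2 data transfer."""
    if total_ec2_transfer_cost <= 0:
        return False
    return cross_region_cost / total_ec2_transfer_cost > limit


# Hypothetical monthly figures, in dollars
gateways = [
    NatGatewayCost("nat-a", hourly_charges=32.0, processing_charges=100.0),
    NatGatewayCost("nat-b", hourly_charges=32.0, processing_charges=20.0),
]

flagged = nat_gateways_to_review(gateways)
print(flagged)  # ['nat-a']
print(cross_region_share_exceeded(150.0, 1000.0))  # True
```

A flagged NAT gateway usually points at traffic that could take a cheaper path, which is an architectural question for the team that owns the workload.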


And because CloudZero attributes every egress charge to the team, feature, or environment driving it, the conversation moves from “we have an egress problem” to “here is exactly who owns it and what to fix.” See our full guide to reducing AWS egress costs for a deeper look.

6. How does storage tiering cut cloud storage costs?

Not all data needs to be stored at the same cost. Cloud providers offer storage tiers with different price points based on access frequency and retrieval time.

AWS S3, for example, ranges from S3 Standard to S3 Glacier Deep Archive, with a cost differential exceeding 90% between the two extremes. Similar tiering options exist across GCP and Azure.

For organizations storing large volumes of infrequently accessed data, storage tiering is frequently one of the largest available cloud cost savings per dollar of engineering effort.

Diaceutics, a CloudZero customer, exceeded its S3 spend savings target by 70% through structured storage optimization informed by CloudZero’s visibility into what was actually being accessed and when.

Effective storage tiering requires understanding actual data access patterns, implementing lifecycle policies that move data to cheaper tiers as it ages, and reviewing those policies regularly as access patterns evolve.

S3 Intelligent-Tiering automates some of this, but custom lifecycle policies provide more granular control for certain data types and compliance requirements.
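As an illustration of what a custom lifecycle policy looks like, the sketch below builds a rule that moves objects to progressively colder S3 storage classes as they age. The `logs/` prefix and the 30/90/365-day schedule are assumptions chosen for the example; the right values depend on your actual access patterns and retention requirements. The dictionary follows the shape that boto3's `put_bucket_lifecycle_configuration` accepts as its `LifecycleConfiguration` argument, but the code only constructs it and makes no AWS calls.

```python
def build_lifecycle_rule(prefix: str) -> dict:
    """Build one S3 lifecycle rule that tiers objects under `prefix` by age."""
    return {
        "ID": f"tier-{prefix.strip('/')}",
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            # Example schedule only; tune to observed access patterns
            {"Days": 30, "StorageClass": "STANDARD_IA"},
            {"Days": 90, "StorageClass": "GLACIER"},
            {"Days": 365, "StorageClass": "DEEP_ARCHIVE"},
        ],
    }


lifecycle_configuration = {"Rules": [build_lifecycle_rule("logs/")]}

for step in lifecycle_configuration["Rules"][0]["Transitions"]:
    print(f"after {step['Days']} days -> {step['StorageClass']}")
```

Retrieval fees and minimum storage durations apply to the colder classes, so a policy like this is best applied to data you are confident will rarely be read.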

7. How can cloud migration reduce long-term infrastructure costs?

Cloud migration is one of the highest-leverage points for building cost efficiency into your infrastructure from the start. Organizations that migrate workloads to cloud without rethinking architecture inherit on-premises cost patterns (over-provisioned, monolithic, sized for peak) in a billing model that charges for every hour those decisions run.

The cloud migration cost savings available during re-architecture exist precisely because you are making design decisions before they become operational costs. Lift-and-shift migrations that move workloads without modification fail to realize the cost benefits cloud promises.

Re-architecting for cloud-native services such as managed databases, serverless compute, containerized workloads with autoscaling, and right-sizing for actual cloud demand rather than on-premises convention is where migration-driven cloud cost savings are realized.

Migration cost savings opportunities include:

- Replacing self-managed databases with managed services that scale with usage

- Adopting serverless architecture for event-driven workloads that don’t require continuous compute

- Building autoscaling into applications so they provision capacity dynamically rather than statically

CloudZero supports migration teams by providing cost visibility throughout the migration process, helping teams validate that re-architected workloads are actually delivering the cost improvements the business case projected.

8. How do you manage AI and SaaS costs alongside cloud infrastructure?

For most organizations in 2026, cloud cost reduction is no longer purely an AWS/Azure/GCP problem. AI infrastructure costs (LLM inference, GPU compute, token-based API usage) and SaaS platform costs (Snowflake, Databricks, Datadog) operate outside traditional cloud billing and outside most traditional FinOps tools.

This creates a structural blind spot. Teams optimize their EC2 fleet carefully while AI features consume more in inference costs than the entire compute layer. CloudZero’s State of AI Costs research found that average monthly AI spend jumped 36% year-over-year to $85,500 in 2025, yet only 51% of organizations can confidently evaluate the ROI of that spend.

The most effective cloud cost reduction strategies in 2026 extend visibility to cover these areas:

- AI cost management requires token-level attribution by team, feature, or customer. Understanding cost per inference call, not just aggregate model spend, is the difference between a budget line item and a margin management tool. CloudZero’s LiteLLM integration ingests spend from hundreds of LLMs and pulls AI usage into the same cost model that powers cloud visibility, enabling true cost-per-AI-feature analysis alongside traditional infrastructure.

- Snowflake and Databricks cost management calls for query-level visibility into which workloads, teams, or jobs are driving data platform costs, now addressable through CloudZero’s native Snowflake and Databricks integration.

- SaaS cost consolidation means normalizing spend from multiple providers into a single data model through a tool like CloudZero’s AnyCost framework, which ingests cost data from over 50 cloud, data, and AI providers into one normalized view. You can explore which cost sources CloudZero covers in the full integrations list.
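Token-level attribution, the first item in the list above, comes down to pricing each call and grouping by whatever dimension the business cares about. The sketch below is a minimal version of that idea. The model names, per-token prices, and call records are all hypothetical; real prices vary by provider and model, and real usage records would come from your gateway or provider logs.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens
PRICE_PER_1K = {
    "model-a": {"input": 0.003, "output": 0.015},
    "model-b": {"input": 0.0005, "output": 0.0015},
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single inference call from its token counts."""
    price = PRICE_PER_1K[model]
    return (input_tokens / 1000) * price["input"] + \
           (output_tokens / 1000) * price["output"]


def cost_by_feature(calls: list[dict]) -> dict[str, float]:
    """Sum inference cost per product feature."""
    totals: dict[str, float] = defaultdict(float)
    for call in calls:
        totals[call["feature"]] += call_cost(
            call["model"], call["input_tokens"], call["output_tokens"]
        )
    return dict(totals)


# Hypothetical usage records
calls = [
    {"feature": "search-summary", "model": "model-a", "input_tokens": 2000, "output_tokens": 500},
    {"feature": "search-summary", "model": "model-a", "input_tokens": 1000, "output_tokens": 1000},
    {"feature": "autocomplete", "model": "model-b", "input_tokens": 1000, "output_tokens": 200},
]

totals = cost_by_feature(calls)
for feature, cost in sorted(totals.items()):
    print(f"{feature}: ${cost:.4f}")
```

Swapping the `feature` key for a team or customer identifier gives the other attribution views without changing the pricing logic.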

9. Why does engineering ownership of cloud costs matter?

The strategies above are technical. This one is organizational, and it may be the most important of all, because it determines whether cloud cost savings persist or revert.

CloudZero’s State of Cloud Cost research found a strong and consistent correlation between engineering ownership of cloud costs and better outcomes: 81% of organizations report cloud costs are in a healthy range when engineers have some level of ownership. The reverse is equally reliable: organizations where cost visibility is siloed to a central FinOps team show consistently higher rates of overspend.

The reason is structural. Engineers make thousands of infrastructure decisions every week: instance type selection, storage configuration, data retention policies, deployment architecture. These decisions have direct cost implications. Without cost feedback in the engineering workflow, those decisions default to performance and convenience rather than efficiency.

Building cost awareness into engineering culture does not mean asking engineers to prioritize cost above delivery. It means giving them the information they need to make good trade-offs.

Practically, this means surfacing cost anomalies to the engineering teams that own the relevant services, embedding cost data into CI/CD pipelines so developers can see the impact of changes before deployment, and creating shared dashboards that give product, engineering, and finance teams a common view of cost-per-feature and cost-per-customer.
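One way to picture cost data in a CI/CD pipeline is a check that compares the projected monthly cost of a change against the current baseline and reports the difference. The sketch below assumes the two estimates already exist (where they come from is outside its scope), and the 10% limit is an arbitrary example. It is meant as information for the developer, in keeping with the point above that cost should inform trade-offs rather than override delivery.

```python
def cost_gate(current_monthly: float, projected_monthly: float,
              max_increase_pct: float = 10.0) -> tuple[bool, str]:
    """Return (passed, message) for a projected monthly cost change."""
    if current_monthly <= 0:
        return True, "no baseline cost to compare against"
    change_pct = (projected_monthly - current_monthly) / current_monthly * 100
    passed = change_pct <= max_increase_pct
    verdict = "within" if passed else "over"
    message = (f"projected change {change_pct:+.1f}% is {verdict} "
               f"the {max_increase_pct:.0f}% limit")
    return passed, message


# Hypothetical estimates for two pull requests, in dollars per month
ok, ok_message = cost_gate(1000.0, 1050.0)
blocked, blocked_message = cost_gate(1000.0, 1250.0)

print(ok_message)       # projected change +5.0% is within the 10% limit
print(blocked_message)  # projected change +25.0% is over the 10% limit
```

Whether a failed check blocks the merge or only posts a comment is a policy choice; many teams start with a comment so the signal builds trust before it gains teeth.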

CloudZero’s 2025 State of Cloud Cost research documents this pattern clearly: teams that actively used CloudZero to decentralize cost data to engineers consistently outperformed peer organizations on cloud efficiency benchmarks.

Wise used CloudZero to engage more than 250 engineers in proactive cost management. Neon increased engineering cost ownership by 700%. These outcomes don’t come from governance alone; they come from making cost data visible to the people who write the code that generates it.

10. What is unit economics and how does it change cloud cost strategy?

The final and most advanced strategy for cloud cost reduction reframes how success is measured entirely.

Reducing absolute cloud spend is a reasonable goal. But it is an incomplete one, because cloud spend that drives revenue growth, supports profitable customers, or powers high-margin features is spend worth keeping. The goal is not minimum spend. It is maximum return on spend.

Unit economics is the framework that enables this. By measuring cost per customer, product, or feature across both cloud and AI spend, teams can answer not just “how much did we spend?” but “was it worth it?”

This is the central question that separates cloud cost intelligence from cloud cost management, and it is a question that most FinOps tools are not built to answer.

This reframing changes optimization priorities in important ways.

For example: A feature that costs $50,000 per month in cloud infrastructure but drives $2 million in revenue is not a cost reduction target. A feature that costs $40,000 per month and generates minimal usage is, regardless of whether the absolute spend is larger or smaller than the first example.
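The comparison in that example can be expressed as a cost-to-revenue ratio per feature. In the sketch below, the $50,000 and $2 million figures come from the example above and are treated as covering the same period; the $30,000 revenue for the second feature is a hypothetical stand-in for "minimal usage", and the feature names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    monthly_cost: float
    monthly_revenue: float

    @property
    def cost_to_revenue(self) -> float:
        """Cloud cost as a fraction of the revenue the feature drives."""
        if self.monthly_revenue == 0:
            return float("inf")  # all cost, no return
        return self.monthly_cost / self.monthly_revenue


features = [
    Feature("feature-a", monthly_cost=50_000, monthly_revenue=2_000_000),
    Feature("feature-b", monthly_cost=40_000, monthly_revenue=30_000),  # hypothetical revenue
]

# Review the worst ratios first, regardless of absolute spend
review_order = sorted(features, key=lambda f: f.cost_to_revenue, reverse=True)
for feature in review_order:
    print(f"{feature.name}: {feature.cost_to_revenue:.1%} of revenue")
```

Sorted this way, the cheaper feature rises to the top of the review list because it costs more than it returns, while the more expensive one consumes only 2.5% of the revenue it drives.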

Organizations that measure unit economics consistently report three compounding effects: engineering decisions improve because teams can see the cost-per-unit impact of architectural choices; pricing decisions improve because product and finance teams have accurate cost-of-goods data; and waste reduction accelerates because low-value workloads become visible not as abstract cost line items but as margin drains with identifiable owners.

A CloudZero customer, a neobank valued in the tens of billions of dollars, tracks more than 40 unit cost metrics and 45 cost allocations to maintain a clear view of cloud efficiency across its entire product estate. Getting to that level demands allocating 100% of spend to business owners and integrating that allocation with the metrics that represent business value. You can see how this works in practice in our cloud unit economics guide.

Key Takeaways

- Structured cloud cost reduction programs consistently deliver 20–30% reductions in monthly spend without impacting performance or velocity.

- You cannot optimize what you cannot see — full cost allocation across shared resources, Kubernetes, and untagged infrastructure is the precondition for every other strategy.

- One-time optimization exercises reliably revert to baseline; sustained savings require rightsizing, anomaly detection, and cost ownership embedded in engineering workflows.

- The goal is not minimum spend — it is maximum return on spend. Unit economics is the framework that connects cloud costs to business outcomes.

The Missing Piece In Most Cloud Cost Reduction Programs

Most cloud cost reduction strategies stall at the same point: teams can see what they’re spending but not why, and without that context, every optimization decision is a guess.

That is the problem CloudZero was built to solve. After working with hundreds of engineering-led organizations managing billions in cloud spend, a clear pattern emerged: the teams achieving sustained cloud cost savings weren’t just cutting costs. They were building cost intelligence, an understanding of the relationship between their cloud spend and the business outcomes it was producing, expressed in the metrics that connect a cloud bill to a margin.

The path from here to there runs through four capabilities that most tools don’t provide together.

- Full cost allocation without tag dependency, through CostFormation

- Normalized visibility across every cost source, through AnyCost

- Business-context unit economics, through the Dimensions framework

- Real-time anomaly detection that alerts the engineering teams responsible for driving costs

CloudZero customers including Duolingo, Toyota, Upstart, PicPay, Skyscanner, Wise, and Coinbase use this combination to reduce cloud costs and keep them reduced, because the intelligence is embedded in how their engineering teams work, not bolted on as a reporting layer.

If you’re ready to move from cost visibility to cost intelligence, get a free cloud cost assessment to see exactly where your spend is going and what it’s producing.
